Essay on Examination System

Students are often asked to write an essay on Examination System in their schools and colleges. And if you’re also looking for the same, we have created 100-word, 250-word, and 500-word essays on the topic.

Let’s take a look…

100 Words Essay on Examination System

Introduction

Examinations are a crucial part of our education system. They evaluate students’ understanding of subjects, shaping their future.

Role of Examinations

Exams test students’ knowledge and skills. They promote competition, encouraging students to work harder.

Types of Examination

Exams are of many types: oral, written, practical. Each has its own significance in assessing a student’s capabilities.

Drawbacks of Examinations

Exams can cause stress and anxiety among students. They often promote rote learning over understanding.

While exams have their drawbacks, they are still an essential tool for measuring students’ academic performance.

250 Words Essay on Examination System

The examination system is an integral part of the education system, serving as a yardstick to gauge a learner’s understanding of the subjects taught. It is a time-tested method that has been used for centuries to measure students’ intellectual capabilities.

The Purpose of Examinations

Examinations fulfill several purposes. They provide a standard measure to assess students’ knowledge, skills, and understanding of various subjects. Examinations also promote a competitive spirit among students, encouraging them to work harder and perform better.

Criticism of the Examination System

Despite its significance, the examination system has been criticized for its rigid structure and its inability to assess a student’s overall development. Many argue that it encourages rote learning rather than critical thinking and problem-solving skills. Moreover, the high-stakes nature of examinations can cause extreme stress and anxiety among students.

Reforming the Examination System

To address these issues, there is a growing call for reforms in the examination system. These reforms could include a shift from summative to formative assessments, which focus on continuous evaluation and feedback rather than a single high-stakes exam. Incorporating project-based assessments, group work, and presentations could also help evaluate a student’s creativity, teamwork, and communication skills.

In conclusion, while the examination system has its drawbacks, it is an essential tool for assessing students’ academic progress. However, it is imperative to continually refine and adapt this system to ensure it accurately reflects a student’s overall abilities and potential.

500 Words Essay on Examination System

The Examination System: A Critical Evaluation

The examination system, a cornerstone of conventional education, has been a subject of intense debate among educators, students, and policymakers. This essay aims to critically evaluate the examination system, its merits, demerits, and possible alternatives.

Examinations are designed to assess a student’s understanding of a subject within a specific timeframe. They serve as a barometer for gauging academic proficiency, helping institutions to standardize their evaluation process. Examinations often determine a student’s progression through the education system, with high-stakes exams influencing university admissions and future career prospects.

Merits of the Examination System

The examination system has several advantages. Firstly, it offers an objective measure of a student’s grasp of a subject, reducing bias in evaluation. Secondly, it fosters a competitive environment, encouraging students to strive for excellence. Thirdly, examinations can instill discipline and time management skills, as students must organize their study schedules effectively to cover the syllabus.

Drawbacks of the Examination System

Despite its merits, the examination system has significant drawbacks. It often promotes rote learning, where students memorize facts without understanding the context or application. This approach can stifle creativity and critical thinking, essential skills in the modern world. Additionally, examinations can induce stress and anxiety, impacting students’ mental health. High-stakes exams may also lead to an inequitable education system, where students with access to resources and tutoring have an unfair advantage.

Alternatives to the Traditional Examination System

Given these criticisms, many educators advocate for alternatives to traditional examinations. Continuous assessment is one such method, where students are evaluated throughout the academic year based on assignments, projects, and participation. This approach promotes continuous learning and reduces the pressure associated with end-of-term exams.

Another alternative is the portfolio-based assessment, where students compile a portfolio of their work throughout the course. This method allows for a more holistic evaluation, considering creativity, problem-solving, and practical application of knowledge.

Conclusion: A Call for Reform

In conclusion, while the examination system has its merits, it is not without its flaws. The emphasis on rote learning, the stress associated with high-stakes exams, and the potential for inequity necessitate a reevaluation of this system. Alternatives such as continuous assessment and portfolio-based assessment offer promising avenues for a more holistic, equitable, and low-stress evaluation system. As we move forward, it is crucial to adapt our education system to cater to the diverse needs of students, fostering creativity, critical thinking, and a lifelong love for learning.

That’s it! I hope the essay helped you.

Happy studying!

Essay on Examination with Outline Quotations and tips

Best Essay on Examination System in Pakistan for Matric/Inter in 1000-1500 Words

In this post, you will find an essay discussing the examination system in Pakistan, accompanied by quotations. It is intended for students studying in Matric, FSC, and the 2nd year. Class 12 students can use this essay as a practice tool for their annual exams. Similarly, FSC students can write a similar essay titled “Essay on Examination with Outline Quotations and tips” or “Best Essay on Examination System in Pakistan.” If you require additional essays for the 2nd year, please visit our collection of Essays for FSC.

Essay on Examination Outline:

1. Introduction. Examinations are an integral part of our system of education but they are extremely faulty.

2. They fail to give an accurate assessment of a student. They do not test a student’s intelligence or ability. They test only his cramming power. Judging by the examination results, many of the great scientists were quite dull.

3. The element of chance in the examinations. The marking of scripts is never uniform. Some examiners may be strict, others very lenient.

4. Examinations make the work round the year uneven. Students study only near the examinations and idle away their time during the rest of the year.

5. This system adversely affects class teaching. A good teacher feels hampered by the limits imposed by the examination system.

6. Examinations are a necessary evil. If there were no examinations, students would not study at all. And it must be said to their credit that, in spite of all their drawbacks, a good student has not usually failed nor a third-rater topped.

7. The need for reforms. Instead of completely doing away with the system, we should try to introduce certain reforms:

(a) Semester systems can make the work round the year more even.

(b) Marking of scripts should be made more accurate and uniform.

(c) Examinations may be accompanied by a viva voce, particularly in the case of marginal students.

(d) Unfair means should be checked.

Examinations Essay for Matric/Inter in 1000-1500 Words

Introduction:

Examinations have come to stay as a part of our education system. They are considered to be a big nuisance and both the teachers and the students detest them. It is really possible to discover a large number of defects in them; still in the absence of any other satisfactory system of evaluation, it is impracticable to abolish them. They are perhaps evil, yet they are indispensable.

Examinations are often condemned on account of the very prominent role of chance involved in them. The marking of the scripts can never be uniform. Even if we grant that all examiners are sincere and earnest (in fact, many of them are whimsical and willful), we still cannot affirm that examinations are scientifically impartial to all examinees. The possibility of the personal prejudices of an examiner beclouding his better judgment cannot be excluded. If an average script follows three brilliant scripts, it will be awarded poor marks; if it follows two exceptionally poor ones, it will earn a better reward than it deserves. Mr. Shahid might be too strict. He will bewail the poor standards and make fascinating crisscross patterns on the scripts. Mrs. Nadia might be a bit too lenient. She would like to give every student a pass on humanitarian grounds. How far can the awards given by these two examiners be accepted as a fair index of the relative ability of their examinees?

Under the prevailing system of examinations, the students enjoy a ten-month holiday and have a two-month working session. They merrily skip around and fritter their time away for the first ten months. Then, as the examinations approach, one can sniff a chill of seriousness in the air. The atmosphere starts getting heavy, the infection gradually becomes rife, and the students start poring over their books. They skim through their syllabi, just to get the hang of what they are about, manage to stuff their brains with some ill-digested facts temporarily, then forget all about them once the examinations are over. But these two months play havoc with their physique. The whole period is spent in extreme nervous tension. Shave and hair-cut, cosmetics, and coiffures are all forgotten. Chemists are pestered to procure pills causing sleeplessness. The erstwhile lotus-eater suddenly becomes a Ulysses. But to his utter dismay, he often discovers that he is no match for the giant that examination is and collapses with acute nervous exhaustion.

This system exercises an adverse effect on class teaching in two ways. First, a good teacher always finds himself hampered by the limitations imposed by the examination system. He does not teach; he prepares the students for the examination. Secondly, a number of students, by virtue of having a good memory, get into a class where they do not deserve to be. Their lessons being beyond their comprehension, they feel bored in the class and create mischief. It is these students who pollute the atmosphere in the class and are responsible for the widespread indiscipline found in colleges.

But even the devil must be given its due. It must be acknowledged that the examinations do compel students to study a little. Otherwise they would not study even this much. Secondly, despite all the tricks played by chance, it is never noticed that a good student has failed or a third-rater has topped. Thus, although examinations may not be scientifically accurate or impartial, they do substantial justice.

Examiners are appointed en masse and they often do a bad job of the work entrusted to them. But then there is no better substitute for this system. We cannot abolish these examinations. All we can do is to improve upon them so that they cease to be a lottery indiscreetly doling out a few lacs with innumerable blanks.

Related topics: The uses and abuses of examinations. Examinations are a necessary evil.

Essay on Examination Quotations

- “The difference between a good and a poor student is result”. (ETC Wanyanwu)

- “Prepare well! Take two inks; you may never know when one pen will stop writing!” (Ernest Agyemang Yeboah)

- “Examinations were a great trial to me.”

- “The roots of education are bitter, but the fruit is sweet”. (Aristotle)

Tips for Writing an Examination Essay:

- Comprehend the topic: Begin by thoroughly understanding the essay prompt. Ensure a clear understanding of what the topic requires you to discuss.

- Create an essay plan: Develop an outline or structure for your essay. This will help organize your thoughts and ensure a logical flow of ideas.

- Engaging introduction: Start with an attention-grabbing introduction that provides background information on examinations and presents your thesis statement.

- Develop strong arguments: Use body paragraphs to elaborate on your ideas and arguments. Each paragraph should focus on a specific point, supported by evidence and examples.

- Utilize relevant examples: Incorporate real-life examples or personal experiences to illustrate your points and make your essay more relatable and persuasive.

- Maintain focus: Stay on-topic and avoid straying from the main theme of examinations. Ensure each paragraph directly relates to the central subject.

- Be concise and clear: Use clear and concise language to express your ideas. Avoid excessive jargon or complex vocabulary that may confuse the reader.

- Summarize in the conclusion: Provide a summary of your main points and restate your thesis in the conclusion. Leave the reader with a sense of closure.

- Proofread and revise: Take time to review your essay for grammar, spelling, and punctuation errors. Make necessary revisions to improve clarity and coherence.

- Manage time effectively: During an examination, allocate sufficient time for planning, writing, and proofreading to ensure a well-structured and polished essay.

Conclusion for Essay on Examination

Examinations play a significant role in assessing students’ knowledge and progress. While they can be challenging, it is important to approach them as opportunities for growth. However, it is crucial to remember that exams should not be the sole determinant of a student’s abilities or potential. A balanced assessment approach, incorporating various evaluation methods throughout the year, provides a more comprehensive understanding of students’ skills. Additionally, it is essential to prioritize a holistic education that promotes critical thinking and problem-solving. By fostering a love for learning and focusing on overall development, examinations can serve as a means to equip students with essential skills for their future endeavors. Ultimately, the aim should be to create an educational environment that encourages continuous improvement and prepares students to become well-rounded individuals capable of making meaningful contributions to society.

Ameer Hamza

Examinations system essay for 2nd year with outline

Examinations Essay, the Examination System of Pakistan Essay

The difference between try and triumph is a little umph. - Marvin Phillips

Exams are not just tests, they tell you what you possess in your mind - Saif Ullah Zahid

Your final exams grades must not be damaging to your employment and prospect - Anonymous

Much good work is lost for the lack of a little more - Edward H. Herriman

Essay on Our Examination System in Pakistan with Quotations

This post contains a sample Essay on Our Examination System in Pakistan with Quotations for students of FSC, 2nd year. Students of Class 12 can prepare this essay only as practice for annual exams. The essay has been taken from Sunshine English (Comprehensive-II), and I have added some appropriate quotations to it. Students of FSC can write the same essay under the titles Essay on Our Examination System with Quotes, Examination System of Pakistan Essay with Quotations, and Examination System in Pakistan Essay. If you need more essays for 2nd year, you can visit Essays for FSC.

Examination System of Pakistan Essay with Quotations for FSC, 2nd Year Students

Education makes life worth living. A country without a proper system of education can make no progress. Only educated and skilled people can pave the way to progress. For good education, an adequate system of examination is necessary. Unfortunately, our system of examination is not satisfactory. It is replete with faults. The current system of examination tests only the memory of the students. It is not a fair test of knowledge, understanding and comprehension. The questions asked in the examination do not promote the inborn faculties of students. The usual practice among the students is to learn everything by heart without a complete comprehension of it. The present system of examination encourages this practice. Though the students pass the examination in this way, they cannot develop their awareness greatly.

Education is a responsibility on every Muslim, male or female. (Hazrat Muhammad صلی اللہ علیہ وسلم)

Furthermore, the method of evaluation of scripts is faulty. Every examiner has his particular outlook, temperament and a specified way of observation. Even the atmosphere affects the mood of examiners. As a result, the assessment is somewhat personal and therefore inaccurate. Moreover, the questions asked in the examination are subjective; they cannot be awarded exact marks.

In our country, the climate is of the extreme kind. The examinations take place usually in the hot summer. The scorching heat leads to several troubles. The students cannot concentrate fully on their studies. They have to go to the examination hall in the scorching heat of summer. Moreover, our academic terms are short because of extreme weather conditions. Consequently, students cannot read their course fully. This also adds to the difficulties of the students in the examination.

“The roots of education are bitter, but the fruit is sweet”. (Aristotle)

I have some suitable suggestions for the improvement of our examination system.

First, the examinations should be held in the spring season so that the students may work in the pleasant weather conditions. This will enable the students to focus their attention on their studies. This would also increase the length of the academic year.

“The difference between a good and a poor student is result”. (ETC Wanyanwu)

Second, the question papers should be set in such a way that they are a real test of the student’s knowledge and insight.

Third, the declaration of result should be prompt and quick. This will save the time of the students. Even the length of the academic term would be increased.

Fourth, the students securing outstanding positions should be awarded prizes. They should be honoured with scholarships. This will increase the passion for study among the students. I hope that the government will take prompt steps in this regard.

“Prepare well! Take two inks; you may never know when one pen will stop writing!” (Ernest Agyemang Yeboah)

Essay on Our Examination System in Pakistan

- January 17, 2024

Kainat Shakeel

Examinations, a foundation of the educational system, play a vital role in shaping the academic journey of students. In Pakistan, as in many countries, the examination system has undergone significant changes over time. This essay delves into the nuances of the Pakistani examination system, exploring its evolution, the challenges faced by students, and proposals for reform. The examination system in Pakistan holds a vital position in determining the academic prowess of students. It serves as a benchmark for assessing their understanding of the curriculum, critical thinking abilities, and overall academic performance. Examinations, in various forms, have been an integral part of the educational environment in Pakistan.

Evolution of the Examination System

From a historical perspective, examinations in Pakistan have evolved significantly. From traditional oral assessments to the current written and formalized tests, the system has adapted to changing educational requirements. The shift towards a more structured examination format reflects the dynamic nature of education in Pakistan.

Structure of the Current Examination System

In the contemporary scenario, the examination system in Pakistan comprises various types, including board examinations and university assessments. The grading system, with its nuances and evaluation criteria, adds another layer of complexity. Understanding the intricacies of these structures is essential to grasp the difficulties encountered by pupils.

“In the Pakistani examination system, success is frequently equated with grades, overshadowing the significance of holistic learning and practical application of knowledge.”

Challenges Faced by Students

During the examination period, students encounter various difficulties and obstacles that they must overcome. The stress and pressure associated with performing well, coupled with the competitive nature of examinations, create an environment that can be overwhelming. It is crucial to identify these challenges in order to address them effectively.

“Our examination system should be a tool for assessing skills and fostering creativity, but too frequently it becomes a source of stress and anxiety for students in Pakistan.”

Impact on Learning

While examinations serve as a tool for assessing academic progress, their impact on actual learning is a subject of debate. On one hand, examinations can motivate students to study and perform better. On the other hand, critics argue that the current system may prioritize rote memorization over deep understanding.

“Examinations are formidable even to the best prepared, for the greatest fool may ask more than the wisest man can answer.” (Charles Caleb Colton)

Reforms and Proposals

Recognizing the flaws in the current system, ongoing reforms aim to make examinations more student-friendly and reflective of actual learning. Suggestions for improvement include changes in question formats, emphasis on practical applications, and a shift towards continuous assessment.

Technological Integration in Examinations

The integration of technology in the examination process has been a recent development. Digital examinations bring efficiency and accessibility, but concerns about security and access to technology pose challenges.

The Role of Teachers and Parents

Creating a supportive environment during examinations requires collaboration between teachers and parents. Emotional and academic support from these key figures can significantly affect a student’s ability to cope with the challenges posed by examinations.

Alternatives to Traditional Examinations

Exploring alternative assessment methods, such as project-based evaluations, practical demonstrations, and continuous assessment, offers a holistic approach to assessing students. This shift from the conventional test-centric model aims to give a more comprehensive understanding of a student’s capabilities.

Cultural Influences on the Examination System

Cultural attitudes towards examinations in Pakistan contribute to the dynamics of the system. Understanding these influences and comparing them with examination systems in other countries can give valuable insights into the strengths and weaknesses of the Pakistani model.

Coping Strategies for Students

Given the stress associated with examinations, implementing coping strategies becomes essential. Balancing mental health with academic performance is a skill that students need to cultivate. This section explores effective coping mechanisms to navigate the grueling exam period.

“Reforming the examination system in Pakistan is crucial for producing graduates who are not just academically accomplished but also equipped with the skills needed for the complexities of the modern world.”

Government Policies and Impact

Government policies play a vital role in shaping the examination landscape. An analysis of these policies and their impact on students and the education system as a whole is crucial for understanding the broader context of examinations in Pakistan. Considering the current state of the examination system, predictions for the future are essential. Anticipated changes and developments, both in terms of technology integration and pedagogical approaches, will shape the future landscape of examinations in Pakistan.

In conclusion, the examination system in Pakistan is a multifaceted entity with strengths and challenges. While it serves as a crucial metric for academic performance, there is a continuous need for reform and adaptation. Striking a balance between tradition and innovation, addressing the well-being of students, and fostering a positive learning environment are key elements in the ongoing discourse on examinations.

Kainat Shakeel is a versatile Content Writer Head and Digital Marketer with a keen understanding of tech news, digital market trends, fashion, technology, laws, and regulations. As a storyteller in the digital realm, she weaves narratives that bridge the gap between technology and human experiences. With a passion for staying at the forefront of industry trends, her blog is a curated space where the worlds of fashion, tech, and legal landscapes converge.

Examination System In Pakistan Essay

This article explains the examination system in Pakistan. Every country has its own system for conducting exams at each level of education. Pakistan likewise follows a system, regarded as an easy way of conducting exams, at the school, college, and university levels. The examination system differs at each level according to the level of studies, as explained below. The Ministry of Education, Government of Pakistan, is collaborating with the boards of directors to upgrade the study system followed by schools and colleges, under which a student finds it easier to pass a class: the minimum passing mark is 33%, which is the lowest aggregate compared with any examination system in the world. For further details on the examination system in Pakistan, keep reading.

The educational system of Pakistan is divided into five levels:

- Primary level

- Middle level

- Secondary level

- Intermediate level

- University level

All the schools, colleges and universities in Pakistan have been set in three categories namely:

- Government schools

- Private schools

Examination System in Pakistan:

A look at the educational and examination system of Pakistan shows that most institutions are in poor condition because of a lack of attention and a shortage of funds. Not all teachers are paid an adequate salary. Private schools in Pakistan generally do a better job, as their teachers are offered better pay along with the necessary training for teaching. But one of the biggest drawbacks of these private schools is that, while they provide excellent services, their fees are not affordable for everyone.

Some of the educational systems presently operating in Pakistan produce no synergy; instead, they create conflict and division among people. In Pakistan there are English-medium schools, Urdu-medium schools and madrasas.

Students coming out of English-medium institutions are not much aware of Islamic teachings, and students coming out of Urdu-medium schools do not get excellent jobs. A better solution is to abolish the hierarchy of schooling systems soon. There is also a great need to improve and update the curriculum and pedagogy.

Maximum attention should be given to mathematics and language so that students can better develop their skills in creative writing. Educational trips should also be arranged for students to help improve their knowledge of history.

We hope that this article has given all readers some idea of the examination system in Pakistan.

An English Essay on Our Examination system for B.A. and F.A students

Our Examination System

I am a published author of dozens of books in Pakistan. I have been editor of The Guide at the National University of Modern Languages, Islamabad (NUML). I hold an MPhil in Applied Linguistics from The University of Lahore. As an M.Ed., I spend most of my time training teachers.

Essay on Examination

Examination is a test of a person’s capacity, knowledge, and ability. It shows what standard of learning a person has acquired during a specific period of time in a specific syllabus. It is among the most hated and most shunned things for some students, who never take to it with pleasure unless they are drawn by the charm of acquiring a degree. Otherwise, they compare it to a nightmare.

Yet examinations are not totally devoid of good. There is a saying about it.

Trials are a veritable curse but they have their use.

Education System and Exam

The system of education is mostly examination-ridden, aiming at the test of achievement and success. The examination is the center of studies and hard work. It is a motivating force to work.

Its importance and efficacy have been called into question. The main objection is that examinations are not a real test of knowledge and understanding; they are a test of ignorance or cramming. Still, we can say that examinations are a necessary evil which cannot be avoided.

Uses of Examination

Difference between genius and dunce.

Examinations have many uses. They help us find the most efficient individual among many. We can distinguish between the scholar and the dullard, the genius and the dunce. In this way, they help us discriminate between the genuine gold and the sparkling brass.

Compel to work hard

Secondly, examinations compel us to work hard. Careless students become serious as the examinations approach. They buy books they had no intention of buying and gird up their loins.

It is a fact that many students read for the sake of examinations. Thus, examinations are a very effective way of goading students to read.

Fitness for promotion to a higher grade/class

Thirdly, examinations are a proof and guarantee of a person’s efficiency. They provide proof of the student’s fitness for promotion to a higher grade or class. An employer can safely entrust a job to a degree holder. Without a degree, no one will hire a person’s services. Factories, industries, and mills cannot allow a person to perform a technical task without a specific degree or course.

Way to attain degrees / diplomas

Similarly, we do not ask everyone to prescribe medicine for us. Only the person holding a degree enjoys the right to operate upon our body. Hence, if we abolish examinations, we shall have to abolish degrees or diplomas.

Abuses of examinations

Examinations have certain abuses as well. Many students consider them a curse and a game of chance. Students are never sure of their success; there are always doubts in their minds. Success does not depend solely upon preparation. Even a student who has studied selectively may pass, and a student with thorough preparation may fail.

Uncertainty of success

Some students keep studying the whole session but fail. On the other hand, many others who buy help books and cheap notes near the examinations and cram a few questions, pass. Such examinations are a curse for the shining students.

Test of memory

The examinations are a test of nerves. All examinations have a limit of time and place. A student is tested at a bad place and in a bad manner. The question arises how a student’s hard work and worth for a semester or full one year is judged in a short time. They are never a foolproof test of one’s ability. They are the test of one’s memory and writing/typing speed.

Use of unfair means

Some students try to use unfair means to pass the examinations. The innocent, hardworking, and intelligent students remain in the background.

Final words

But in spite of all this, we cannot say that there should be no examinations. There must be some proper way of judging the real worth of the students. So proper changes are required to avoid the abuses and increase the usefulness of the examinations. The assessment criteria of the examinations must be improved in such a way that all the students can show their abilities and can pass them without any fear.

The Examination System

by Rui Magone

LAST REVIEWED: 12 April 2019. LAST MODIFIED: 28 April 2014. DOI: 10.1093/obo/9780199920082-0078

Introduction

The examination system, also known as “civil service examinations” or “imperial examinations” (and, in Chinese, as keju 科舉, keju zhidu 科舉制度, gongju 貢舉, xuanju 選舉 or zhiju 制舉), was the imperial Chinese bureaucracy’s central institution for recruiting its officials. Following both real and idealized models from previous times, the system was established at the beginning of the 7th century CE, evolving over several dynasties into a complex institution that prevailed for 1,300 years before its abolition in 1905. One of the system’s most salient features, especially in the late imperial period (1400–1900), was its meritocratic structure (at least in principle, if not necessarily in practice): almost anyone from among the empire’s male population could sit for the examinations. Moreover, candidates were selected based on their performance rather than their pedigree. In order to be accessible to candidates anywhere in the empire, the system’s infrastructure spanned the entire territory. In a long sequence of triennial qualifying examinations at the local, provincial, metropolitan, and palace levels, candidates were mainly required to write rhetorically complicated essays elucidating passages from the Confucian canon. Most candidates failed at each level, and only a couple of hundred out of a million or often more examinees attained final examination success at the metropolitan and palace levels. Due to its accessibility and ubiquity, the examination system had a decisive impact on the intellectual and social landscapes of imperial China. This impact was reinforced by the rule that candidates were allowed to retake examinations as often as they needed to in order to reach the next level. It was therefore not uncommon for individuals in imperial China to spend the great part of their lives, occasionally even until their last breath, sitting for the competitions.
Indeed the extant sources reveal, by their sheer quantity alone, that large parts of the population, not only aspiring candidates, were in fact obsessed with the civil service examinations in the same way that modern societies are fascinated by sports leagues. To a great extent, it was this obsession, along with the system’s centripetal force constantly pulling the population in the different regions toward the political center in the capital, which may have held the large territory of imperial China together, providing it with both coherence and cohesion. Modern Historiography has tended to have a negative view of the examination system, singling it out, and specifically its predominantly literary curriculum, as the major cause for traditional Chinese society’s failure to develop into a modern nation with a strong scientific and technological tradition of its own. In the late 20th and early 21st century, this paradigm has become gradually more nuanced as historians have begun to develop new ways of approaching the extant sources, in particular the large number of examination essays and aids.

Introductory Works

This section addresses readers who have little or no knowledge of the examination system and need both readable and reliable introductions to the subject. These works tend to highlight and describe extensively the Qing civil examinations during the 19th century, thus often creating among readers the impression that the system worked more or less the same in previous periods. While this was clearly not so, it is undeniable that no period in the long history of the civil examinations happens to be as well documented as the 19th century. Readers who desire to obtain a historically more nuanced sense of the system are referred to the sections General Overviews and Overviews by Period. Another problem with introductory works concerns the ideal balance between information and narration. Miyazaki 1981 and especially Jackson and Hugus 1999 are focused on telling a good story rather than providing copious evidence in dense footnotes. By contrast, Wilkinson 2012 and Zi 1894 are overtly technical, requiring a slow reading pace. The best way to strike a balance is to combine both approaches by, ideally, pairing Jackson and Hugus 1999 and Wilkinson 2012. A problem that concerns Wang 1988, Qi 2006, and Li 2010, all introductory works written by Chinese scholars in Chinese, is that they often quote passages from original Primary Sources in classical Chinese without providing a modern Chinese translation. One way to access these passages linguistically is to work directly with the literature cited under Terminological Issues. Finally, even though often neglected, the examination system also included a military branch, of which Zi 1896 provides the most readable account. Compared to their civil counterparts, the military competitions were of minimal significance, but they often served as a platform to obliquely move up the civil examination ladder.

Jackson, Beverley, and David Hugus. Ladder to the Clouds: Intrigue and Tradition in Chinese Rank. Berkeley, CA: Ten Speed, 1999.

Follows the trajectory of a late Qing examination candidate from his birth to his official position. Even though often leaning toward the fictitious, it is definitely a good read and one of the best illustrated books about the late imperial examination system and officialdom.

Li Bing 李兵. Qiannian keju (千年科举). Changsha, China: Yuelu shushe, 2010.

Written in a rather colloquial and therefore accessible style, this well-illustrated book by a renowned expert of the examination system gives answers to questions most frequently asked about this topic, such as whether women were allowed to sit for the examinations. Has a good and sizable list of further readings.

Miyazaki, Ichisada. China’s Examination Hell: The Civil Service Examinations of Imperial China. Translated by Conrad Schirokauer. New Haven, CT: Yale University Press, 1981.

Originally published in 1963 in a longer and more academic version, this is a popular work by one of the most prominent Japanese scholars of the examination system. Packed with vivid anecdotes, this brief and captivating text describes all examination tiers. It is focused on the circumstances of the late Qing period, albeit not always explicitly.

Qi Rushan 齐如山. Zhongguo de keming (中国的科名). Shenyang, China: Liaoning Jiaoyu chubanshe, 2006.

Originally published in Taipei in 1956, this is a very accessible introduction arranged according to key terms used at the examinations.

Wang Daocheng 王道成. Keju shihua (科举史话). Beijing: Zhonghua shuju, 1988.

This is a short, easy to read, yet very informative introduction to the topic by a leading expert. While mainly focused on describing the Qing period, it also devotes a chapter to the system’s history. Has a very valuable appendix containing samples of all Qing examination genres. There are several books with an identical title, so make sure to use the one authored by Wang Daocheng.

Wilkinson, Endymion. Chinese History: A New Manual. Cambridge, MA: Harvard University Asia Center, 2012.

Chapter 22 “Education and Examinations” (pp. 292–304) of this monumental work contains a systematic introduction to the structure and curriculum of the late imperial examination system. Has also a section on primary and secondary sources. There are several editions of this manual; the 2012 version is the one you should use.

Zi, Étienne. Pratique des examens littéraires en Chine. Shanghai: Imprimerie de la Mission Catholique, 1894.

This is the most thorough and reliable description of the late Qing examination system. Has a copious amount of high-quality illustrations, which have been recycled in many other publications. Even though this book is now available online, try to use the original edition if you want to consult or reproduce the illustrative material, in particular the large-scale map of the Jiangnan examination compound. Also available in a 1971 reprint (Taipei: Chengwen, 1971).

Zi, Étienne. Pratique des examens militaires en Chine. Shanghai: Imprimerie de la Mission Catholique, 1896.

This is the best account of the late Qing military examination system available in any language. Describes all tiers and provides samples of examination topics. Richly illustrated, it also includes images of the weaponry used for testing the military candidates. Like the previous text, available in a 1971 reprint (Taipei: Chengwen, 1971).

Essay on “Examination System” Complete Essay for Class 10, Class 12 and Graduation and other classes.

Examination System

Examinations are a necessary evil. It is quite understandable that whenever we put in hard work to make a venture successful, we wait for some time to see or guess the results that might have been achieved or might possibly be achieved. It is in this context that examinations become unavoidable, though the methods and yardsticks employed may differ, even widely.

A student studies the whole year and then needs to be examined. It is in the interest of students themselves to know where they stand and how far their efforts have borne fruit.

However, the examination system as we have it today has become a farce in essence, for many reasons. The most distressing of these is the menace of copying. Students who may be dullards but can manage to indulge in large-scale copying get high marks, whereas the really meritorious students who have worked hard get low marks.

Even otherwise, the prevalent examination system encourages cramming. Those who have a good memory or can indulge in cramming steal a march over others who cannot. It is also extremely painful to all lovers of transparency that sometimes even the question papers are sold in the market a day or so before an examination.

Some efforts have been made to reform the examination system, such as the introduction of a grading system, the setting of a number of different question papers, objective questions, etc., but much still remains to be desired and done.

An automated essay scoring systems: a systematic literature review

Dadi Ramesh

1 School of Computer Science and Artificial Intelligence, SR University, Warangal, TS India

2 Research Scholar, JNTU, Hyderabad, India

Suresh Kumar Sanampudi

3 Department of Information Technology, JNTUH College of Engineering, Nachupally, Kondagattu, Jagtial, TS India

Associated Data

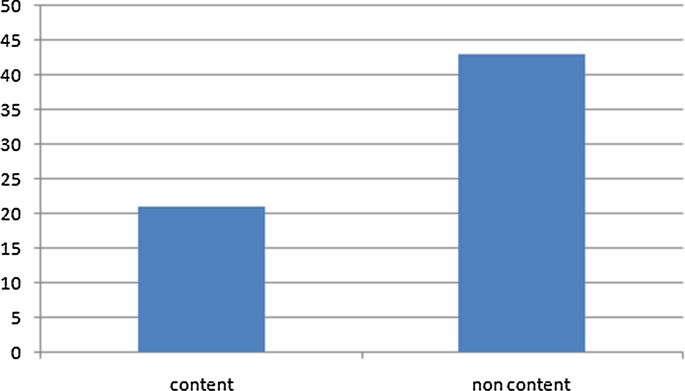

Assessment plays a significant role in the education system in judging student performance. At present, evaluation is done through human assessment. As the student-to-teacher ratio gradually increases, manual evaluation becomes complicated. Its drawbacks include being time-consuming and lacking reliability, among others. In this connection, online examination systems evolved as an alternative to pen-and-paper methods. Present computer-based evaluation systems work only for multiple-choice questions; there is no proper evaluation system for grading essays and short answers. Many researchers have worked on automated essay grading and short answer scoring over the last few decades, but assessing an essay by considering all parameters, such as the relevance of the content to the prompt, development of ideas, cohesion, and coherence, remains a big challenge. A few researchers focused on content-based evaluation, while many addressed style-based assessment. This paper provides a systematic literature review of automated essay scoring systems. We studied the Artificial Intelligence and Machine Learning techniques used for automatic essay scoring and analyzed the limitations of the current studies and research trends. We observed that essay evaluation is not done based on the relevance of the content and coherence.

Supplementary Information

The online version contains supplementary material available at 10.1007/s10462-021-10068-2.

Introduction

Due to the COVID-19 outbreak, an online educational system has become inevitable. In the present scenario, almost all educational institutions, from schools to colleges, have adopted online education. Assessment plays a significant role in measuring the learning ability of the student. Automated evaluation is mostly available for multiple-choice questions, but assessing short answers and essays remains a challenge. The education system is shifting to online mode, with computer-based exams and automatic evaluation. Automatic evaluation is a crucial application in the education domain that uses natural language processing (NLP) and Machine Learning techniques. Evaluating essays is impossible with simple programming and simple techniques such as pattern matching and language processing. The problem is that, for a single question, students give many responses with different explanations, so all the answers must be evaluated with respect to the question.

Automated essay scoring (AES) is a computer-based assessment system that automatically scores or grades student responses by considering appropriate features. AES research started in 1966 with the Project Essay Grader (PEG) by Ajay et al. (1973). PEG evaluates writing characteristics such as grammar, diction, and construction to grade the essay. A modified version of PEG by Shermis et al. (2001) was later released, which focuses on grammar checking and reports a correlation between human evaluators and the system. Foltz et al. (1999) introduced the Intelligent Essay Assessor (IEA), which evaluates content using latent semantic analysis to produce an overall score. Powers et al. (2002) proposed E-rater; other systems include Intellimetric by Rudner et al. (2006) and the Bayesian Essay Test Scoring System (BETSY) by Rudner and Liang (2002). These systems use natural language processing (NLP) techniques that focus on style and content to obtain the score of an essay. The vast majority of essay scoring systems in the 1990s followed traditional approaches such as pattern matching and statistical methods. Over the last decade, essay grading systems have used regression-based and natural language processing techniques. AES systems such as Dong et al. (2017) and others developed from 2014 onward use deep learning techniques that induce syntactic and semantic features, giving better results than earlier systems.
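The agreement between human evaluators and a scoring system mentioned above is commonly summarized with a correlation coefficient. The following is a minimal sketch, assuming the Pearson correlation is the measure of interest; the function name `pearson` and both score lists are hypothetical illustrations, not data or code from any of the cited systems.

```python
import math


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation between two equally long lists of scores."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Sum of products of deviations (unnormalized covariance).
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    # Sums of squared deviations (unnormalized variances).
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant scores")
    return cov / math.sqrt(var_x * var_y)


# Hypothetical scores for five essays (invented for illustration only).
human_scores = [2, 3, 4, 4, 5]
system_scores = [2, 3, 3, 4, 5]

print(round(pearson(human_scores, system_scores), 3))
```

A value close to 1 would suggest that the system ranks essays much as the human rater does; it says nothing about whether either rater attends to content relevance or coherence.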

Ohio, Utah, and most other US states use AES systems in school education, such as the Utah Compose tool and the Ohio standardized test (an updated version of PEG), evaluating millions of student responses every year. These systems work for both formative and summative assessment and give students feedback on their essays. Utah provides a basic essay evaluation rubric with six characteristics of essay writing: development of ideas, organization, style, word choice, sentence fluency, and conventions. Educational Testing Service (ETS) has conducted significant research on AES for more than a decade and has designed an algorithm that evaluates essays in different domains and gives test-takers an opportunity to improve their writing skills. In addition, ETS is currently researching content-based evaluation.

The evaluation of essays and short answers should consider the relevance of the content to the prompt, development of ideas, cohesion, coherence, and domain knowledge. Proper assessment of these parameters determines the accuracy of the evaluation system. However, these parameters do not play an equal role in essay scoring and short answer scoring. Short answer evaluation requires domain knowledge; for example, the meaning of "cell" differs between physics and biology. Essay evaluation requires assessing how ideas are developed with respect to the prompt. The system should also assess the completeness of the responses and provide feedback.

Several studies have reviewed AES systems, from the earliest to the most recent. Blood (2011) provided a literature review of PEG covering 1984–2010. It covers only general aspects of AES systems, such as ethical aspects and system performance. It does not cover implementation, is not a comparative study, and does not discuss the actual challenges of AES systems.

Burrows et al. (2015) reviewed AES systems along six dimensions: dataset, NLP techniques, model building, grading models, evaluation, and effectiveness of the model. They did not cover feature extraction techniques or the challenges in feature extraction, and they covered machine learning models only briefly. The review also lacks a comparative analysis of AES systems in terms of feature extraction and model building, and it does not address the level of relevance, cohesion, and coherence.

Ke et al. (2019) provided a state-of-the-art survey of AES systems but covered very few papers, did not list all challenges, and offered no comparative study of AES models. Hussein et al. (2019) studied two categories of AES systems, with four papers on handcrafted features and four papers on neural network approaches. They discussed a few challenges but did not cover feature extraction techniques or the performance of AES models in detail.

Klebanov et al. (2020) reviewed 50 years of AES systems and listed and categorized all essential features that need to be extracted from essays. However, they did not provide a comparative analysis of the work or discuss the challenges.

This paper aims to provide a systematic literature review (SLR) on automated essay grading systems. An SLR is an evidence-based systematic review that summarizes the existing research. It critically evaluates and integrates the findings of all relevant studies and addresses specific research questions in the research domain. Our research methodology follows the guidelines given by Kitchenham et al. (2009) for conducting the review process, which provide a well-defined approach to identify gaps in current research and to suggest further investigation.

We present our research method, research questions, and the selection process in Sect. 2. The results for the research questions are discussed in Sect. 3, and the synthesis of all the research questions is addressed in Sect. 4. The conclusion and possible future work are discussed in Sect. 5.

Research method

We framed the research questions with PICOC criteria.

Population (P) Student essay and answer evaluation systems.

Intervention (I) Evaluation techniques, datasets, and feature extraction methods.

Comparison (C) Comparison of various approaches and results.

Outcomes (O) Estimate of the accuracy of AES systems.

Context (C) NA.

Research questions

To collect and provide research evidence from the available studies in the domain of automated essay grading, we framed the following research questions (RQ):

RQ1 What are the datasets available for research on automated essay grading?

The answer to this question provides a list of the available datasets, their domains, and how to access them. It also gives the number of essays and the corresponding prompts.

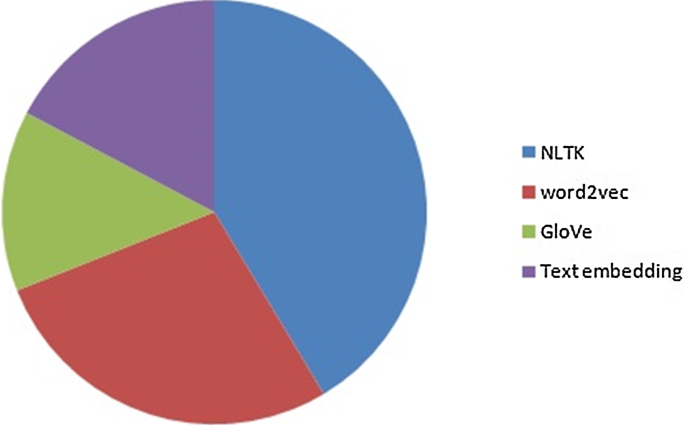

RQ2 What are the features extracted for the assessment of essays?

The answer to this question provides insight into the various features extracted so far and the libraries used to extract them.

RQ3 Which evaluation metrics are available for measuring the accuracy of algorithms?

The answer provides the different evaluation metrics used to measure the accuracy of each machine learning approach and identifies the most commonly used measurement techniques.

RQ4 What are the Machine Learning techniques used for automatic essay grading, and how are they implemented?

The answer provides insight into the various machine learning techniques, such as regression models, classification models, and neural networks, used to implement essay grading systems. It also identifies the different assessment approaches for automated essay grading systems.

RQ5 What are the challenges/limitations in the current research?

The answer to this question identifies the limitations of existing research approaches in areas such as cohesion, coherence, completeness, and feedback.

Search process

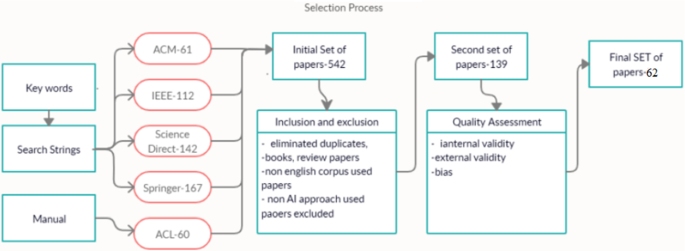

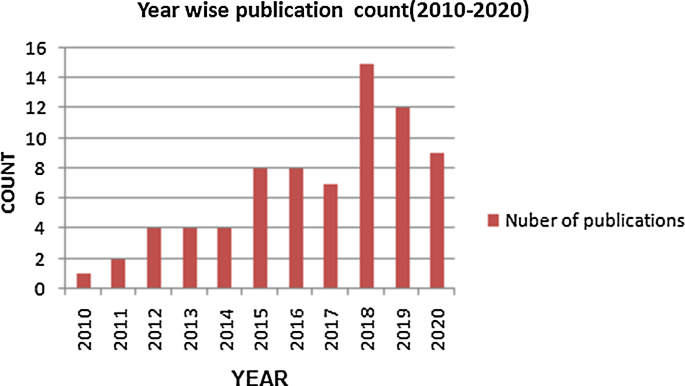

We conducted an automated search for the SLR on well-known computer science repositories: ACL, ACM, IEEE Xplore, Springer, and Science Direct. We considered papers published from 2010 to 2020 because much of the work during these years focused on advanced technologies such as deep learning and natural language processing for automated essay grading systems. In addition, the availability of free datasets such as Kaggle (2012) and the Cambridge Learner Corpus-First Certificate in English exam (CLC-FCE) by Yannakoudakis et al. (2011) encouraged research in this domain.

Search strings: We used search strings such as “Automated essay grading” OR “Automated essay scoring” OR “short answer scoring systems” OR “essay scoring systems” OR “automatic essay evaluation” and searched on metadata.
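The search string above is a plain Boolean OR over quoted phrases. As a minimal sketch, it could be assembled as follows; the helper name `build_query` is hypothetical, and each repository has its own query syntax, so the output is only the generic form quoted in the text.

```python
# Search phrases quoted in the text above.
PHRASES = [
    "Automated essay grading",
    "Automated essay scoring",
    "short answer scoring systems",
    "essay scoring systems",
    "automatic essay evaluation",
]


def build_query(phrases):
    """Join double-quoted phrases with OR into one Boolean search string.

    Hypothetical helper: repository-specific syntax (field tags, wildcards)
    is not modelled here.
    """
    return " OR ".join(f'"{phrase}"' for phrase in phrases)


query = build_query(PHRASES)
print(query)
```

Keeping the phrases in a list makes it easy to rerun the same search on each repository and to record exactly which strings were used.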

Selection criteria

After collecting all relevant documents from the repositories, we prepared selection criteria for the inclusion and exclusion of documents. These criteria help keep the review accurate and specific.

Inclusion criteria 1 We work with datasets comprising essays written in English. We excluded essays written in other languages.

Inclusion criteria 2 We included papers that implement an AI approach and excluded traditional methods from the review.

Inclusion criteria 3 The study is on essay scoring systems, so we included only research carried out on text datasets, not on other datasets such as image or speech.

Exclusion criteria We removed review papers, survey papers, and state-of-the-art papers.

Quality assessment

In addition to the inclusion and exclusion criteria, we assessed each paper against quality assessment questions to ensure its quality. We included documents that clearly explain the approach used, the result analysis, and the validation.

The quality checklist questions are framed based on the guidelines from Kitchenham et al. (2009). Each quality assessment question was graded as either 1 or 0, so the final score of a study ranges from 0 to 3. The cutoff score for inclusion is 2 points: papers that scored 2 or 3 points are included in the final evaluation, and papers that scored below 2 are excluded. We framed the following quality assessment questions for the final study.

Quality Assessment 1: Internal validity.

Quality Assessment 2: External validity.

Quality Assessment 3: Bias.
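The scoring rule described above (three questions graded 0 or 1, with a cutoff of 2) can be sketched as follows. This is an illustration only: the class name, field names, and paper identifiers are hypothetical and are not taken from the review's own tooling.

```python
from dataclasses import dataclass

CUTOFF = 2  # from the text: papers scoring 2 or 3 points are included


@dataclass
class QualityScores:
    """0/1 grades for one paper on the three quality assessment questions."""
    internal_validity: int
    external_validity: int
    bias: int

    def total(self) -> int:
        grades = (self.internal_validity, self.external_validity, self.bias)
        if any(g not in (0, 1) for g in grades):
            raise ValueError("each question must be graded 0 or 1")
        return sum(grades)


def is_included(scores: QualityScores, cutoff: int = CUTOFF) -> bool:
    """A paper is kept when its total score reaches the cutoff."""
    return scores.total() >= cutoff


# Hypothetical papers and grades, for illustration only.
papers = {
    "paper_A": QualityScores(1, 1, 1),
    "paper_B": QualityScores(1, 0, 1),
    "paper_C": QualityScores(0, 0, 1),
}
selected = [name for name, scores in papers.items() if is_included(scores)]
print(selected)
```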

Two reviewers reviewed each paper to select the final list of documents. We used the Quadratic Weighted Kappa score to measure the final agreement between the two reviewers. The average kappa score is 0.6942, which indicates substantial agreement between the reviewers. The results of the evaluation criteria are shown in Table 1. After quality assessment, the final list of papers for review is shown in Table 2. The complete selection process is shown in Fig. 1. The total number of selected papers per year is shown in Fig. 2.
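For reference, the Quadratic Weighted Kappa between two raters can be computed as in the sketch below, using the standard definition κ = 1 − Σ w·O / Σ w·E with weights w = (i − j)² / (N − 1)², where O is the observed rating matrix and E the matrix expected from the two raters' marginal counts. The function name and the sample ratings are illustrative assumptions; they are not the reviewers' actual scores and do not reproduce the reported 0.6942.

```python
def quadratic_weighted_kappa(rater_a, rater_b, min_rating, max_rating):
    """Quadratic Weighted Kappa for two equal-length lists of integer ratings."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n_cat = max_rating - min_rating + 1
    if n_cat < 2:
        raise ValueError("at least two rating categories are required")
    n_items = len(rater_a)

    # Observed matrix: observed[i][j] counts items rated i by A and j by B.
    observed = [[0] * n_cat for _ in range(n_cat)]
    for a, b in zip(rater_a, rater_b):
        observed[a - min_rating][b - min_rating] += 1

    # Marginal histograms of each rater.
    hist_a = [sum(row) for row in observed]
    hist_b = [sum(observed[i][j] for i in range(n_cat)) for j in range(n_cat)]

    numerator = 0.0
    denominator = 0.0
    for i in range(n_cat):
        for j in range(n_cat):
            weight = (i - j) ** 2 / (n_cat - 1) ** 2
            expected = hist_a[i] * hist_b[j] / n_items
            numerator += weight * observed[i][j]
            denominator += weight * expected

    if denominator == 0.0:
        # Both raters used one identical category for every item.
        return 1.0
    return 1.0 - numerator / denominator


# Hypothetical quality scores (0 to 3) from two reviewers for eight papers.
reviewer_1 = [3, 2, 2, 1, 3, 0, 2, 3]
reviewer_2 = [3, 2, 1, 1, 3, 1, 2, 2]
kappa = quadratic_weighted_kappa(reviewer_1, reviewer_2, 0, 3)
print(round(kappa, 4))
```

Because the weights grow with the squared distance between ratings, a disagreement of two points is penalized four times as heavily as a disagreement of one point.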

Table 1 Quality assessment analysis

Table 2 Final list of papers

Fig. 1 Selection process

Fig. 2 Year-wise publications

What are the datasets available for research on automated essay grading?

Working on a problem in the machine learning and deep learning domain requires a considerable amount of data to train the models. To answer this question, we list all the datasets used for training and testing automated essay grading systems. Yannakoudakis et al. (2011) developed the Cambridge Learner Corpus-First Certificate in English exam (CLC-FCE) corpus, which contains 1244 essays and ten prompts. This corpus evaluates whether a student can write relevant English sentences without grammatical and spelling mistakes. This type of corpus helps to test models built for GRE- and TOEFL-type exams. It gives scores between 1 and 40.

Bailey and Meurers (2008) created a dataset (CREE reading comprehension) for language learners and automated short answer scoring systems. The corpus consists of 566 responses from intermediate students. Mohler and Mihalcea (2009) created a dataset for the computer science domain that consists of 630 responses to data structure assignment questions. The scores range from 0 to 5 and are given by two human raters.

Dzikovska et al. (2012) created the Student Response Analysis (SRA) corpus. It consists of two subsets. The BEETLE corpus consists of 56 questions and approximately 3000 responses from students in the electrical and electronics domain. The SCIENTSBANK (SemEval-2013) corpus (Dzikovska et al. 2013a; b) consists of 10,000 responses on 197 prompts in various science domains. The student responses are labeled as "correct," "partially correct incomplete," "contradictory," "irrelevant," or "non-domain."

The Kaggle (2012) competition released three types of corpora of essays and short answers for the Automated Student Assessment Prize (ASAP1) (“https://www.kaggle.com/c/asap-sas/”). It has nearly 17,450 essays, with up to 3000 essays for each prompt. It has eight prompts that test US students in grades 7 to 10. Scores range between [0–3] and [0–60], depending on the prompt. The limitations of these corpora are: (1) the score range differs from prompt to prompt; (2) it uses statistical features such as named entity extraction and lexical features of words to evaluate essays. ASAP++ is another dataset from Kaggle. It has six prompts, each with more than 1000 responses, for a total of 10,696 responses from 8th-grade students. Another corpus contains ten prompts from the science and English domains and a total of 17,207 responses. Two human graders evaluated all these responses.
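Because the score range differs across prompts, scores from different prompts are not directly comparable. One common workaround, which is an assumption here and not something the corpus description prescribes, is to min-max normalize each score to [0, 1] using its prompt's range and map predictions back afterwards. The sketch below uses only the two ranges quoted above; the prompt labels are hypothetical.

```python
def normalize_score(score, min_score, max_score):
    """Map a raw score from [min_score, max_score] to [0, 1]."""
    if max_score <= min_score:
        raise ValueError("max_score must be greater than min_score")
    if not min_score <= score <= max_score:
        raise ValueError("score lies outside the prompt's range")
    return (score - min_score) / (max_score - min_score)


def denormalize_score(value, min_score, max_score):
    """Map a value in [0, 1] back to the nearest integer raw score."""
    return round(value * (max_score - min_score) + min_score)


# Hypothetical prompt labels; the ranges are the two quoted in the text.
score_ranges = {"prompt_small": (0, 3), "prompt_large": (0, 60)}

low, high = score_ranges["prompt_large"]
normalized = normalize_score(45, low, high)
print(normalized)                                # 0.75
print(denormalize_score(normalized, low, high))  # 45
```

After normalization, a 45 on the [0–60] prompt and a 2 on the [0–3] prompt can be compared on the same scale, which also allows a single model to be trained across prompts.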

Correnti et al. (2013) created the Response-to-Text Assessment (RTA) dataset, used to check student writing skills along dimensions such as style, mechanics, and organization. Students in grades 4–8 provide the responses to the RTA. Basu et al. (2013) created a power grading dataset with 700 responses for ten different prompts from US immigration exams. It consists entirely of short answers for assessment.

The TOEFL11 corpus (Blanchard et al. 2013) contains 1100 essays evenly distributed over eight prompts. It is used to test the English language skills of candidates taking the TOEFL exam. It scores the language proficiency of a candidate as low, medium, or high.

The International Corpus of Learner English (ICLE) (Granger et al. 2009) is a corpus of 3663 essays covering different dimensions. It has 12 prompts with 1003 essays that test the organizational skill of essay writing, and 13 prompts, each with 830 essays, that examine thesis clarity and prompt adherence.