
Collection of must read papers for Data Science, or Machine Learning / Deep Learning Engineer

hurshd0/must-read-papers-for-ml

Must Read Papers for Data Science, ML, and DL

Curated collection of Data Science, Machine Learning and Deep Learning papers, reviews, and articles that are on the must-read list.

NOTE: 🚧 This list is being updated. Let me know which additional papers, articles, or blogs to add, and I will add them here.

👉 ⭐ this repo

Contributing

- 👉 🔃 Please feel free to submit a pull request if links are broken or if I am missing any important papers, blogs, or articles.

👇 READ THIS 👇

- 👉 Reading papers with heavy math is hard. It takes time and effort to understand them, and most of it comes down to dedication and the motivation not to quit. Don't be discouraged: read once, read twice, read thrice... until it clicks and blows you away.

🥇 - Read it first

🥈 - Read it second

🥉 - Read it third

Data Science

📊 Pre-processing & EDA

🥇 📄 Data preprocessing - Tidy data - by Hadley Wickham

📓 General DS

🥇 📄 Statistical Modeling: The Two Cultures - by Leo Breiman

🥈 📄 A study in Rashomon curves and volumes: A new perspective on generalization and model simplicity in machine learning

- 📹 KDD 2019 Cynthia Rudin's Keynote

🥇 📄 Frequentism and Bayesianism: A Python-driven Primer by Jake VanderPlas

Machine Learning

🎯 General ML

🥇 📄 Model Evaluation, Model Selection, and Algorithm Selection in Machine Learning - by Sebastian Raschka

🥇 📄 A Brief Introduction into Machine Learning - by Gunnar Rätsch

🥉 📄 An Introduction to the Conjugate Gradient Method Without the Agonizing Pain - by Jonathan Richard Shewchuk

🥉 📄 On Model Stability as a Function of Random Seed

🔍 Outlier/Anomaly detection

🥇 📰 Outlier Detection : A Survey

🥈 📄 XGBoost: A Scalable Tree Boosting System

🥈 📄 LightGBM: A Highly Efficient Gradient Boosting Decision Tree

🥈 📄 AdaBoost and the Super Bowl of Classifiers - A Tutorial Introduction to Adaptive Boosting

🥉 📄 Greedy Function Approximation: A Gradient Boosting Machine

📖 Unraveling Blackbox ML

🥉 📄 Peeking Inside the Black Box: Visualizing Statistical Learning with Plots of Individual Conditional Expectation

🥉 📄 Data Shapley: Equitable Valuation of Data for Machine Learning

✂️ Dimensionality Reduction

🥇 📄 A Tutorial on Principal Component Analysis

🥈 📄 How to Use t-SNE Effectively

🥉 📄 Visualizing Data using t-SNE

📈 Optimization

🥇 📄 A Tutorial on Bayesian Optimization

🥈 📄 Taking the Human Out of the Loop: A review of Bayesian Optimization

Famous Blogs

🌐 Sebastian Raschka

🌐 Chip Huyen

🎱 🔮 Recommenders

🥇 📄 A Survey of Collaborative Filtering Techniques

🥇 📄 Collaborative Filtering Recommender Systems

🥇 📄 Deep Learning Based Recommender System: A Survey and New Perspectives

🥇 📄 🤔 ⭐ Explainable Recommendation: A Survey and New Perspectives ⭐

Case Studies

🥈 📄 The Netflix Recommender System: Algorithms, Business Value, and Innovation

- Netflix Recommendations: Beyond the 5 stars Part 1

- Netflix Recommendations: Beyond the 5 stars Part 2

🥈 📄 Two Decades of Recommender Systems at Amazon.com

🥈 🌐 How Does Spotify Know You So Well?

👉 More In-Depth study, 📕 Recommender Systems Handbook

Famous Deep Learning Blogs 🤠

🌐 Stanford UFLDL Deep Learning Tutorial

🌐 Distill.pub

🌐 Colah's Blog

🌐 Andrej Karpathy

🌐 Zack Lipton

🌐 Sebastian Ruder

🌐 Jay Alammar

📚 Neural Networks and Deep Learning

Neural Networks

⭐ 🥇 📰 The Matrix Calculus You Need For Deep Learning - Terence Parr and Jeremy Howard ⭐

🥇 📰 Deep learning - Yann LeCun, Yoshua Bengio & Geoffrey Hinton

🥇 📄 Generalization in Deep Learning

🥇 📄 Topology of Learning in Artificial Neural Networks

🥇 📄 Dropout: A Simple Way to Prevent Neural Networks from Overfitting

🥈 📄 Polynomial Regression As an Alternative to Neural Nets

🥈 🌐 The Neural Network Zoo

🥈 🌐 Image Completion with Deep Learning in TensorFlow

🥈 📄 Batch Normalization: Accelerating Deep Network Training by Reducing Internal Covariate Shift

🥉 📄 A systematic study of the class imbalance problem in convolutional neural networks

🥉 📄 All Neural Networks are Created Equal

🥉 📄 Adam: A Method for Stochastic Optimization

🥉 📄 AutoML: A Survey of the State-of-the-Art

🥇 📄 Visualizing and Understanding Convolutional Networks - by Matthew D. Zeiler and Rob Fergus

🥈 📄 Deep Residual Learning for Image Recognition

🥈 📄 AlexNet-ImageNet Classification with Deep Convolutional Neural Networks

🥈 📄 VGG Net - Very Deep Convolutional Networks for Large-Scale Image Recognition

🥉 📄 A Mathematical Theory of Deep Convolutional Neural Networks for Feature Extraction

🥉 📄 Large-scale Video Classification with Convolutional Neural Networks

🥉 📄 Bottom-Up and Top-Down Attention for Image Captioning and Visual Question Answering

⚫ CapsNet 🔱

🥇 📄 Dynamic Routing Between Capsules

Blog explaining "What are CapsNet, or Capsule Networks?"

Capsule Networks Tutorial by Aurélien Géron

🏞️ 💬 Image Captioning

🥇 📄 Show and Tell: A Neural Image Caption Generator

🥈 📄 Neural Machine Translation by Jointly Learning to Align and Translate

🥈 📄 StyleNet: Generating Attractive Visual Captions with Styles

🥈 📄 Show, Attend and Tell: Neural Image Caption Generation with Visual Attention

🥈 📄 Where to put the Image in an Image Caption Generator

🥈 📄 Dank Learning: Generating Memes Using Deep Neural Networks

🚗 🚶‍♂️ Object Detection 🦅 🏈

🥈 📄 ResNet-Deep Residual Learning for Image Recognition

🥈 📄 YOLO-You Only Look Once: Unified, Real-Time Object Detection

🥈 📄 Microsoft COCO: Common Objects in Context

- COCO dataset

🥈 📄 (R-CNN) Rich feature hierarchies for accurate object detection and semantic segmentation

🥈 📄 Fast R-CNN

- 💻 Papers with Code

🥈 📄 Faster R-CNN

🥈 📄 Mask R-CNN

🚗 🚶‍♂️ 👫 Pose Detection 🏃 💃

🥈 📄 DensePose: Dense Human Pose Estimation In The Wild

🥈 📄 Parsing R-CNN for Instance-Level Human Analysis

🔡 🔣 Deep NLP 💱 🔢

🥇 📄 A Primer on Neural Network Models for Natural Language Processing

🥇 📄 Empirical Evaluation of Gated Recurrent Neural Networks on Sequence Modeling

🥇 📄 On the Properties of Neural Machine Translation: Encoder–Decoder Approaches

🥇 📄 LSTM: A Search Space Odyssey - by Klaus Greff et al.

🥇 📄 A Critical Review of Recurrent Neural Networks for Sequence Learning

🥇 📄 Visualizing and Understanding Recurrent Networks

⭐ 🥇 📄 Attention Is All You Need ⭐

🥇 📄 An Empirical Exploration of Recurrent Network Architectures

🥇 📄 OpenAI (GPT-2) Language Models are Unsupervised Multitask Learners

🥇 📄 BERT: Pre-training of Deep Bidirectional Transformers for Language Understanding

- Google BERT Announcement

🥉 📄 Parameter-Efficient Transfer Learning for NLP

🥉 📄 A Sensitivity Analysis of (and Practitioners’ Guide to) Convolutional Neural Networks for Sentence Classification

🥉 📄 A Survey on Recent Advances in Named Entity Recognition from Deep Learning models

🥉 📄 Convolutional Neural Networks for Sentence Classification

🥉 📄 Pervasive Attention: 2D Convolutional Neural Networks for Sequence-to-Sequence Prediction

🥉 📄 Single Headed Attention RNN: Stop Thinking With Your Head

🥇 📄 Generative Adversarial Nets - Goodfellow et al.

📚 GAN Rabbit Hole -> GAN Papers

⭕➖⭕ GNNs (Graph Neural Networks)

🥉 📄 A Comprehensive Survey on Graph Neural Networks

👨‍⚕️ 💉 Medical AI 💊 🔬

Machine learning classifiers and fMRI: a tutorial overview - by Pereira et al.

👇 Cool Stuff 👇

🔊 📄 SoundNet: Learning Sound Representations from Unlabeled Video

🎨 📄 CAN: Creative Adversarial Networks, Generating “Art” by Learning About Styles and Deviating from Style Norms

🎨 📄 Deep Painterly Harmonization

- Github Code

🕺 💃 📄 Everybody Dance Now

- Everybody Dance Now - Youtube Video

⚽ Soccer on Your Tabletop

👱‍♀️ 💇‍♀️ 📄 SC-FEGAN: Face Editing Generative Adversarial Network with User's Sketch and Color

📸 📄 Handheld Mobile Photography in Very Low Light

🏯 🕌 📄 Learning Deep Features for Scene Recognition using Places Database

🚅 🚄 📄 High-Speed Tracking with Kernelized Correlation Filters

🎬 📄 Recent progress in semantic image segmentation

Rabbit hole -> 🔊 🌐 Analytics Vidhya Top 10 Audio Processing Tasks and their papers

👱‍♂️ -> 👴 📄 📄 Face Aging With Conditional GANs

👱‍♂️ -> 👴 📄 📄 Dual Conditional GANs for Face Aging and Rejuvenation

⚖️ 📄 BAGAN: Data Augmentation with Balancing GAN

labml.ai Annotated PyTorch Paper Implementations

📰 Capstone Projects 📰

8 Awesome Data Science Capstone Projects

10 Powerful Applications of Linear Algebra in Data Science

Top 5 Interesting Applications of GANs

Deep Learning Applications a beginner can build in minutes

2019-10-28 Started must-read-papers-for-ml repo

2019-10-29 Added analytics vidhya use case studies article links

2019-10-30 Added Outlier/Anomaly detection paper, separated Boosting, CNN, Object Detection, NLP papers, and added Image captioning papers

2019-10-31 Added Famous Blogs from Deep and Machine Learning Researchers

2019-11-01 Fixed markdown issues, added contribution guideline

2019-11-20 Added Recommender Surveys, and Papers

2019-12-12 Added R-CNN variants, PoseNets, GNNs

2020-02-23 Added GRU paper


Beginning with machine learning: a comprehensive primer

- Published: 21 July 2021

- Volume 230, pages 2363–2444 (2021)


- Rahul Yedida (1)

- Snehanshu Saha (2)


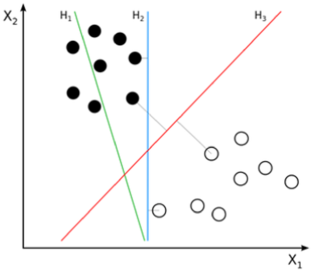

This is a primer on machine learning for beginners. Certainly, there are plenty of excellent books on the subject, providing detailed explanations of many algorithms. The intent of this primer is not to outdo these texts in rigor, but rather to provide an introduction to the subject that is accessible, yet covers all the mathematical details and provides implementations of most algorithms in Python. We feel this provides a well-rounded understanding of each algorithm: only by writing the code, seeing the math applied, and visually inspecting the algorithm’s working will a reader be fully able to connect all the dots. The style of the primer is largely conversational and avoids too much formal jargon. We will certainly introduce all required technical terms, but while explaining an algorithm, we will use simple English and avoid unnecessary formalisms. We hope this proves useful for individuals willing to seriously study the subject.


Data availability statement

This manuscript has associated data in a data repository. [Authors’ comment: ...].


Author information

Authors and affiliations

Department of Computer Science, North Carolina State University, Raleigh, USA

Rahul Yedida

CSIS and APPCAIR, BITS Pilani K K Birla Goa Campus, Sancoale, India

Snehanshu Saha


Corresponding author

Correspondence to Snehanshu Saha.


About this article

Yedida, R., Saha, S. Beginning with machine learning: a comprehensive primer. Eur. Phys. J. Spec. Top. 230, 2363–2444 (2021). https://doi.org/10.1140/epjs/s11734-021-00209-7


Received: 14 November 2020

Accepted: 22 June 2021

Published: 21 July 2021

Issue Date: September 2021

DOI: https://doi.org/10.1140/epjs/s11734-021-00209-7


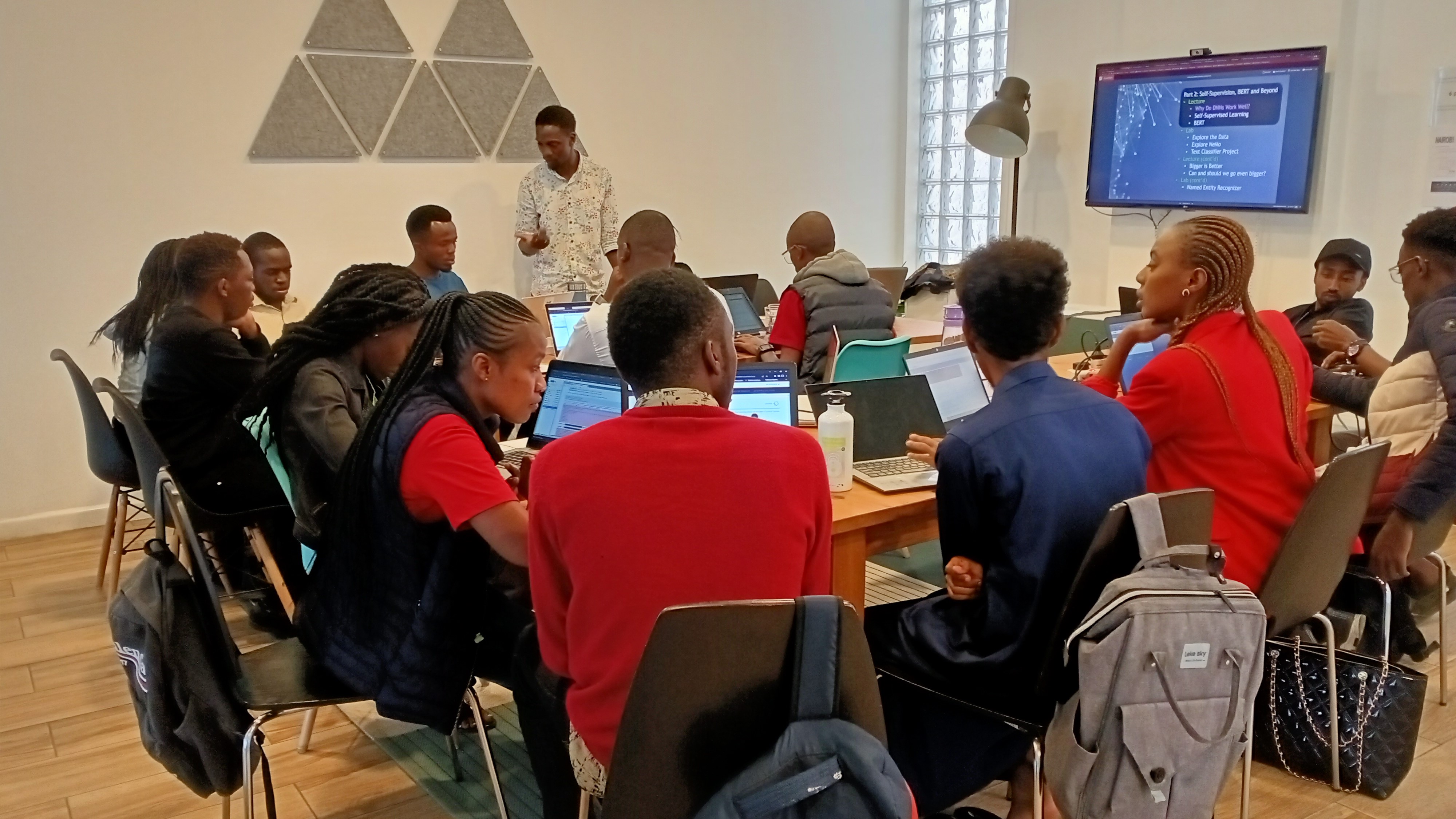

How to Read Research Papers: A Pragmatic Approach for ML Practitioners

Is it necessary for data scientists or machine-learning experts to read research papers?

The short answer is yes. And don’t worry if you lack a formal academic background or have only obtained an undergraduate degree in the field of machine learning.

Reading academic research papers may be intimidating for individuals without an extensive educational background. However, a lack of academic reading experience should not prevent data scientists from taking advantage of a valuable source of information and knowledge for machine learning and AI development.

This article provides a hands-on tutorial for data scientists of any skill level on reading research papers published in academic journals and conference proceedings such as NeurIPS, JMLR, and ICML.

Before diving into how to read a paper itself, the first phases cover selecting a relevant topic and finding relevant research papers.

Step 1: Identify a topic

The domain of machine learning and data science is home to a plethora of subject areas. However, this does not mean that tackling every topic within machine learning is the best option.

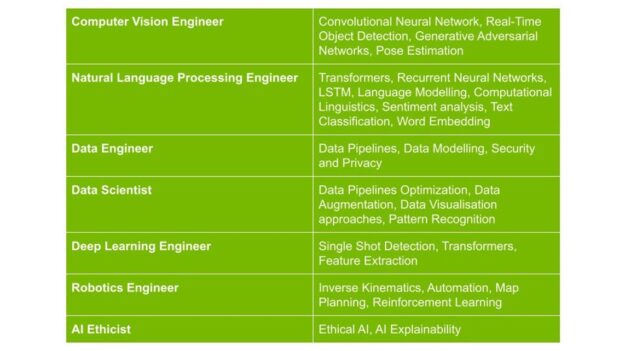

Although breadth is advisable for entry-level practitioners, I suspect that, when it comes to long-term machine learning career prospects, both practitioner and industry interest often shift toward specialization.

Identifying a niche topic to work on may be difficult, but it is worthwhile. A good rule of thumb is to select an ML field in which you either want to obtain a professional position or already have experience.

Deep learning is one of my interests, and I’m a Computer Vision Engineer who uses deep learning models in apps to solve computer vision problems professionally. As a result, I’m interested in topics like pose estimation, action classification, and gesture identification.

Based on roles, the following are examples of ML/DS occupations and related themes to consider.

For this article, I’ll select the topic Pose Estimation to explore and choose associated research papers to study.

Step 2: Finding research papers

One of the best tools for finding machine learning research papers, datasets, code, and other related materials is PapersWithCode.

We use the search engine on the PapersWithCode website to get relevant research papers and content for our chosen topic, “Pose Estimation.” The following image shows you how it’s done.

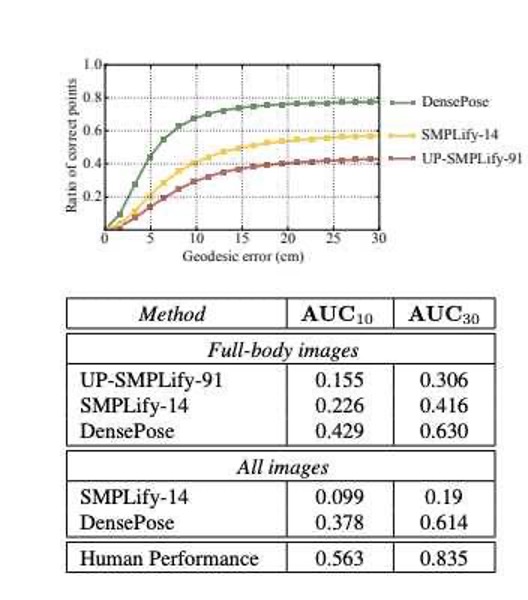

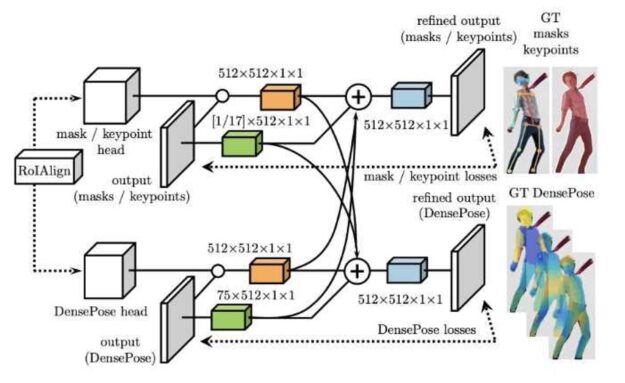

The search results page contains a short explanation of the searched topic, followed by a table of associated datasets, models, papers, and code. Without going into too much detail, the area of interest for this use case is the “Greatest papers with code” section, which contains the papers most relevant to the task or topic. For the purpose of this article, I’ll select DensePose: Dense Human Pose Estimation In The Wild.

Step 3: First pass (gaining context and understanding)

At this point, we’ve selected a research paper to study and are prepared to extract any valuable learnings and findings from its content.

It’s only natural that your first impulse is to start taking notes and reading the document from beginning to end, perhaps resting in between. However, it is more practical to first establish context for the paper’s content. The title, abstract, and conclusion are the three key parts of any research paper for gaining that initial understanding.

The goal of the first pass of your chosen paper is to achieve the following:

- Ensure that the paper is relevant.

- Obtain a sense of the paper’s context by learning about its contents, methods, and findings.

- Recognize the authors’ goals, methodology, and accomplishments.

The title is the first point of information sharing between the authors and the reader. Therefore, research paper titles tend to be direct and composed to leave as little ambiguity as possible.

The title is the most telling aspect of a paper, since it indicates the study’s relevance to your work and gives a brief impression of the paper’s content.

In this situation, the title is “DensePose: Dense Human Pose Estimation in the Wild.” This gives a broad overview of the work and implies that it addresses how to estimate human poses accurately in realistic, unconstrained environments, that is, “in the wild.”

The abstract gives a summarized version of the paper. It’s a short section, usually no more than a few hundred words, that tells you in a nutshell what the paper is about: the researchers’ objectives, methods, and techniques.

When reading an abstract of a machine-learning research paper, you’ll typically come across mentions of datasets, methods, algorithms, and other terms. Keywords relevant to the article’s content provide context. It may be helpful to take notes and keep track of all keywords at this point.

For the paper “DensePose: Dense Human Pose Estimation In The Wild”, I identified the following keywords in the abstract: pose estimation, COCO dataset, CNN, region-based models, real-time.

It’s not uncommon to experience fatigue when reading a paper from top to bottom on your first pass, especially for data scientists and practitioners with no prior advanced academic experience. Although extracting information from the later sections of a paper can seem tedious after a long study session, the conclusion section is usually short. Hence, reading the conclusion in the first pass is recommended.

The conclusion section briefly summarizes the authors’ contributions and accomplishments, along with the work’s limitations and directions for future development.

Before reading the main content of a research paper, read the conclusion to see whether the researchers’ contributions, problem domain, and outcomes match your needs.

This brief first pass gives you a sufficient overview of the paper’s scope and objectives, as well as context for its content. You can then extract more detailed information by going through it again with close attention.

Step 4: Second pass (content familiarization)

Content familiarization builds on the initial steps of the systematic approach to reading research papers presented in this article. This step focuses on the introduction section and the figures within the paper.

As mentioned previously, there is no need to plunge straight into the core of the paper: acclimatizing to its content first makes the examination in later passes easier and more comprehensive.

Introduction

Introductory sections of research papers are written to provide an overview of the objective of the research efforts. This objective mentions and explains problem domains, research scope, prior research efforts, and methodologies.

It’s normal to find parallels to past research in this area that uses similar or distinct methods. Citations of other papers convey the scope and breadth of the problem domain, which broadens the area the reader can explore. For those cited papers, applying the first-pass procedure outlined in Step 3 is probably sufficient at this point.

The introduction also presents the background knowledge required to approach and understand the content of the paper.

Graphs, diagrams, figures

Illustrative materials within a research paper help readers understand the problem definition and the methods presented. Tables are commonly used to report the quantitative performance of novel techniques compared with similar approaches.

Generally, the visual representation of data and performance helps you develop an intuitive understanding of the paper’s context. In the DensePose paper mentioned earlier, illustrations depict the performance of the authors’ approach to pose estimation and give an overall understanding of the steps involved in generating and annotating data samples.

In deep learning, it’s common to find topological illustrations depicting the structure of artificial neural networks. Again, this helps any reader build an intuitive understanding. Through illustrations and figures, readers can interpret the information themselves and gain a fuller perspective without preconceived notions about what the outcomes should be.

Step 5: Third pass (deep reading)

The third pass of the paper is similar to the second, though it covers a greater portion of the text. The most important thing about this pass is to skip any complex mathematics or technical formulations that you find difficult. You can also skip over any words and definitions that you don’t understand or aren’t familiar with. Note these unfamiliar terms, algorithms, or techniques so that you can return to them later.

During this pass, your primary objective is to gain a broad understanding of what’s covered in the paper. Read the paper again from the abstract to the conclusion, taking breaks between sections. It’s also recommended to keep a notepad in which you record all key insights and takeaways, alongside the unfamiliar terms and concepts.
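A notepad like this can also be kept as a small data structure. The sketch below is a hypothetical illustration, not part of the method as described: `PaperNotes`, `flag_term`, `resolve_term`, and `open_terms` are names introduced here. It records keywords and insights, flags unfamiliar terms during the third pass, and lists the terms still waiting for the final pass. The keywords are the ones identified earlier for the DensePose paper; which term gets flagged is an illustrative assumption.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class PaperNotes:
    """Hypothetical notepad for one paper, kept across reading passes."""

    title: str
    keywords: list[str] = field(default_factory=list)
    insights: list[str] = field(default_factory=list)
    # Maps each unfamiliar term to its explanation; None means "not yet resolved".
    unfamiliar: dict[str, str | None] = field(default_factory=dict)

    def add_keyword(self, keyword: str) -> None:
        # Keep keywords unique, in the order they were found.
        if keyword not in self.keywords:
            self.keywords.append(keyword)

    def flag_term(self, term: str) -> None:
        # Third pass: note the term and move on without looking it up.
        self.unfamiliar.setdefault(term, None)

    def resolve_term(self, term: str, explanation: str) -> None:
        # Final pass: record what you learned from external resources.
        self.unfamiliar[term] = explanation

    def open_terms(self) -> list[str]:
        # Terms that still need research in the final pass.
        return [term for term, note in self.unfamiliar.items() if note is None]


notes = PaperNotes("DensePose: Dense Human Pose Estimation In The Wild")
for kw in ["pose estimation", "COCO dataset", "CNN", "region-based models", "real-time"]:
    notes.add_keyword(kw)
notes.flag_term("region-based models")
print(notes.open_terms())  # ['region-based models']
```

In the final pass, calling `resolve_term` for each flagged entry until `open_terms()` returns an empty list is one simple way to tell when the notes are complete.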

The Pomodoro Technique is an effective method of managing time allocated to deep reading or study. Explained simply, the Pomodoro Technique involves the segmentation of the day into blocks of work, followed by short breaks.

What works for me is the 50/15 split, that is, 50 minutes studying and 15 minutes allocated to breaks. I tend to execute this split twice consecutively before taking a more extended break of 30 minutes. If you are unfamiliar with this time management technique, adopt a relatively easy division such as 25/5 and adjust the time split according to your focus and time capacity.
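The 50/15 routine can be laid out as a concrete timetable. The function below is a minimal sketch with hypothetical names, and it assumes one reading of the description: the 30-minute break replaces the short break after every second study block, and the session ends on a study block. Switching to the easier 25/5 split only changes the arguments.

```python
from __future__ import annotations


def pomodoro_schedule(
    work: int, short_break: int, rounds_per_cycle: int, long_break: int, cycles: int
) -> list[tuple[str, int, int]]:
    """Return (activity, start_minute, end_minute) blocks for a study session."""
    blocks = []
    t = 0  # minutes elapsed since the session started
    for cycle in range(cycles):
        for r in range(rounds_per_cycle):
            blocks.append(("study", t, t + work))
            t += work
            # Short break between rounds; the last round of a cycle skips it.
            if r < rounds_per_cycle - 1:
                blocks.append(("break", t, t + short_break))
                t += short_break
        # Long break between cycles, but not after the final one.
        if cycle < cycles - 1:
            blocks.append(("long break", t, t + long_break))
            t += long_break
    return blocks


# 50 min study / 15 min break, twice, then a 30-minute break (assumed reading).
for activity, start, end in pomodoro_schedule(50, 15, 2, 30, cycles=2):
    print(f"{start:>3}-{end:<3} min  {activity}")
```

Under this reading, two cycles span 260 minutes, 200 of which are spent studying.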

Step 6: Fourth pass (final pass)

The final pass typically demands the most of your mental and learning abilities, as it involves working through the unfamiliar terms, concepts, and algorithms noted in the previous pass. This pass focuses on using external material to understand those recorded unfamiliar aspects of the paper.

In-depth study of unfamiliar subjects has no fixed duration; at times, the effort spans days or weeks. The critical factor for a successful final pass is locating the appropriate sources for further exploration.

Unfortunately, no single source on the Internet provides all the information you require. Still, multiple sources, used together and appropriately, can fill knowledge gaps. Below are a few of these resources.

- The Machine Learning Subreddit

- The Deep Learning Subreddit

- PapersWithCode

- Top conferences such as NeurIPS, ICML, and ICLR

- ResearchGate

- Apple Machine Learning Research

The reference section of a research paper lists the techniques and algorithms that the paper draws inspiration from or builds upon, which makes it a useful source during your deep reading sessions.

Step 7: Summary (optional)

In almost a decade of academic and professional undertakings of technology-associated subjects and roles, the most effective method of ensuring any new information learned is retained in my long-term memory through the recapitulation of explored topics. By rewriting new information in my own words, either written or typed, I’m able to reinforce the presented ideas in an understandable and memorable manner.

To take it one step further, you can publish your notes on blogging platforms and social media. Explaining a freshly explored concept to a broad audience, assuming the reader is unfamiliar with the topic, requires understanding it in depth.

Reading research papers can undoubtedly be daunting for novice Data Scientists and ML practitioners; even seasoned practitioners find it difficult to digest a paper fully in a single pass.

The Data Science profession is highly practical and hands-on, yet its practitioners also need an academic mindset, all the more so because Data Science is closely tied to AI, which is still a developing field.

To summarize, here are all of the steps you should follow to read a research paper:

- Identify a topic.

- Find associated research papers.

- Read the title, abstract, and conclusion to gain a rough understanding of the research aims and achievements.

- Familiarize yourself with the content by diving deeper into the introduction and exploring the figures and graphs presented in the paper.

- Use a deep reading session to digest the main content of the paper as you go through it from top to bottom.

- Explore unfamiliar terms, terminology, concepts, and methods using external resources.

- Summarize the essential takeaways, definitions, and algorithms in your own words.

Thanks for reading!


Journal of Machine Learning Research

The Journal of Machine Learning Research (JMLR), established in 2000, provides an international forum for the electronic and paper publication of high-quality scholarly articles in all areas of machine learning. All published papers are freely available online.

- 2024.02.18: Volume 24 completed; Volume 25 began.

- 2023.01.20: Volume 23 completed; Volume 24 began.

- 2022.07.20: New special issue on climate change.

- 2022.02.18: New blog post: Retrospectives from 20 Years of JMLR.

- 2022.01.25: Volume 22 completed; Volume 23 began.

- 2021.12.02: Message from outgoing co-EiC Bernhard Schölkopf.

- 2021.02.10: Volume 21 completed; Volume 22 began.


Latest papers

More PAC-Bayes bounds: From bounded losses, to losses with general tail behaviors, to anytime validity. Borja Rodríguez-Gálvez, Ragnar Thobaben, Mikael Skoglund, 2024. [abs] [pdf] [bib]

Neural Hilbert Ladders: Multi-Layer Neural Networks in Function Space. Zhengdao Chen, 2024. [abs] [pdf] [bib]

QDax: A Library for Quality-Diversity and Population-based Algorithms with Hardware Acceleration. Felix Chalumeau, Bryan Lim, Raphaël Boige, Maxime Allard, Luca Grillotti, Manon Flageat, Valentin Macé, Guillaume Richard, Arthur Flajolet, Thomas Pierrot, Antoine Cully, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Random Forest Weighted Local Fréchet Regression with Random Objects. Rui Qiu, Zhou Yu, Ruoqing Zhu, 2024. [abs] [pdf] [bib] [code]

PhAST: Physics-Aware, Scalable, and Task-Specific GNNs for Accelerated Catalyst Design. Alexandre Duval, Victor Schmidt, Santiago Miret, Yoshua Bengio, Alex Hernández-García, David Rolnick, 2024. [abs] [pdf] [bib] [code]

Unsupervised Anomaly Detection Algorithms on Real-world Data: How Many Do We Need? Roel Bouman, Zaharah Bukhsh, Tom Heskes, 2024. [abs] [pdf] [bib] [code]

Multi-class Probabilistic Bounds for Majority Vote Classifiers with Partially Labeled Data. Vasilii Feofanov, Emilie Devijver, Massih-Reza Amini, 2024. [abs] [pdf] [bib]

Information Processing Equalities and the Information–Risk Bridge. Robert C. Williamson, Zac Cranko, 2024. [abs] [pdf] [bib]

Nonparametric Regression for 3D Point Cloud Learning. Xinyi Li, Shan Yu, Yueying Wang, Guannan Wang, Li Wang, Ming-Jun Lai, 2024. [abs] [pdf] [bib] [code]

AMLB: an AutoML Benchmark. Pieter Gijsbers, Marcos L. P. Bueno, Stefan Coors, Erin LeDell, Sébastien Poirier, Janek Thomas, Bernd Bischl, Joaquin Vanschoren, 2024. [abs] [pdf] [bib] [code]

Materials Discovery using Max K-Armed Bandit. Nobuaki Kikkawa, Hiroshi Ohno, 2024. [abs] [pdf] [bib]

Semi-supervised Inference for Block-wise Missing Data without Imputation. Shanshan Song, Yuanyuan Lin, Yong Zhou, 2024. [abs] [pdf] [bib]

Adaptivity and Non-stationarity: Problem-dependent Dynamic Regret for Online Convex Optimization. Peng Zhao, Yu-Jie Zhang, Lijun Zhang, Zhi-Hua Zhou, 2024. [abs] [pdf] [bib]

Scaling Speech Technology to 1,000+ Languages. Vineel Pratap, Andros Tjandra, Bowen Shi, Paden Tomasello, Arun Babu, Sayani Kundu, Ali Elkahky, Zhaoheng Ni, Apoorv Vyas, Maryam Fazel-Zarandi, Alexei Baevski, Yossi Adi, Xiaohui Zhang, Wei-Ning Hsu, Alexis Conneau, Michael Auli, 2024. [abs] [pdf] [bib] [code]

MAP- and MLE-Based Teaching. Hans Ulrich Simon, Jan Arne Telle, 2024. [abs] [pdf] [bib]

A General Framework for the Analysis of Kernel-based Tests. Tamara Fernández, Nicolás Rivera, 2024. [abs] [pdf] [bib]

Overparametrized Multi-layer Neural Networks: Uniform Concentration of Neural Tangent Kernel and Convergence of Stochastic Gradient Descent. Jiaming Xu, Hanjing Zhu, 2024. [abs] [pdf] [bib]

Sparse Representer Theorems for Learning in Reproducing Kernel Banach Spaces. Rui Wang, Yuesheng Xu, Mingsong Yan, 2024. [abs] [pdf] [bib]

Exploration of the Search Space of Gaussian Graphical Models for Paired Data. Alberto Roverato, Dung Ngoc Nguyen, 2024. [abs] [pdf] [bib]

The good, the bad and the ugly sides of data augmentation: An implicit spectral regularization perspective. Chi-Heng Lin, Chiraag Kaushik, Eva L. Dyer, Vidya Muthukumar, 2024. [abs] [pdf] [bib] [code]

Stochastic Approximation with Decision-Dependent Distributions: Asymptotic Normality and Optimality. Joshua Cutler, Mateo Díaz, Dmitriy Drusvyatskiy, 2024. [abs] [pdf] [bib]

Minimax Rates for High-Dimensional Random Tessellation Forests. Eliza O'Reilly, Ngoc Mai Tran, 2024. [abs] [pdf] [bib]

Nonparametric Estimation of Non-Crossing Quantile Regression Process with Deep ReQU Neural Networks. Guohao Shen, Yuling Jiao, Yuanyuan Lin, Joel L. Horowitz, Jian Huang, 2024. [abs] [pdf] [bib]

Spatial meshing for general Bayesian multivariate models. Michele Peruzzi, David B. Dunson, 2024. [abs] [pdf] [bib] [code]

A Semi-parametric Estimation of Personalized Dose-response Function Using Instrumental Variables. Wei Luo, Yeying Zhu, Xuekui Zhang, Lin Lin, 2024. [abs] [pdf] [bib]

Learning Non-Gaussian Graphical Models via Hessian Scores and Triangular Transport. Ricardo Baptista, Youssef Marzouk, Rebecca Morrison, Olivier Zahm, 2024. [abs] [pdf] [bib] [code]

On the Learnability of Out-of-distribution Detection. Zhen Fang, Yixuan Li, Feng Liu, Bo Han, Jie Lu, 2024. [abs] [pdf] [bib]

Win: Weight-Decay-Integrated Nesterov Acceleration for Faster Network Training. Pan Zhou, Xingyu Xie, Zhouchen Lin, Kim-Chuan Toh, Shuicheng Yan, 2024. [abs] [pdf] [bib] [code]

On the Eigenvalue Decay Rates of a Class of Neural-Network Related Kernel Functions Defined on General Domains. Yicheng Li, Zixiong Yu, Guhan Chen, Qian Lin, 2024. [abs] [pdf] [bib]

Tight Convergence Rate Bounds for Optimization Under Power Law Spectral Conditions. Maksim Velikanov, Dmitry Yarotsky, 2024. [abs] [pdf] [bib]

ptwt - The PyTorch Wavelet Toolbox. Moritz Wolter, Felix Blanke, Jochen Garcke, Charles Tapley Hoyt, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Choosing the Number of Topics in LDA Models – A Monte Carlo Comparison of Selection Criteria. Victor Bystrov, Viktoriia Naboka-Krell, Anna Staszewska-Bystrova, Peter Winker, 2024. [abs] [pdf] [bib] [code]

Functional Directed Acyclic Graphs. Kuang-Yao Lee, Lexin Li, Bing Li, 2024. [abs] [pdf] [bib]

Unlabeled Principal Component Analysis and Matrix Completion. Yunzhen Yao, Liangzu Peng, Manolis C. Tsakiris, 2024. [abs] [pdf] [bib] [code]

Distributed Estimation on Semi-Supervised Generalized Linear Model. Jiyuan Tu, Weidong Liu, Xiaojun Mao, 2024. [abs] [pdf] [bib]

Towards Explainable Evaluation Metrics for Machine Translation. Christoph Leiter, Piyawat Lertvittayakumjorn, Marina Fomicheva, Wei Zhao, Yang Gao, Steffen Eger, 2024. [abs] [pdf] [bib]

Differentially private methods for managing model uncertainty in linear regression. Víctor Peña, Andrés F. Barrientos, 2024. [abs] [pdf] [bib]

Data Summarization via Bilevel Optimization. Zalán Borsos, Mojmír Mutný, Marco Tagliasacchi, Andreas Krause, 2024. [abs] [pdf] [bib]

Pareto Smoothed Importance Sampling. Aki Vehtari, Daniel Simpson, Andrew Gelman, Yuling Yao, Jonah Gabry, 2024. [abs] [pdf] [bib] [code]

Policy Gradient Methods in the Presence of Symmetries and State Abstractions. Prakash Panangaden, Sahand Rezaei-Shoshtari, Rosie Zhao, David Meger, Doina Precup, 2024. [abs] [pdf] [bib] [code]

Scaling Instruction-Finetuned Language Models. Hyung Won Chung, Le Hou, Shayne Longpre, Barret Zoph, Yi Tay, William Fedus, Yunxuan Li, Xuezhi Wang, Mostafa Dehghani, Siddhartha Brahma, Albert Webson, Shixiang Shane Gu, Zhuyun Dai, Mirac Suzgun, Xinyun Chen, Aakanksha Chowdhery, Alex Castro-Ros, Marie Pellat, Kevin Robinson, Dasha Valter, Sharan Narang, Gaurav Mishra, Adams Yu, Vincent Zhao, Yanping Huang, Andrew Dai, Hongkun Yu, Slav Petrov, Ed H. Chi, Jeff Dean, Jacob Devlin, Adam Roberts, Denny Zhou, Quoc V. Le, Jason Wei, 2024. [abs] [pdf] [bib]

Tangential Wasserstein Projections. Florian Gunsilius, Meng Hsuan Hsieh, Myung Jin Lee, 2024. [abs] [pdf] [bib] [code]

Learnability of Linear Port-Hamiltonian Systems. Juan-Pablo Ortega, Daiying Yin, 2024. [abs] [pdf] [bib] [code]

Off-Policy Action Anticipation in Multi-Agent Reinforcement Learning. Ariyan Bighashdel, Daan de Geus, Pavol Jancura, Gijs Dubbelman, 2024. [abs] [pdf] [bib] [code]

On Unbiased Estimation for Partially Observed Diffusions. Jeremy Heng, Jeremie Houssineau, Ajay Jasra, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Improving Lipschitz-Constrained Neural Networks by Learning Activation Functions. Stanislas Ducotterd, Alexis Goujon, Pakshal Bohra, Dimitris Perdios, Sebastian Neumayer, Michael Unser, 2024. [abs] [pdf] [bib] [code]

Mathematical Framework for Online Social Media Auditing. Wasim Huleihel, Yehonathan Refael, 2024. [abs] [pdf] [bib]

An Embedding Framework for the Design and Analysis of Consistent Polyhedral Surrogates. Jessie Finocchiaro, Rafael M. Frongillo, Bo Waggoner, 2024. [abs] [pdf] [bib]

Low-rank Variational Bayes correction to the Laplace method. Janet van Niekerk, Haavard Rue, 2024. [abs] [pdf] [bib] [code]

Scaling the Convex Barrier with Sparse Dual Algorithms. Alessandro De Palma, Harkirat Singh Behl, Rudy Bunel, Philip H.S. Torr, M. Pawan Kumar, 2024. [abs] [pdf] [bib] [code]

Causal-learn: Causal Discovery in Python. Yujia Zheng, Biwei Huang, Wei Chen, Joseph Ramsey, Mingming Gong, Ruichu Cai, Shohei Shimizu, Peter Spirtes, Kun Zhang, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Decomposed Linear Dynamical Systems (dLDS) for learning the latent components of neural dynamics. Noga Mudrik, Yenho Chen, Eva Yezerets, Christopher J. Rozell, Adam S. Charles, 2024. [abs] [pdf] [bib] [code]

Existence and Minimax Theorems for Adversarial Surrogate Risks in Binary Classification. Natalie S. Frank, Jonathan Niles-Weed, 2024. [abs] [pdf] [bib]

Data Thinning for Convolution-Closed Distributions. Anna Neufeld, Ameer Dharamshi, Lucy L. Gao, Daniela Witten, 2024. [abs] [pdf] [bib] [code]

A projected semismooth Newton method for a class of nonconvex composite programs with strong prox-regularity. Jiang Hu, Kangkang Deng, Jiayuan Wu, Quanzheng Li, 2024. [abs] [pdf] [bib]

Revisiting RIP Guarantees for Sketching Operators on Mixture Models. Ayoub Belhadji, Rémi Gribonval, 2024. [abs] [pdf] [bib]

Monotonic Risk Relationships under Distribution Shifts for Regularized Risk Minimization. Daniel LeJeune, Jiayu Liu, Reinhard Heckel, 2024. [abs] [pdf] [bib] [code]

Polygonal Unadjusted Langevin Algorithms: Creating stable and efficient adaptive algorithms for neural networks. Dong-Young Lim, Sotirios Sabanis, 2024. [abs] [pdf] [bib]

Axiomatic effect propagation in structural causal models. Raghav Singal, George Michailidis, 2024. [abs] [pdf] [bib]

Optimal First-Order Algorithms as a Function of Inequalities. Chanwoo Park, Ernest K. Ryu, 2024. [abs] [pdf] [bib] [code]

Resource-Efficient Neural Networks for Embedded Systems. Wolfgang Roth, Günther Schindler, Bernhard Klein, Robert Peharz, Sebastian Tschiatschek, Holger Fröning, Franz Pernkopf, Zoubin Ghahramani, 2024. [abs] [pdf] [bib]

Trained Transformers Learn Linear Models In-Context. Ruiqi Zhang, Spencer Frei, Peter L. Bartlett, 2024. [abs] [pdf] [bib]

Adam-family Methods for Nonsmooth Optimization with Convergence Guarantees. Nachuan Xiao, Xiaoyin Hu, Xin Liu, Kim-Chuan Toh, 2024. [abs] [pdf] [bib]

Efficient Modality Selection in Multimodal Learning. Yifei He, Runxiang Cheng, Gargi Balasubramaniam, Yao-Hung Hubert Tsai, Han Zhao, 2024. [abs] [pdf] [bib]

A Multilabel Classification Framework for Approximate Nearest Neighbor Search. Ville Hyvönen, Elias Jääsaari, Teemu Roos, 2024. [abs] [pdf] [bib] [code]

Probabilistic Forecasting with Generative Networks via Scoring Rule Minimization. Lorenzo Pacchiardi, Rilwan A. Adewoyin, Peter Dueben, Ritabrata Dutta, 2024. [abs] [pdf] [bib] [code]

Multiple Descent in the Multiple Random Feature Model. Xuran Meng, Jianfeng Yao, Yuan Cao, 2024. [abs] [pdf] [bib]

Mean-Square Analysis of Discretized Itô Diffusions for Heavy-tailed Sampling. Ye He, Tyler Farghly, Krishnakumar Balasubramanian, Murat A. Erdogdu, 2024. [abs] [pdf] [bib]

Invariant and Equivariant Reynolds Networks. Akiyoshi Sannai, Makoto Kawano, Wataru Kumagai, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Personalized PCA: Decoupling Shared and Unique Features. Naichen Shi, Raed Al Kontar, 2024. [abs] [pdf] [bib] [code]

Survival Kernets: Scalable and Interpretable Deep Kernel Survival Analysis with an Accuracy Guarantee. George H. Chen, 2024. [abs] [pdf] [bib] [code]

On the Sample Complexity and Metastability of Heavy-tailed Policy Search in Continuous Control. Amrit Singh Bedi, Anjaly Parayil, Junyu Zhang, Mengdi Wang, Alec Koppel, 2024. [abs] [pdf] [bib]

Convergence for nonconvex ADMM, with applications to CT imaging. Rina Foygel Barber, Emil Y. Sidky, 2024. [abs] [pdf] [bib] [code]

Distributed Gaussian Mean Estimation under Communication Constraints: Optimal Rates and Communication-Efficient Algorithms. T. Tony Cai, Hongji Wei, 2024. [abs] [pdf] [bib]

Sparse NMF with Archetypal Regularization: Computational and Robustness Properties. Kayhan Behdin, Rahul Mazumder, 2024. [abs] [pdf] [bib] [code]

Deep Network Approximation: Beyond ReLU to Diverse Activation Functions. Shijun Zhang, Jianfeng Lu, Hongkai Zhao, 2024. [abs] [pdf] [bib]

Effect-Invariant Mechanisms for Policy Generalization. Sorawit Saengkyongam, Niklas Pfister, Predrag Klasnja, Susan Murphy, Jonas Peters, 2024. [abs] [pdf] [bib]

Pygmtools: A Python Graph Matching Toolkit. Runzhong Wang, Ziao Guo, Wenzheng Pan, Jiale Ma, Yikai Zhang, Nan Yang, Qi Liu, Longxuan Wei, Hanxue Zhang, Chang Liu, Zetian Jiang, Xiaokang Yang, Junchi Yan, 2024. (Machine Learning Open Source Software Paper) [abs] [pdf] [bib] [code]

Heterogeneous-Agent Reinforcement Learning. Yifan Zhong, Jakub Grudzien Kuba, Xidong Feng, Siyi Hu, Jiaming Ji, Yaodong Yang, 2024. [abs] [pdf] [bib] [code]

Sample-efficient Adversarial Imitation Learning. Dahuin Jung, Hyungyu Lee, Sungroh Yoon, 2024. [abs] [pdf] [bib]

Stochastic Modified Flows, Mean-Field Limits and Dynamics of Stochastic Gradient Descent. Benjamin Gess, Sebastian Kassing, Vitalii Konarovskyi, 2024. [abs] [pdf] [bib]

Rates of convergence for density estimation with generative adversarial networks. Nikita Puchkin, Sergey Samsonov, Denis Belomestny, Eric Moulines, Alexey Naumov, 2024. [abs] [pdf] [bib]

Additive smoothing error in backward variational inference for general state-space models. Mathis Chagneux, Elisabeth Gassiat, Pierre Gloaguen, Sylvain Le Corff, 2024. [abs] [pdf] [bib]

Optimal Bump Functions for Shallow ReLU networks: Weight Decay, Depth Separation, Curse of Dimensionality. Stephan Wojtowytsch, 2024. [abs] [pdf] [bib]

Numerically Stable Sparse Gaussian Processes via Minimum Separation using Cover Trees. Alexander Terenin, David R. Burt, Artem Artemev, Seth Flaxman, Mark van der Wilk, Carl Edward Rasmussen, Hong Ge, 2024. [abs] [pdf] [bib] [code]

On Tail Decay Rate Estimation of Loss Function Distributions. Etrit Haxholli, Marco Lorenzi, 2024. [abs] [pdf] [bib] [code]

Deep Nonparametric Estimation of Operators between Infinite Dimensional Spaces. Hao Liu, Haizhao Yang, Minshuo Chen, Tuo Zhao, Wenjing Liao, 2024. [abs] [pdf] [bib]

Post-Regularization Confidence Bands for Ordinary Differential Equations. Xiaowu Dai, Lexin Li, 2024. [abs] [pdf] [bib]

On the Generalization of Stochastic Gradient Descent with Momentum. Ali Ramezani-Kebrya, Kimon Antonakopoulos, Volkan Cevher, Ashish Khisti, Ben Liang, 2024. [abs] [pdf] [bib]

Pursuit of the Cluster Structure of Network Lasso: Recovery Condition and Non-convex Extension. Shotaro Yagishita, Jun-ya Gotoh, 2024. [abs] [pdf] [bib]

Iterate Averaging in the Quest for Best Test Error. Diego Granziol, Nicholas P. Baskerville, Xingchen Wan, Samuel Albanie, Stephen Roberts, 2024. [abs] [pdf] [bib] [code]

Nonparametric Inference under B-bits Quantization. Kexuan Li, Ruiqi Liu, Ganggang Xu, Zuofeng Shang, 2024. [abs] [pdf] [bib]

Black Box Variational Inference with a Deterministic Objective: Faster, More Accurate, and Even More Black Box. Ryan Giordano, Martin Ingram, Tamara Broderick, 2024. [abs] [pdf] [bib] [code]

On Sufficient Graphical Models. Bing Li, Kyongwon Kim, 2024. [abs] [pdf] [bib]

Localized Debiased Machine Learning: Efficient Inference on Quantile Treatment Effects and Beyond. Nathan Kallus, Xiaojie Mao, Masatoshi Uehara, 2024. [abs] [pdf] [bib] [code]

On the Effect of Initialization: The Scaling Path of 2-Layer Neural Networks. Sebastian Neumayer, Lénaïc Chizat, Michael Unser, 2024. [abs] [pdf] [bib]

Improving physics-informed neural networks with meta-learned optimization. Alex Bihlo, 2024. [abs] [pdf] [bib]

A Comparison of Continuous-Time Approximations to Stochastic Gradient Descent. Stefan Ankirchner, Stefan Perko, 2024. [abs] [pdf] [bib]

Critically Assessing the State of the Art in Neural Network Verification. Matthias König, Annelot W. Bosman, Holger H. Hoos, Jan N. van Rijn, 2024. [abs] [pdf] [bib]

Estimating the Minimizer and the Minimum Value of a Regression Function under Passive Design. Arya Akhavan, Davit Gogolashvili, Alexandre B. Tsybakov, 2024. [abs] [pdf] [bib]

Modeling Random Networks with Heterogeneous Reciprocity. Daniel Cirkovic, Tiandong Wang, 2024. [abs] [pdf] [bib]

Exploration, Exploitation, and Engagement in Multi-Armed Bandits with Abandonment. Zixian Yang, Xin Liu, Lei Ying, 2024. [abs] [pdf] [bib]

On Efficient and Scalable Computation of the Nonparametric Maximum Likelihood Estimator in Mixture Models. Yangjing Zhang, Ying Cui, Bodhisattva Sen, Kim-Chuan Toh, 2024. [abs] [pdf] [bib] [code]

Decorrelated Variable Importance. Isabella Verdinelli, Larry Wasserman, 2024. [abs] [pdf] [bib]

Model-Free Representation Learning and Exploration in Low-Rank MDPs. Aditya Modi, Jinglin Chen, Akshay Krishnamurthy, Nan Jiang, Alekh Agarwal, 2024. [abs] [pdf] [bib]

Seeded Graph Matching for the Correlated Gaussian Wigner Model via the Projected Power Method. Ernesto Araya, Guillaume Braun, Hemant Tyagi, 2024. [abs] [pdf] [bib] [code]

Fast Policy Extragradient Methods for Competitive Games with Entropy Regularization. Shicong Cen, Yuting Wei, Yuejie Chi, 2024. [abs] [pdf] [bib]

Power of knockoff: The impact of ranking algorithm, augmented design, and symmetric statistic. Zheng Tracy Ke, Jun S. Liu, Yucong Ma, 2024. [abs] [pdf] [bib]

Lower Complexity Bounds of Finite-Sum Optimization Problems: The Results and Construction. Yuze Han, Guangzeng Xie, Zhihua Zhang, 2024. [abs] [pdf] [bib]

On Truthing Issues in Supervised Classification. Jonathan K. Su, 2024. [abs] [pdf] [bib]


Trending research (Papers with Code)

StoryDiffusion: Consistent Self-Attention for Long-Range Image and Video Generation

This module converts the generated sequence of images into videos with smooth transitions and consistent subjects that are significantly more stable than the modules based on latent spaces only, especially in the context of long video generation.

DeepSeek-V2: A Strong, Economical, and Efficient Mixture-of-Experts Language Model

MLA guarantees efficient inference through significantly compressing the Key-Value (KV) cache into a latent vector, while DeepSeekMoE enables training strong models at an economical cost through sparse computation.
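The core idea, caching one small latent vector per token instead of full per-head keys and values, can be illustrated with a toy Python sketch. All dimensions below are hypothetical toy values, not DeepSeek-V2's configuration, and the sketch omits parts of the real design, such as the separate positional (RoPE) key component and the option of folding the up-projections into other weight matrices at inference time.

```python
import random


def matvec(W, x):
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def random_matrix(rows, cols, rng):
    """Small random weights standing in for learned projection matrices."""
    return [[rng.gauss(0.0, 0.1) for _ in range(cols)] for _ in range(rows)]


# Toy sizes (hypothetical, chosen only for illustration).
D_MODEL, N_HEADS, HEAD_DIM, D_LATENT = 64, 4, 16, 8

rng = random.Random(0)
W_down = random_matrix(D_LATENT, D_MODEL, rng)             # hidden state -> latent
W_up_k = random_matrix(N_HEADS * HEAD_DIM, D_LATENT, rng)  # latent -> keys (all heads)
W_up_v = random_matrix(N_HEADS * HEAD_DIM, D_LATENT, rng)  # latent -> values (all heads)

h = [rng.gauss(0.0, 1.0) for _ in range(D_MODEL)]  # one token's hidden state
c = matvec(W_down, h)  # the only per-token vector kept in the cache
k = matvec(W_up_k, c)  # keys recovered from the cached latent
v = matvec(W_up_v, c)  # values recovered from the cached latent


def cache_floats_per_token(n_layers, latent):
    """Number of cached values per token across all layers."""
    if latent:
        return n_layers * D_LATENT            # one latent vector per layer
    return n_layers * 2 * N_HEADS * HEAD_DIM  # full K and V for every head


print(len(c), len(k), len(v))  # -> 8 64 64
print(cache_floats_per_token(30, latent=False), cache_floats_per_token(30, latent=True))  # -> 3840 240
```

With these toy sizes the latent cache holds 240 values per token across 30 layers versus 3,840 for standard multi-head attention, a 16x reduction; the actual savings in DeepSeek-V2 depend on its real dimensions.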

A decoder-only foundation model for time-series forecasting

Motivated by recent advances in large language models for Natural Language Processing (NLP), we design a time-series foundation model for forecasting whose out-of-the-box zero-shot performance on a variety of public datasets comes close to the accuracy of state-of-the-art supervised forecasting models for each individual dataset.

Lumina-T2X: Transforming Text into Any Modality, Resolution, and Duration via Flow-based Large Diffusion Transformers

Sora unveils the potential of scaling Diffusion Transformer for generating photorealistic images and videos at arbitrary resolutions, aspect ratios, and durations, yet it still lacks sufficient implementation details.

Granite Code Models: A Family of Open Foundation Models for Code Intelligence

ibm-granite/granite-code-models • 7 May 2024

Increasingly, code LLMs are being integrated into software development environments to improve the productivity of human programmers, and LLM-based agents are beginning to show promise for handling complex tasks autonomously.

AniTalker: Animate Vivid and Diverse Talking Faces through Identity-Decoupled Facial Motion Encoding

The paper introduces AniTalker, an innovative framework designed to generate lifelike talking faces from a single portrait.

AgentScope: A Flexible yet Robust Multi-Agent Platform

modelscope/agentscope • 21 Feb 2024

With the rapid advancement of Large Language Models (LLMs), significant progress has been made in multi-agent applications.

CLLMs: Consistency Large Language Models

Parallel decoding methods such as Jacobi decoding show promise for more efficient LLM inference, as they break the sequential nature of the LLM decoding process and transform it into parallelizable computation.
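Plain Jacobi decoding can be sketched in a few lines of Python. The `next_token` function below is a hypothetical deterministic stand-in for an LLM's greedy prediction. Every position in the guessed block is updated from the previous iteration's guesses, so the updates within one iteration are independent and could run as a single batched forward pass; the loop stops at a fixed point, which matches ordinary greedy decoding. This illustrates only Jacobi decoding itself, not the consistency training that CLLMs add on top of it.

```python
def next_token(prefix):
    """Hypothetical deterministic 'model': greedy next token given a prefix."""
    return (sum(prefix) * 31 + len(prefix)) % 50


def sequential_decode(prompt, n):
    """Standard autoregressive greedy decoding: one model call per new token."""
    out = list(prompt)
    for _ in range(n):
        out.append(next_token(out))
    return out[len(prompt):]


def jacobi_decode(prompt, n, init=0):
    """Guess all n tokens, then refine every position in parallel until nothing changes.

    Returns (tokens, iterations). Position i is recomputed from the prompt plus the
    previous guesses for positions < i, so each iteration fixes at least one more
    leading token and the loop needs at most n iterations.
    """
    guess = [init] * n
    for iteration in range(1, n + 1):
        # All n updates read only `guess`, so they are independent of each other.
        new = [next_token(list(prompt) + guess[:i]) for i in range(n)]
        if new == guess:  # fixed point reached
            return guess, iteration
        guess = new
    return guess, n


tokens, iters = jacobi_decode([1, 2, 3], 8)
print(tokens == sequential_decode([1, 2, 3], 8), iters)
```

The worst case is no faster than sequential decoding; the speed-up that methods like CLLMs target comes from reaching the fixed point in far fewer than n iterations.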

A Multi-Level Superoptimizer for Tensor Programs

mirage-project/mirage • 9 May 2024

We introduce Mirage, the first multi-level superoptimizer for tensor programs.

FiT: Flexible Vision Transformer for Diffusion Model

Nature is infinitely resolution-free.


Machine learning articles from across Nature Portfolio

Machine learning is the ability of a machine to improve its performance based on previous results. Machine learning methods enable computers to learn without being explicitly programmed and have multiple applications, for example, in the improvement of data mining algorithms.

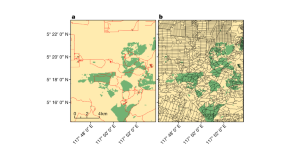

‘Ghost roads’ could be the biggest direct threat to tropical forests

By using volunteers to map roads in forests across Borneo, Sumatra and New Guinea, an innovative study shows that existing maps of the Asia-Pacific region are rife with errors. It also reveals that unmapped roads are extremely common — up to seven times more abundant than mapped ones. Such ‘ghost roads’ are promoting illegal logging, mining, wildlife poaching and deforestation in some of the world’s biologically richest ecosystems.

Adapting vision–language AI models to cardiology tasks

Vision–language models can be trained to read cardiac ultrasound images with implications for improving clinical workflows, but additional development and validation will be required before such models can replace humans.

- Rima Arnaout

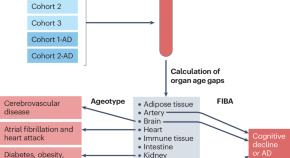

Not every organ ticks the same

A new study describes the development of proteomics-based ageing clocks that calculate the biological age of specific organs and define features of extreme ageing associated with age-related diseases. Their findings support the notion that plasma proteins can be used to monitor the ageing rates of specific organs and disease progression.

- Khaoula Talbi

- Anette Melk

Latest Research and Reviews

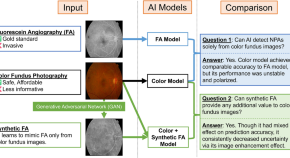

Deep learning segmentation of non-perfusion area from color fundus images and AI-generated fluorescein angiography

- Kanato Masayoshi

- Yusaku Katada

- Toshihide Kurihara

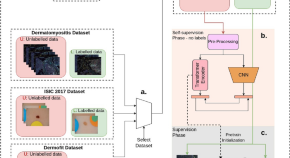

Shifting to machine supervision: annotation-efficient semi and self-supervised learning for automatic medical image segmentation and classification

- Pranav Singh

- Raviteja Chukkapalli

- Jacopo Cirrone

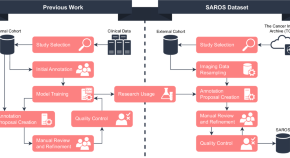

SAROS: A dataset for whole-body region and organ segmentation in CT imaging

- Sven Koitka

- Giulia Baldini

MISATO: machine learning dataset of protein–ligand complexes for structure-based drug discovery

MISATO is a database for structure-based drug discovery that combines quantum mechanics data with molecular dynamics simulations on ~20,000 protein–ligand structures. The artificial intelligence models included provide an easy entry point for the machine learning and drug discovery communities.

- Till Siebenmorgen

- Filipe Menezes

- Grzegorz M. Popowicz

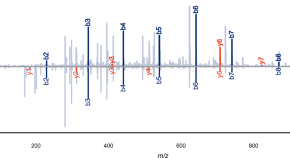

Fragment ion intensity prediction improves the identification rate of non-tryptic peptides in timsTOF

Immunopeptidomics is crucial for the discovery of potential immunotherapy and vaccine candidates. Here, the authors generate a ground truth timsTOF dataset to fine-tune the deep learning model Prosit, improving peptide-spectrum match rescoring by up to 3-fold during immunopeptide identification.

- Charlotte Adams

- Wassim Gabriel

- Kurt Boonen

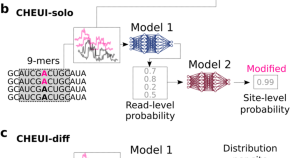

Prediction of m6A and m5C at single-molecule resolution reveals a transcriptome-wide co-occurrence of RNA modifications

The epitranscriptome holds many unexplored RNA functions, but detecting multiple modifications from one sample remains challenging. Here, the authors devise a strategy combining AI and nanopore sequencing to uncover a transcriptome-wide co-occurrence of two modification types in individual RNA molecules.

- P Acera Mateos

News and Comment

The US Congress is taking on AI —this computer scientist is helping

Kiri Wagstaff, who temporarily shelved her academic career to provide advice on federal AI legislation, talks about life inside the halls of power.

- Nicola Jones

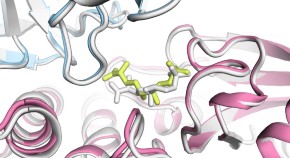

Major AlphaFold upgrade offers boost for drug discovery

Latest version of the AI models how proteins interact with other molecules — but DeepMind restricts access to the tool.

- Ewen Callaway

Who’s making chips for AI? Chinese manufacturers lag behind US tech giants

Researchers in China say they are finding themselves five to ten years behind their US counterparts as export restrictions bite.

- Jonathan O'Callaghan

Response to “The perpetual motion machine of AI-generated data and the distraction of ChatGPT as a ‘scientist’”

- William Stafford Noble


Getting Started with Research Papers on Machine Learning: What to Read & How

A quick glance at any top-rated research paper on Machine Learning shows how Machine Learning and digital technologies are becoming an integral part of every industry.

According to recent research by Gartner, “Smart machines will enter mainstream adoption by 2021.” Adopting Machine Learning can help your organization gain a major competitive edge.

Why is Machine Learning so Hot Today?

With over 250 million active customers and tens of millions of products, Amazon uses machine learning to make accurate product recommendations almost instantly, based on each customer's browsing and purchasing behavior. No team of humans could do that.
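To illustrate how recommendations can be derived from purchasing behavior, here is a minimal item-to-item co-occurrence sketch in Python: items most often bought together with what a customer already has are ranked highest. It is a generic textbook technique run on made-up basket data, not a description of Amazon's production system.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_co_counts(baskets):
    """Map each item to a Counter of the items bought in the same basket."""
    co = defaultdict(Counter)
    for basket in baskets:
        # set() ignores duplicates; sorted() makes pair generation deterministic
        for a, b in combinations(sorted(set(basket)), 2):
            co[a][b] += 1
            co[b][a] += 1
    return co


def recommend(co, history, k=3):
    """Rank items the customer does not have yet by co-occurrence with their history."""
    scores = Counter()
    for item in history:
        scores.update(co.get(item, Counter()))
    for item in history:
        scores.pop(item, None)  # never recommend something already owned
    return [item for item, _ in scores.most_common(k)]


# Made-up purchase baskets for illustration.
baskets = [
    ["book", "lamp"],
    ["book", "lamp", "pen"],
    ["book", "pen"],
    ["lamp", "bulb"],
    ["book", "lamp"],
]
co = build_co_counts(baskets)
print(recommend(co, ["book"]))          # -> ['lamp', 'pen']
print(recommend(co, ["book", "lamp"]))  # -> ['pen', 'bulb']
```

Raw counts favor popular items; a real system would typically normalize them (for example by item popularity) before ranking.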

Google is using machine learning in driverless cars to make our roads safer. IBM's Watson is already a big name in healthcare thanks to its machine learning and cognitive computing power.

If you are interested in a career in Machine Learning or Deep Learning, you should develop a habit of reading research papers on Machine Learning regularly. Doing so keeps you abreast of the latest trends and thinking.

Course books define the basic premises of your learning; research papers on Machine Learning give you a deeper understanding of how models are implemented in every industry.

As an ML professional, your primary task is to think about problems that are difficult to identify and to solve them through innovative means, rather than to memorize what has already been found.

Another advantage of browsing through research papers on machine learning is that you learn Machine Learning algorithms better. Students and ML professionals who read research papers on machine learning algorithms tend to understand better how to program and implement them.

Want to Know How Machine Learning Is Impacting our Lives?

The food or grocery segment is one area where Machine Learning has left an indelible mark. Up to 40% of a grocer’s revenue comes from sales of fresh produce. Therefore, maintaining product quality is very important. But that is easier said than done.

Grocers depend on their supply chains and consumers, and keeping shelves stocked and products fresh is a constant challenge for them.

With machine learning, grocers have a smarter way to replenish fresh food. They can train ML models on historical sales data, along with data about promotions and store hours, and then use the resulting analyses to gauge how much of each product to order and display.

ML systems can also collect information about weather forecasts, public holidays, order quantity parameters, and other contextual information.

Grocers or store-owners can then issue a recommended order every 24 hours so that the grocer always has the appropriate products in the appropriate amounts in stock.
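As a rough illustration of this replenishment loop, here is a minimal Python sketch. It assumes a plain linear demand model fitted by ordinary least squares on two hypothetical binary features (a promotion flag and a public-holiday flag); the sales history, the safety-stock rule, and every function name are invented for illustration. Real systems would use far richer models and features such as weather forecasts and store hours.

```python
# Minimal sketch: fit a linear demand model on hypothetical history, then turn
# tomorrow's forecast into a recommended order quantity.


def solve(a, b):
    """Solve the square linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def fit_linear(features, targets):
    """Ordinary least squares via the normal equations (X^T X) w = X^T y."""
    X = [[1.0] + [float(v) for v in row] for row in features]  # intercept column first
    k = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * y for row, y in zip(X, targets)) for i in range(k)]
    return solve(xtx, xty)


def predict(weights, row):
    return weights[0] + sum(w * v for w, v in zip(weights[1:], row))


def recommended_order(weights, tomorrow, on_hand, safety_stock):
    """Units to order: forecast demand plus a safety buffer, minus stock already on hand."""
    forecast = predict(weights, tomorrow)
    return max(0, round(forecast + safety_stock - on_hand))


# Hypothetical history: ((promotion_flag, holiday_flag), units_sold).
history = [((0, 0), 100), ((1, 0), 130), ((0, 1), 150), ((1, 1), 180), ((0, 0), 100)]
weights = fit_linear([f for f, _ in history], [y for _, y in history])
print(recommended_order(weights, tomorrow=(1, 0), on_hand=40, safety_stock=10))  # 100
```

Ordinary least squares is used only because it is the simplest model that can be fitted from scratch; swapping in a stronger forecaster would not change the shape of the daily "forecast, add buffer, subtract stock" loop.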

Research Papers on Machine Learning Algorithms

Traditionally, the choice of which machine learning algorithm and which underlying model structure to use has been based on time-consuming investigation by human experts, an approach that research papers on Machine Learning have increasingly questioned.

In practice, the best algorithm and the most appropriate model architecture, with the correct hyper-parameters, have usually been found through trial and error.
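Trial and error of this kind is usually automated as a search over hyper-parameters. The sketch below shows random search, one common way to do it. The toy `validation_loss` function is a hypothetical stand-in for training and scoring a real model, and the sampling ranges are assumptions chosen for illustration.

```python
import math
import random


def validation_loss(learning_rate, l2_penalty):
    """Hypothetical stand-in for 'train a model with these settings and score it'.

    This toy surface is lowest at learning_rate=1e-2, l2_penalty=1e-3.
    """
    return (math.log10(learning_rate) + 2) ** 2 + (math.log10(l2_penalty) + 3) ** 2


def random_search(n_trials, seed=0):
    """Sample configurations at random and keep the one with the lowest loss."""
    rng = random.Random(seed)
    best = None
    for _ in range(n_trials):
        # Sample on a log scale, because useful values span orders of magnitude.
        config = {
            "learning_rate": 10 ** rng.uniform(-5, 0),
            "l2_penalty": 10 ** rng.uniform(-6, -1),
        }
        loss = validation_loss(**config)
        if best is None or loss < best[0]:
            best = (loss, config)
    return best


best_loss, best_config = random_search(n_trials=200)
print(f"best loss {best_loss:.4f} with {best_config}")
```

Random search is shown rather than a grid because, for the same number of trials, it tries many more distinct values of each individual hyper-parameter.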

Meta-Learning, as it has evolved in recent research papers on machine learning, is a concept in which the exploration of algorithms and model structures is itself carried out using machine learning methods.

For us, learning happens at multiple scales. Our brains are born with the ability to learn new concepts and tasks. Similarly, research papers in Machine Learning show that in Meta-Learning or Learning to Learn, there is a hierarchical application of AI algorithms.

This includes first learning the best network architecture, and then learning which optimization algorithms and hyper-parameters are most appropriate for the selected model.

The model selected through this process then takes care of the more mundane tasks. This line of research has already achieved remarkable results using a range of optimization techniques, of which Evolution Strategies (ES) are perhaps the best example.
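To make Evolution Strategies concrete, here is a minimal sketch of the basic ES gradient estimate in the style of Salimans et al. (2017): perturb the parameters with Gaussian noise, score each perturbation, and step in the direction of the reward-weighted noise. The quadratic `fitness` function, its `TARGET`, and all step sizes are assumptions chosen for illustration; in a meta-learning setting the fitness would instead be something like the validation performance of a trained model.

```python
import random

TARGET = (0.5, -0.3, 0.8)  # hypothetical optimum, unknown to the optimizer


def fitness(theta):
    """Toy objective to maximize: negative squared distance to TARGET."""
    return -sum((p - t) ** 2 for p, t in zip(theta, TARGET))


def evolution_strategy(dim=3, iterations=300, population=50, sigma=0.1, alpha=0.02, seed=0):
    rng = random.Random(seed)
    theta = [0.0] * dim
    for _ in range(iterations):
        # Sample Gaussian perturbations and score each perturbed parameter vector.
        noise = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(population)]
        rewards = [fitness([p + sigma * e for p, e in zip(theta, eps)]) for eps in noise]
        # Subtract the mean reward as a baseline to reduce the estimator's variance.
        mean_reward = sum(rewards) / population
        advantages = [r - mean_reward for r in rewards]
        # Gradient estimate: sum_i advantage_i * eps_i / (population * sigma).
        for j in range(dim):
            grad_j = sum(a * eps[j] for a, eps in zip(advantages, noise)) / (population * sigma)
            theta[j] += alpha * grad_j  # gradient ascent step
    return theta


theta = evolution_strategy()
print([round(p, 2) for p in theta])
```

The optimizer never computes a true gradient of `fitness`; it only evaluates the function, which is why ES suits objectives that are noisy or not differentiable.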

With a Meta-Reinforcement Learning algorithm, the objective is to learn the workings of a Reinforcement Learning agent, including both the Reinforcement Learning algorithm and the policy.

Pieter Abbeel explained this approach at the Meta-Learning Symposium held during NIPS 2017. The work he presented is also among the most highly rated research on Machine Learning.

Research Papers on Machine Learning: One-Shot Learning

In one of his research papers on Machine Learning, Oriol Vinyals notes that humans are capable of learning new concepts with minimal supervision. Deep Learning networks, by contrast, require huge amounts of labelled training data, because neural networks are still not able to recognize a new object that they have seen only once or twice.

However, more recent research on machine learning has shown that model-based, metric-based, or optimization-based Meta-Learning approaches can define network architectures that learn from just a few examples.

In particular, the research on One-Shot Learning by Vinyals and colleagues shows significant improvements over previous baseline one-shot accuracy on vision and language tasks.

This approach learns a classifier that uses an attention kernel to map a small labelled support set and an unlabelled example to that example’s label.
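The attention mechanism described above can be sketched as follows: compute the cosine similarity between the query embedding and each support embedding, turn the similarities into weights with a softmax, and sum the weighted support labels into a class distribution. This is a simplified illustration that assumes the embeddings are already computed; in Matching Networks the embedding functions themselves are learned, which this sketch omits, and the vectors below are made up.

```python
import math


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def attention_classify(query, support, labels, num_classes):
    """Class distribution for `query` as an attention-weighted sum of support labels."""
    sims = [cosine(query, s) for s in support]
    # Softmax over cosine similarities gives the attention weights a(query, x_i).
    m = max(sims)
    exps = [math.exp(s - m) for s in sims]
    total = sum(exps)
    weights = [e / total for e in exps]
    probs = [0.0] * num_classes
    for w, y in zip(weights, labels):
        probs[y] += w  # same as summing w_i * one_hot(y_i)
    return probs


# Hypothetical support set: two examples of class 0 and one of class 1.
support = [[1.0, 0.0], [0.8, 0.2], [0.0, 1.0]]
labels = [0, 0, 1]
probs = attention_classify([0.9, 0.1], support, labels, num_classes=2)
print(probs.index(max(probs)))  # 0
```

Because classification is just a weighted lookup over the support set, a new class can be recognized from a single labelled example without retraining.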

For Reinforcement Learning applications, One-Shot Imitation Learning opens up the possibility of learning from just a few demonstrations of a given task. By applying a Meta-Learning approach to train robust policies, it is possible to generalize to new instances of the same task.

Research Papers on Machine Learning: Simulation-Based Learning

Several existing Reinforcement Learning (RL) systems today rely on simulations to explore the solution space and solve complex problems. These include systems based on Self-Play for gaming applications.

Self-Play is an essential part of the algorithms used by Google DeepMind in AlphaGo and in the more recent AlphaGo Zero reinforcement learning system. AlphaGo was the first of these breakthrough systems to defeat a world-champion player at the ancient Chinese game of Go.

The newer AlphaGo Zero system is a significant step forward. It was trained entirely by Self-Play RL, starting from completely random play, and received no human data or supervision. The system is effectively self-taught.

Simulation for Reinforcement Learning training has also been used in Imagination-Augmented RL algorithms. The recent Imagination-Augmented Agents (I2A) approach improves on the original model-based RL algorithms by combining model-free and model-based policy rollouts.

This approach allows the policy to improve and has resulted in a significant improvement in performance.

Research Papers on Machine Learning: The Wasserstein Auto-Encoder

The Wasserstein Auto-Encoder paper builds on autoencoders, neural networks that are used for dimensionality reduction and, increasingly, as generative models. The Variational Autoencoder (VAE), for instance, is widely used in image and text applications.

Researchers from the Max Planck Institute for Intelligent Systems, Germany, in collaboration with scientists from Google Brain, developed the Wasserstein Auto-Encoder (WAE). It brings the Wasserstein distance, an optimal transport distance between probability distributions, into the training of generative models.
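For intuition about the Wasserstein distance itself, here is a minimal sketch for the simplest case: two one-dimensional empirical distributions with the same number of samples, where the optimal transport plan simply pairs the sorted samples. This illustrates the distance only; WAE itself optimizes a relaxed objective rather than computing this quantity directly on data.

```python
def wasserstein_1d(xs, ys):
    """W1 distance between two equally sized 1-D empirical distributions."""
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes samples of equal size")
    # In one dimension, the optimal transport plan matches sorted samples in order.
    return sum(abs(a - b) for a, b in zip(sorted(xs), sorted(ys))) / len(xs)


print(wasserstein_1d([0.0, 1.0, 2.0], [1.0, 2.0, 3.0]))  # 1.0: every point moves by 1
print(wasserstein_1d([3.0, 1.0, 2.0], [1.0, 2.0, 3.0]))  # 0.0: same distribution
```

Unlike divergences that compare densities point by point, this distance stays meaningful when two distributions barely overlap, because it measures how far mass has to move.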

Their aim was to minimize the optimal transport cost between the model distribution and the data distribution.
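For reference, the WAE paper (Tolstikhin et al., 2018) starts from the optimal transport cost $W_c(P_X, P_G)$ between the data distribution $P_X$ and the model distribution $P_G$. For a deterministic decoder $G$, this cost can be written as an optimization over encoders $Q(Z \mid X)$ whose aggregated posterior $Q_Z$ matches the prior $P_Z$; WAE then relaxes that constraint into a penalty weighted by $\lambda > 0$. Here $c$ is a cost function and $\mathcal{D}_Z$ is any divergence between $Q_Z$ and $P_Z$ (the paper uses GAN-based and MMD-based choices):

$$
W_c(P_X, P_G) = \inf_{Q(Z \mid X):\; Q_Z = P_Z} \mathbb{E}_{P_X}\, \mathbb{E}_{Q(Z \mid X)}\big[c\big(X, G(Z)\big)\big]
$$

$$
D_{\mathrm{WAE}}(P_X, P_G) = \inf_{Q(Z \mid X) \in \mathcal{Q}} \mathbb{E}_{P_X}\, \mathbb{E}_{Q(Z \mid X)}\big[c\big(X, G(Z)\big)\big] + \lambda \cdot \mathcal{D}_Z(Q_Z, P_Z)
$$

The first term is a reconstruction cost; the second pushes the distribution of encoded training points toward the prior, so that decoding samples from the prior yields realistic outputs.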

In the authors’ experiments, WAE kept the stable training and simple encoder-decoder architecture of the VAE while generating samples of better quality.

Research Papers on Machine Learning: Ultra-strong Machine Learning Comprehensibility of Programs Learned with ILP

The paper on Ultra-strong machine learning comprehensibility of programs learned with ILP is among the most widely read research papers on machine learning algorithms. Its authors introduced an operational definition of comprehensibility for logic programs and conducted human trials to determine how the properties of a program affect its ease of comprehension.

The authors ran two sets of experiments testing the human comprehensibility of logic programs. In the first experiment, they tested human comprehensibility with and without predicate invention.
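Predicate invention can be hard to picture, so here is a purely illustrative Python sketch, not taken from the paper’s materials: a family-relations concept, great-grandparent, written once as a single flat rule and once with an auxiliary, invented predicate that the main rule reuses. The facts and names are hypothetical; an ILP system such as Metagol would output logic-program clauses, and an invented predicate would typically carry a machine-generated name rather than a meaningful one like `grandparent`.

```python
# Hypothetical background facts: (x, y) means x is a parent of y.
PARENT = {("ann", "bob"), ("bob", "cat"), ("cat", "dan")}


def parent(x, y):
    return (x, y) in PARENT


def people():
    return {p for pair in PARENT for p in pair}


# Without predicate invention: one flat rule chaining three parent/2 literals.
def great_grandparent_flat(x, w):
    return any(parent(x, y) and parent(y, z) and parent(z, w)
               for y in people() for z in people())


# With predicate invention: an auxiliary predicate is introduced...
def grandparent(x, z):
    return any(parent(x, y) and parent(y, z) for y in people())


# ...and the target concept is defined in terms of it.
def great_grandparent_invented(x, w):
    return any(parent(x, y) and grandparent(y, w) for y in people())


print(great_grandparent_flat("ann", "dan"), great_grandparent_invented("ann", "dan"))  # True True
```

Both definitions cover exactly the same cases; the question the experiment asks is which form humans find easier to understand and apply.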

In the second experiment, they directly tested whether any state-of-the-art ILP system is an ultra-strong learner in Michie’s sense, selecting the Metagol system for use in human trials.

The results show that participants were not able to learn the relational concept on their own from a set of examples, but they were able to apply the relational definition provided by the ILP system correctly.

This implies the existence of a class of relational concepts that are hard for humans to acquire, though easy to understand given an abstract explanation. The authors suggest that an improved understanding of this class could be relevant to contexts involving human learning, teaching, and verbal interaction.

Develop your Own Thoughts

While all of the aforementioned papers present a unique perspective on advances in machine learning, you must develop your own thoughts on a hot topic and publish them.

The novel methods described in these research papers provide diverse avenues for ML research. As a Machine Learning and artificial intelligence enthusiast, you can gain a lot from the latest techniques developed in research.

For researchers, Machine Learning looks promising as a career option. You may take a MOOC or another online course such as the Johns Hopkins Data Science Specialization.

Participating in Kaggle or other online machine learning competitions will also help you gain experience, and attending local meetups or academic conferences is always a fruitful way to learn.

Career in Data Science

You may also enroll in a Data Analytics course to open up more lucrative career options in Data Science. An industry-relevant curriculum, a pragmatic market-ready approach, and a hands-on Capstone Project are some of the best reasons for choosing Digital Vidya.

For a rewarding career in Machine Learning, you must stay up to date with upcoming changes. This means keeping abreast of the latest developments in tools, theory, and algorithms.

Online communities are great places to learn about these changes. Also, read widely: for example, the papers on Google MapReduce, the Google File System, and Google Bigtable, and the article The Unreasonable Effectiveness of Data. You will also find plenty of free Machine Learning books online. Practice problems, coding competitions, and hackathons are a great way to hone your skills.

Try to answer the questions raised at the end of every research paper on Machine Learning. In addition to reading research papers, subscribe to Machine Learning newsletters or join Machine Learning communities. Communities are the better option, as they help you gain knowledge through the practical implementation of Machine Learning.

To build a promising career in Machine Learning, join the Machine Learning Course.


1 thought on “Getting Started with Research Papers on Machine Learning: What to Read & How”

I really appreciate the work you have done, you explained everything in such an amazing and simple way.



Machine Learning For Absolute Beginners

Related Papers

International Journal of Engineering and Applied Sciences (IJEAS)

Jawed Qureshi

Machine learning is more than just a buzzword. It is fundamentally changing the way that industries, and the businesses within them, carry out their everyday functions and activities, from Finance and Recruitment all the way across to Sales and Marketing. Machine learning can be defined as a subset of artificial intelligence (AI) that relies on models and inference to perform a specific task effectively, using algorithms and scientific models. In a more practical sense, a machine learning system takes a set of data, uses it to answer a question, and continues to ingest more and more data to teach itself over time, ultimately becoming able to answer future questions in an unsupervised manner. This paper explores how different industries and organizations are using machine learning algorithms in their day-to-day activities and where we see this transposed into our own lives.

Computer Science & Information Technology (CS & IT) Computer Science Conference Proceedings (CSCP)

There has been a dramatic increase in media interest in Artificial Intelligence (AI), in particular with regard to the promises and potential pitfalls of ongoing research, development, and deployments. Recent news of successes and failures is discussed. The existential opportunities and threats of extreme goals of AI (expressed in terms of Superintelligence/AGI and socioeconomic impacts) are examined with regard to this media "frenzy", and some comment and analysis is provided. The paper has two parts: first, a review of this media coverage, and second, a recommendation to name AI projects with precise and realistic short-term goals of achieving really useful machines, with specific smart components. An example of this is provided, namely the RUMLSM project, a novel AI/Machine Learning system proposed to resolve some of the known issues in bottom-up Deep Learning by Neural Networks, recognised by DARPA as the "Third Wave of AI." An extensive Internet-accessible reference set of supporting media articles, up to date at the time of writing, is provided.

Machine Learning

Emanuel Diamant

Koteswara Rao Pasupuleti