Guide to Experimental Design | Overview, 5 steps & Examples

Published on December 3, 2019 by Rebecca Bevans. Revised on June 21, 2023.

Experiments are used to study causal relationships. You manipulate one or more independent variables and measure their effect on one or more dependent variables.

Experimental design means creating a set of procedures to systematically test a hypothesis. A good experimental design requires a strong understanding of the system you are studying.

There are five key steps in designing an experiment:

- Consider your variables and how they are related

- Write a specific, testable hypothesis

- Design experimental treatments to manipulate your independent variable

- Assign subjects to groups, either between-subjects or within-subjects

- Plan how you will measure your dependent variable

For valid conclusions, you also need to select a representative sample and control any extraneous variables that might influence your results. This minimizes several types of research bias, particularly sampling bias, survivorship bias, and attrition bias as time passes. If random assignment of participants to control and treatment groups is impossible, unethical, or highly difficult, consider an observational study instead.

You should begin with a specific research question . We will work with two research question examples, one from health sciences and one from ecology:

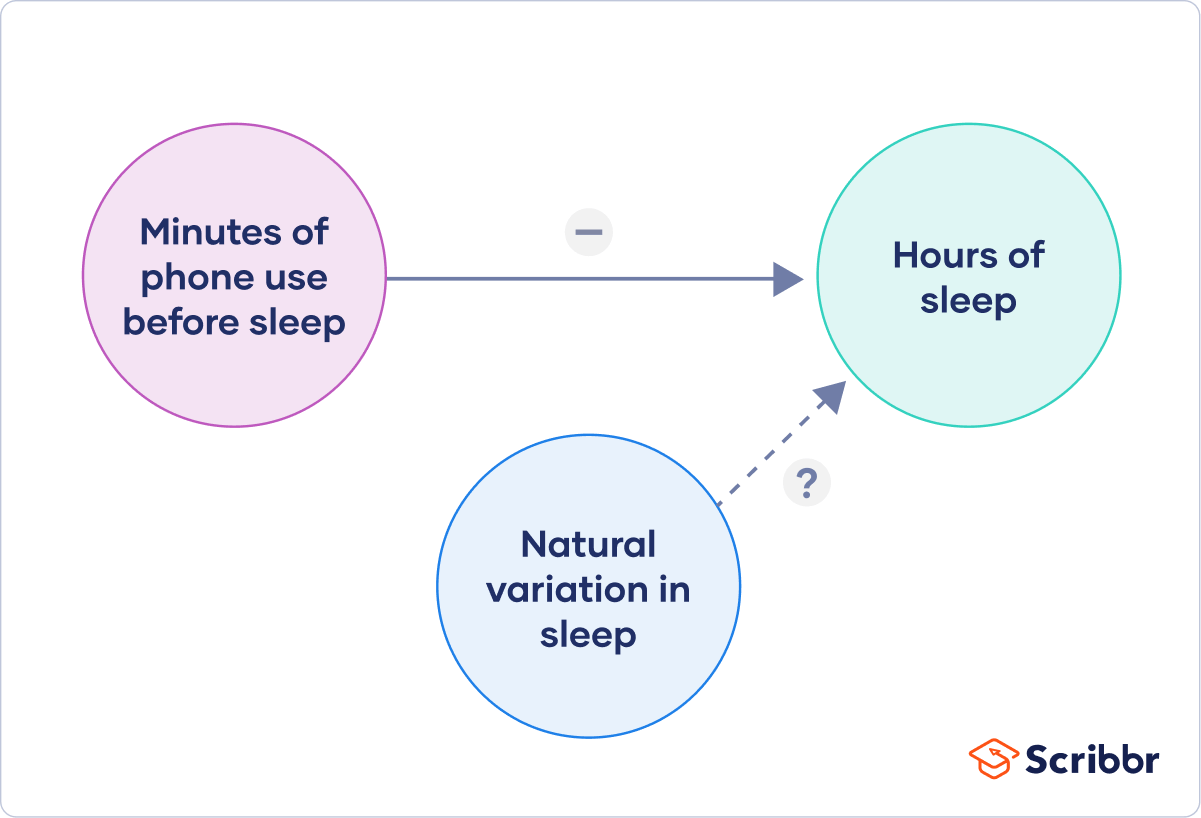

To translate your research question into an experimental hypothesis, you need to define the main variables and make predictions about how they are related.

Start by simply listing the independent and dependent variables.

Then you need to think about possible extraneous and confounding variables and consider how you might control them in your experiment.

Finally, you can put these variables together into a diagram. Use arrows to show the possible relationships between variables and include signs to show the expected direction of the relationships.

Here we predict that increasing temperature will increase soil respiration and decrease soil moisture, while decreasing soil moisture will lead to decreased soil respiration.
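The predicted relationships above can also be written down as a small signed graph, which makes it easy to check the expected direction of an indirect pathway. This is only a sketch: the variable names and the `path_sign` helper are illustrative, not part of any standard tool.

```python
# Signed edges of the causal diagram described above:
# +1 means "an increase in the cause increases the effect",
# -1 means "an increase in the cause decreases the effect".
EDGES = {
    ("temperature", "soil_respiration"): +1,
    ("temperature", "soil_moisture"): -1,
    # Decreasing moisture decreases respiration, so the relationship is positive.
    ("soil_moisture", "soil_respiration"): +1,
}


def path_sign(path):
    """Multiply the edge signs along a path of variable names."""
    sign = 1
    for cause, effect in zip(path, path[1:]):
        sign *= EDGES[(cause, effect)]
    return sign


direct = path_sign(["temperature", "soil_respiration"])
indirect = path_sign(["temperature", "soil_moisture", "soil_respiration"])
print("direct pathway sign:", direct)
print("indirect pathway sign (via soil moisture):", indirect)
```

In this sketch the direct and indirect pathways have opposite signs, which is one reason soil moisture needs to be measured or controlled in the warming experiment.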

Now that you have a strong conceptual understanding of the system you are studying, you should be able to write a specific, testable hypothesis that addresses your research question.

The next steps will describe how to design a controlled experiment. In a controlled experiment, you must be able to:

- Systematically and precisely manipulate the independent variable(s).

- Precisely measure the dependent variable(s).

- Control any potential confounding variables.

If your study system doesn’t match these criteria, there are other types of research you can use to answer your research question.

How you manipulate the independent variable can affect the experiment’s external validity – that is, the extent to which the results can be generalized and applied to the broader world.

First, you may need to decide how widely to vary your independent variable. In the soil-warming example, you could increase temperature:

- just slightly above the natural range for your study region.

- over a wider range of temperatures to mimic future warming.

- over an extreme range that is beyond any possible natural variation.

Second, you may need to choose how finely to vary your independent variable. Sometimes this choice is made for you by your experimental system, but often you will need to decide, and this will affect how much you can infer from your results. In the phone-use example, you could treat phone use as:

- a categorical variable: either as binary (yes/no) or as levels of a factor (no phone use, low phone use, high phone use).

- a continuous variable (minutes of phone use measured every night).
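To make the difference concrete, the sketch below collapses a continuous measurement (minutes of phone use) into the three factor levels named above. The 60-minute cut-off and the `phone_use_level` function are hypothetical choices for illustration; a real study would need to justify its own thresholds.

```python
def phone_use_level(minutes):
    """Collapse continuous nightly phone use (in minutes) into three factor levels.

    The 60-minute boundary between "low" and "high" is a hypothetical cut-off.
    """
    if minutes < 0:
        raise ValueError("minutes cannot be negative")
    if minutes == 0:
        return "no phone use"
    if minutes <= 60:
        return "low phone use"
    return "high phone use"


nightly_minutes = [0, 15, 45, 90, 120]
levels = [phone_use_level(m) for m in nightly_minutes]
print(levels)
```

Note that the categorical version discards information: 15 and 45 minutes end up in the same level, so it can no longer show how the outcome changes within that range.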

How you apply your experimental treatments to your test subjects is crucial for obtaining valid and reliable results.

First, you need to consider the study size: how many individuals will be included in the experiment? In general, the more subjects you include, the greater your experiment’s statistical power, which determines how much confidence you can have in your results.
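As a rough illustration of how study size and statistical power are linked, the sketch below uses a common normal-approximation formula for comparing two group means. It assumes a two-sided test, equal group sizes, and an effect expressed as a standardized mean difference; the function name `n_per_group` and the default values are illustrative, and dedicated power-analysis software will give slightly different numbers.

```python
import math
from statistics import NormalDist


def n_per_group(effect_size, alpha=0.05, power=0.80):
    """Approximate subjects needed per group to compare two means.

    effect_size is the standardized mean difference (difference in means
    divided by the standard deviation). Uses the normal approximation
    n = 2 * ((z_(1 - alpha/2) + z_power) / effect_size) ** 2.
    """
    standard_normal = NormalDist()
    z_alpha = standard_normal.inv_cdf(1 - alpha / 2)
    z_power = standard_normal.inv_cdf(power)
    return math.ceil(2 * ((z_alpha + z_power) / effect_size) ** 2)


for d in (0.2, 0.5, 0.8):
    print(f"effect size {d}: about {n_per_group(d)} subjects per group")
```

The smaller the effect you expect, the more subjects you need to detect it with the same power.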

Then you need to randomly assign your subjects to treatment groups. Each group receives a different level of the treatment (e.g. no phone use, low phone use, high phone use).

You should also include a control group, which receives no treatment. The control group tells us what would have happened to your test subjects without any experimental intervention.

When assigning your subjects to groups, there are two main choices you need to make:

- A completely randomized design vs a randomized block design.

- A between-subjects design vs a within-subjects design.

Randomization

An experiment can be completely randomized or randomized within blocks (aka strata):

- In a completely randomized design, every subject is assigned to a treatment group at random.

- In a randomized block design (aka stratified random design), subjects are first grouped according to a characteristic they share, and then randomly assigned to treatments within those groups.
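The two designs above can be sketched in a few lines. This is a minimal illustration, assuming 12 hypothetical subjects, an invented blocking characteristic (age group), and the three phone-use treatment levels; the function names are not from any library.

```python
import random
from collections import defaultdict


def completely_randomized(subjects, treatments, rng):
    """Shuffle all subjects, then deal them out to the treatments in turn."""
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    return {s: treatments[i % len(treatments)] for i, s in enumerate(shuffled)}


def randomized_block(subjects, block_of, treatments, rng):
    """Group subjects by a shared characteristic, then randomize within each block."""
    blocks = defaultdict(list)
    for s in subjects:
        blocks[block_of[s]].append(s)
    assignment = {}
    for members in blocks.values():
        assignment.update(completely_randomized(members, treatments, rng))
    return assignment


rng = random.Random(42)  # fixed seed so the assignment can be reproduced
subjects = [f"s{i}" for i in range(12)]
# Hypothetical blocking characteristic: the first six subjects are "young".
age_group = {s: ("young" if i < 6 else "old") for i, s in enumerate(subjects)}
treatments = ["no phone use", "low phone use", "high phone use"]

crd = completely_randomized(subjects, treatments, rng)
rbd = randomized_block(subjects, age_group, treatments, rng)
```

In the blocked version every age group is guaranteed to contribute the same number of subjects to each treatment, whereas the completely randomized version only balances the overall group sizes.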

Sometimes randomization isn’t practical or ethical, so researchers create partially-random or even non-random designs. An experimental design where treatments aren’t randomly assigned is called a quasi-experimental design.

Between-subjects vs. within-subjects

In a between-subjects design (also known as an independent measures design or classic ANOVA design), individuals receive only one of the possible levels of an experimental treatment.

In medical or social research, you might also use matched pairs within your between-subjects design to make sure that each treatment group contains the same variety of test subjects in the same proportions.

In a within-subjects design (also known as a repeated measures design), every individual receives each of the experimental treatments consecutively, and their responses to each treatment are measured.

Within-subjects or repeated measures can also refer to an experimental design where an effect emerges over time, and individual responses are measured over time in order to measure this effect as it emerges.

Counterbalancing (randomizing or reversing the order of treatments among subjects) is often used in within-subjects designs to ensure that the order of treatment application doesn’t influence the results of the experiment.
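One simple way to counterbalance is to cycle through every possible treatment order so that each order is used equally often. The sketch below assumes three hypothetical treatments labelled A, B, and C; with many treatments the number of orders grows quickly, and researchers often use a subset of orders instead.

```python
from itertools import permutations


def counterbalanced_orders(treatments, n_subjects):
    """Assign treatment orders by cycling through all permutations.

    Each order is used equally often when n_subjects is a multiple of
    the number of possible orders.
    """
    orders = list(permutations(treatments))
    return [orders[i % len(orders)] for i in range(n_subjects)]


schedule = counterbalanced_orders(["A", "B", "C"], 12)
for subject_number, order in enumerate(schedule, start=1):
    print(subject_number, " -> ".join(order))
```

In practice you would also randomize which subject receives which row of the schedule, so that the order a person gets is not tied to when they signed up.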

Finally, you need to decide how you’ll collect data on your dependent variable outcomes. You should aim for reliable and valid measurements that minimize research bias or error.

Some variables, like temperature, can be objectively measured with scientific instruments. Others may need to be operationalized to turn them into measurable observations. For example, to measure hours of sleep you could:

- Ask participants to record what time they go to sleep and get up each day.

- Ask participants to wear a sleep tracker.

How precisely you measure your dependent variable also affects the kinds of statistical analysis you can use on your data.

Experiments are always context-dependent, and a good experimental design will take into account all of the unique considerations of your study system to produce information that is both valid and relevant to your research question.

Experimental design means planning a set of procedures to investigate a relationship between variables. To design a controlled experiment, you need:

- A testable hypothesis

- At least one independent variable that can be precisely manipulated

- At least one dependent variable that can be precisely measured

When designing the experiment, you decide:

- How you will manipulate the variable(s)

- How you will control for any potential confounding variables

- How many subjects or samples will be included in the study

- How subjects will be assigned to treatment levels

Experimental design is essential to the internal and external validity of your experiment.

The key difference between observational studies and experimental designs is that a well-done observational study does not influence the responses of participants, while experiments do have some sort of treatment condition applied to at least some participants by random assignment.

A confounding variable, also called a confounder or confounding factor, is a third variable in a study examining a potential cause-and-effect relationship.

A confounding variable is related to both the supposed cause and the supposed effect of the study. It can be difficult to separate the true effect of the independent variable from the effect of the confounding variable.

In your research design, it’s important to identify potential confounding variables and plan how you will reduce their impact.

In a between-subjects design, every participant experiences only one condition, and researchers assess group differences between participants in various conditions.

In a within-subjects design, each participant experiences all conditions, and researchers test the same participants repeatedly for differences between conditions.

The word “between” means that you’re comparing different conditions between groups, while the word “within” means you’re comparing different conditions within the same group.

An experimental group, also known as a treatment group, receives the treatment whose effect researchers wish to study, whereas a control group does not. They should be identical in all other ways.

Bevans, R. (2023, June 21). Guide to Experimental Design | Overview, 5 steps & Examples. Scribbr. Retrieved April 2, 2024, from https://www.scribbr.com/methodology/experimental-design/

Experimental Method In Psychology

Saul Mcleod, PhD

Editor-in-Chief for Simply Psychology

BSc (Hons) Psychology, MRes, PhD, University of Manchester

Saul Mcleod, PhD., is a qualified psychology teacher with over 18 years of experience in further and higher education. He has been published in peer-reviewed journals, including the Journal of Clinical Psychology.

Olivia Guy-Evans, MSc

Associate Editor for Simply Psychology

BSc (Hons) Psychology, MSc Psychology of Education

Olivia Guy-Evans is a writer and associate editor for Simply Psychology. She has previously worked in healthcare and educational sectors.

The experimental method involves the manipulation of variables to establish cause-and-effect relationships. The key features are controlled methods and the random allocation of participants into control and experimental groups.

What is an Experiment?

An experiment is an investigation in which a hypothesis is scientifically tested. An independent variable (the cause) is manipulated in an experiment, and the dependent variable (the effect) is measured; any extraneous variables are controlled.

An advantage is that experiments should be objective. The researcher’s views and opinions should not affect a study’s results. This is good as it makes the data more valid and less biased.

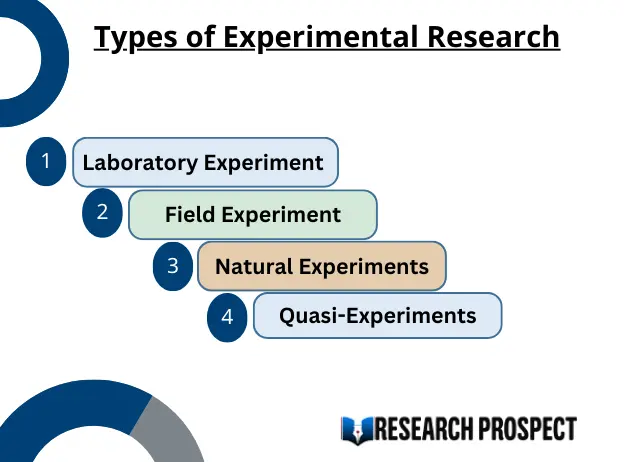

There are three types of experiments you need to know:

1. Lab Experiment

A laboratory experiment in psychology is a research method in which the experimenter manipulates one or more independent variables and measures the effects on the dependent variable under controlled conditions.

A laboratory experiment is conducted under highly controlled conditions (not necessarily a laboratory) where accurate measurements are possible.

The researcher uses a standardized procedure to determine where the experiment will take place, at what time, with which participants, and in what circumstances.

Participants are randomly allocated to each independent variable group.

Examples are Milgram’s experiment on obedience and Loftus and Palmer’s car crash study.

- Strength: It is easier to replicate (i.e., copy) a laboratory experiment. This is because a standardized procedure is used.

- Strength: They allow for precise control of extraneous and independent variables. This allows a cause-and-effect relationship to be established.

- Limitation: The artificiality of the setting may produce unnatural behavior that does not reflect real life, i.e., low ecological validity. This means it would not be possible to generalize the findings to a real-life setting.

- Limitation: Demand characteristics or experimenter effects may bias the results and become confounding variables.

2. Field Experiment

A field experiment is a research method in psychology that takes place in a natural, real-world setting. It is similar to a laboratory experiment in that the experimenter manipulates one or more independent variables and measures the effects on the dependent variable.

However, in a field experiment, the participants are unaware they are being studied, and the experimenter has less control over the extraneous variables.

Field experiments are often used to study social phenomena, such as altruism, obedience, and persuasion. They are also used to test the effectiveness of interventions in real-world settings, such as educational programs and public health campaigns.

An example is Hofling’s hospital study on obedience.

- Strength: Behavior in a field experiment is more likely to reflect real life because of its natural setting, i.e., higher ecological validity than a lab experiment.

- Strength: Demand characteristics are less likely to affect the results, as participants may not know they are being studied. This occurs when the study is covert.

- Limitation: There is less control over extraneous variables that might bias the results. This makes it difficult for another researcher to replicate the study in exactly the same way.

3. Natural Experiment

A natural experiment in psychology is a research method in which the experimenter observes the effects of a naturally occurring event or situation on the dependent variable without manipulating any variables.

Natural experiments are conducted in the everyday (i.e., real-life) environment of the participants, but here, the experimenter has no control over the independent variable as it occurs naturally in real life.

Natural experiments are often used to study psychological phenomena that would be difficult or unethical to study in a laboratory setting, such as the effects of natural disasters, policy changes, or social movements.

For example, Hodges and Tizard’s attachment research (1989) compared the long-term development of children who have been adopted, fostered, or returned to their mothers with a control group of children who had spent all their lives in their biological families.

Here is a fictional example of a natural experiment in psychology:

Researchers might compare academic achievement rates among students born before and after a major policy change that increased funding for education.

In this case, the independent variable is the timing of the policy change, and the dependent variable is academic achievement. The researchers would not be able to manipulate the independent variable, but they could observe its effects on the dependent variable.

- Strength: Behavior in a natural experiment is more likely to reflect real life because of its natural setting, i.e., very high ecological validity.

- Strength: Demand characteristics are less likely to affect the results, as participants may not know they are being studied.

- Strength: It can be used in situations in which it would be ethically unacceptable to manipulate the independent variable, e.g., researching stress.

- Limitation: They may be more expensive and time-consuming than lab experiments.

- Limitation: There is no control over extraneous variables that might bias the results. This makes it difficult for another researcher to replicate the study in exactly the same way.

Key Terminology

Ecological validity.

The degree to which an investigation represents real-life experiences.

Experimenter effects

These are the ways that the experimenter can accidentally influence the participant through their appearance or behavior.

Demand characteristics

The clues in an experiment that lead the participants to think they know what the researcher is looking for (e.g., the experimenter’s body language).

Independent variable (IV)

The variable the experimenter manipulates (i.e., changes), which is assumed to have a direct effect on the dependent variable.

Dependent variable (DV)

Variable the experimenter measures. This is the outcome (i.e., the result) of a study.

Extraneous variables (EV)

All variables which are not independent variables but could affect the results (DV) of the experiment. EVs should be controlled where possible.

Confounding variables

Variable(s) that have affected the results (DV), apart from the IV. A confounding variable could be an extraneous variable that has not been controlled.

Random Allocation

Randomly allocating participants to independent variable conditions means that all participants should have an equal chance of participating in each condition.

The principle of random allocation is to avoid bias in how the experiment is carried out and limit the effects of participant variables.

Order effects

Changes in participants’ performance due to their repeating the same or similar test more than once. Examples of order effects include:

(i) practice effect: an improvement in performance on a task due to repetition, for example, because of familiarity with the task;

(ii) fatigue effect: a decrease in performance of a task due to repetition, for example, because of boredom or tiredness.

10 Experimental research

Experimental research, often considered to be the ‘gold standard’ in research designs, is one of the most rigorous of all research designs. In this design, one or more independent variables are manipulated by the researcher (as treatments), subjects are randomly assigned to different treatment levels (random assignment), and the results of the treatments on outcomes (dependent variables) are observed. The unique strength of experimental research is its internal validity (causality) due to its ability to link cause and effect through treatment manipulation, while controlling for the spurious effect of extraneous variables.

Experimental research is best suited for explanatory research, rather than for descriptive or exploratory research, where the goal of the study is to examine cause-effect relationships. It also works well for research that involves a relatively limited and well-defined set of independent variables that can either be manipulated or controlled. Experimental research can be conducted in laboratory or field settings. Laboratory experiments, conducted in laboratory (artificial) settings, tend to be high in internal validity, but this comes at the cost of low external validity (generalisability), because the artificial (laboratory) setting in which the study is conducted may not reflect the real world. Field experiments are conducted in field settings such as in a real organisation, and are high in both internal and external validity. But such experiments are relatively rare, because of the difficulties associated with manipulating treatments and controlling for extraneous effects in a field setting.

Experimental research can be grouped into two broad categories: true experimental designs and quasi-experimental designs. Both designs require treatment manipulation, but while true experiments also require random assignment, quasi-experiments do not. Sometimes, we also refer to non-experimental research, which is not really a research design, but an all-inclusive term that includes all types of research that do not employ treatment manipulation or random assignment, such as survey research, observational research, and correlational studies.

Basic concepts

Treatment and control groups. In experimental research, some subjects are administered one or more experimental stimuli, called a treatment (the treatment group), while other subjects are not given such a stimulus (the control group). The treatment may be considered successful if subjects in the treatment group rate more favourably on outcome variables than control group subjects. Multiple levels of experimental stimulus may be administered, in which case, there may be more than one treatment group. For example, in order to test the effects of a new drug intended to treat a certain medical condition like dementia, if a sample of dementia patients is randomly divided into three groups, with the first group receiving a high dosage of the drug, the second group receiving a low dosage, and the third group receiving a placebo such as a sugar pill (control group), then the first two groups are experimental groups and the third group is a control group. After administering the drug for a period of time, if the condition of the experimental group subjects improved significantly more than the control group subjects, we can say that the drug is effective. We can also compare the conditions of the high and low dosage experimental groups to determine if the high dose is more effective than the low dose.

Treatment manipulation. Treatments are the unique feature of experimental research that sets this design apart from all other research methods. Treatment manipulation helps control for the ‘cause’ in cause-effect relationships. Naturally, the validity of experimental research depends on how well the treatment was manipulated. Treatment manipulation must be checked using pretests and pilot tests prior to the experimental study. Any measurements conducted before the treatment is administered are called pretest measures, while those conducted after the treatment are posttest measures.

Random selection and assignment. Random selection is the process of randomly drawing a sample from a population or a sampling frame. This approach is typically employed in survey research, and ensures that each unit in the population has a positive chance of being selected into the sample. Random assignment, however, is a process of randomly assigning subjects to experimental or control groups. This is a standard practice in true experimental research to ensure that treatment groups are similar (equivalent) to each other and to the control group prior to treatment administration. Random selection is related to sampling, and is therefore more closely related to the external validity (generalisability) of findings. However, random assignment is related to design, and is therefore most related to internal validity. It is possible to have both random selection and random assignment in well-designed experimental research, but quasi-experimental research involves neither random selection nor random assignment.

Threats to internal validity. Although experimental designs are considered more rigorous than other research methods in terms of the internal validity of their inferences (by virtue of their ability to control causes through treatment manipulation), they are not immune to internal validity threats. Some of these threats to internal validity are described below, within the context of a study of the impact of a special remedial math tutoring program for improving the math abilities of high school students.

History threat is the possibility that the observed effects (dependent variables) are caused by extraneous or historical events rather than by the experimental treatment. For instance, students’ post-remedial math score improvement may have been caused by their preparation for a math exam at their school, rather than the remedial math program.

Maturation threat refers to the possibility that observed effects are caused by natural maturation of subjects (e.g., a general improvement in their intellectual ability to understand complex concepts) rather than the experimental treatment.

Testing threat is a threat in pre-post designs where subjects’ posttest responses are conditioned by their pretest responses. For instance, if students remember their answers from the pretest evaluation, they may tend to repeat them in the posttest exam. Not conducting a pretest can help avoid this threat.

Instrumentation threat, which also occurs in pre-post designs, refers to the possibility that the difference between pretest and posttest scores is not due to the remedial math program, but due to changes in the administered test, such as the posttest having a higher or lower degree of difficulty than the pretest.

Mortality threat refers to the possibility that subjects may be dropping out of the study at differential rates between the treatment and control groups due to a systematic reason, for example if the dropouts were mostly students who scored low on the pretest. If the low-performing students drop out, the results of the posttest will be artificially inflated by the preponderance of high-performing students.

Regression threat, also called regression to the mean, refers to the statistical tendency of a group’s overall performance to regress toward the mean during a posttest rather than in the anticipated direction. For instance, if subjects scored high on a pretest, they will have a tendency to score lower on the posttest (closer to the mean) because their high scores (away from the mean) during the pretest were possibly a statistical aberration. This problem tends to be more prevalent in non-random samples and when the two measures are imperfectly correlated.
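Regression to the mean is easy to reproduce in a small simulation. The sketch below assumes a deliberately simple, hypothetical model in which each observed score is a stable ability plus independent test-day noise, and no treatment is applied at all; the numbers are invented.

```python
import random
from statistics import mean

rng = random.Random(0)  # fixed seed for reproducibility
N = 10_000

# Hypothetical model: observed score = stable ability + independent noise.
ability = [rng.gauss(50, 10) for _ in range(N)]
pretest = [a + rng.gauss(0, 10) for a in ability]
posttest = [a + rng.gauss(0, 10) for a in ability]

# Select the top 10% of pretest scorers. Nobody receives any treatment.
cutoff = sorted(pretest)[int(0.9 * N)]
top = [i for i in range(N) if pretest[i] >= cutoff]

overall = mean(pretest)
top_pre = mean(pretest[i] for i in top)
top_post = mean(posttest[i] for i in top)

print(f"overall pretest mean:    {overall:.1f}")
print(f"top group pretest mean:  {top_pre:.1f}")
print(f"top group posttest mean: {top_post:.1f}")
```

The selected group scores lower on the posttest than on the pretest even though nothing was done to them, which is why a study that recruits only extreme scorers can mistake this drift for a treatment effect.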

Two-group experimental designs

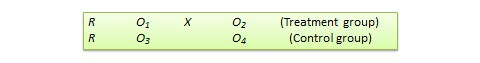

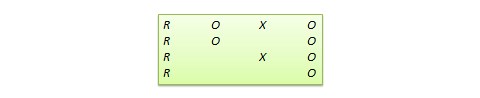

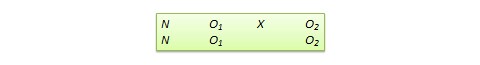

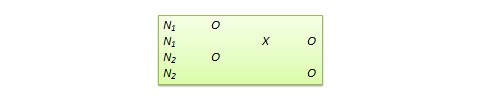

Pretest-posttest control group design. In this design, subjects are randomly assigned to treatment and control groups and given an initial (pretest) measurement of the dependent variables of interest; the treatment group is then administered a treatment (representing the independent variable of interest), and the dependent variables are measured again (posttest). The notation of this design is shown in Figure 10.1.

Statistical analysis of this design involves a simple analysis of variance (ANOVA) between the treatment and control groups. The pretest-posttest design handles several threats to internal validity, such as maturation, testing, and regression, since these threats can be expected to influence both treatment and control groups in a similar (random) manner. The selection threat is controlled via random assignment. However, additional threats to internal validity may exist. For instance, mortality can be a problem if there are differential dropout rates between the two groups, and the pretest measurement may bias the posttest measurement—especially if the pretest introduces unusual topics or content.

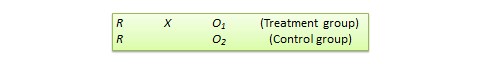

Posttest-only control group design. This design is a simpler version of the pretest-posttest design where pretest measurements are omitted. The design notation is shown in Figure 10.2.

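The treatment effect is measured simply as the difference in the posttest scores between the two groups:

$E = O_1 - O_2$

where $O_1$ and $O_2$ are the posttest observations of the treatment and control groups, respectively.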

The appropriate statistical analysis of this design is also a two-group analysis of variance (ANOVA). The simplicity of this design makes it more attractive than the pretest-posttest design in terms of internal validity. This design controls for maturation, testing, regression, selection, and pretest-posttest interaction, though the mortality threat may continue to exist.
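As a rough illustration of the analysis described above, the sketch below computes the difference in posttest means and the one-way ANOVA F statistic for two groups by hand. The scores are made up for illustration, and the function name is hypothetical; in practice you would use a statistics package, which also reports the p-value.

```python
from statistics import mean


def posttest_only_analysis(treatment, control):
    """Return (effect, F) for a two-group posttest-only design.

    effect is the difference in posttest means; F is the one-way ANOVA
    statistic (between-group mean square / within-group mean square).
    """
    groups = [treatment, control]
    grand_mean = mean(treatment + control)
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    df_between = len(groups) - 1
    df_within = len(treatment) + len(control) - len(groups)
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return mean(treatment) - mean(control), f_stat


# Made-up posttest scores, for illustration only.
treatment_scores = [78, 82, 85, 75, 80]
control_scores = [70, 74, 69, 73, 74]
effect, f_stat = posttest_only_analysis(treatment_scores, control_scores)
print(f"difference in posttest means: {effect}")
print(f"F statistic: {f_stat:.2f}")
```

With only two groups, this F statistic is the square of the t statistic from an equal-variance two-sample t-test, so the two analyses lead to the same conclusion.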

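In a covariance design, the pretest measure is not a measurement of the dependent variable but rather a covariate, so the treatment effect is measured as the difference in the posttest scores between the treatment and control groups:

$E = O_1 - O_2$

where $O_1$ and $O_2$ are the posttest observations of the treatment and control groups, respectively.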

Due to the presence of covariates, the right statistical analysis of this design is a two-group analysis of covariance (ANCOVA). This design has all the advantages of the posttest-only design, but with added internal validity due to the controlling of covariates. Covariance designs can also be extended to the pretest-posttest control group design.
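To show what controlling for a covariate does to the estimated effect, here is a minimal sketch of the ANCOVA point estimate with a single covariate and a common within-group slope. The data are invented so that the true effect is known, and the function is illustrative only; a full ANCOVA would also report standard errors and test the equal-slopes assumption.

```python
from statistics import mean


def ancova_adjusted_effect(treatment, control):
    """Each argument is a list of (covariate, posttest) pairs.

    Returns the treatment-minus-control posttest difference after
    adjusting for the covariate using the pooled within-group slope.
    """
    def centered_sums(pairs):
        mx = mean(x for x, _ in pairs)
        my = mean(y for _, y in pairs)
        sxy = sum((x - mx) * (y - my) for x, y in pairs)
        sxx = sum((x - mx) ** 2 for x, _ in pairs)
        return mx, my, sxy, sxx

    tx, ty, t_sxy, t_sxx = centered_sums(treatment)
    cx, cy, c_sxy, c_sxx = centered_sums(control)
    slope = (t_sxy + c_sxy) / (t_sxx + c_sxx)  # pooled within-group slope
    return (ty - cy) - slope * (tx - cx)


# Invented data: posttest = 2 * covariate (+ 10 if treated), so the true effect is 10.
treated = [(1, 12), (2, 14), (3, 16)]
untreated = [(3, 6), (4, 8), (5, 10)]

raw = mean(y for _, y in treated) - mean(y for _, y in untreated)
adjusted = ancova_adjusted_effect(treated, untreated)
print(f"raw difference in posttest means: {raw}")
print(f"covariate-adjusted difference:    {adjusted}")
```

Here the raw difference understates the effect because the control group happens to start with higher covariate values; the adjustment recovers the effect built into the invented data.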

Factorial designs

Two-group designs are inadequate if your research requires manipulation of two or more independent variables (treatments). In such cases, you would need designs with four or more groups. Such designs, quite popular in experimental research, are commonly called factorial designs. Each independent variable in this design is called a factor, and each subdivision of a factor is called a level. Factorial designs enable the researcher to examine not only the individual effect of each treatment on the dependent variables (called main effects), but also their joint effect (called interaction effects).

In a factorial design, a main effect is said to exist if the dependent variable shows a significant difference between multiple levels of one factor, at all levels of other factors. No change in the dependent variable across factor levels is the null case (baseline), from which main effects are evaluated. In the above example, you may see a main effect of instructional type, instructional time, or both on learning outcomes. An interaction effect exists when the effect of differences in one factor depends upon the level of a second factor. In our example, if the effect of instructional type on learning outcomes is greater for three hours/week of instructional time than for one and a half hours/week, then we can say that there is an interaction effect between instructional type and instructional time on learning outcomes. Note that the presence of interaction effects dominates and makes main effects irrelevant, and it is not meaningful to interpret main effects if interaction effects are significant.
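Main and interaction effects in a 2 × 2 design can be read directly off the four cell means. The sketch below uses invented learning-outcome means; the two instructional types ("in-class" and "online") are assumed labels for illustration, while the two time levels follow the example in the text. It computes descriptive effects only and does not test significance.

```python
from statistics import mean

# Hypothetical mean learning outcomes for each (instructional type, hours/week) cell.
cell_means = {
    ("in-class", 1.5): 60.0,
    ("in-class", 3.0): 65.0,
    ("online", 1.5): 62.0,
    ("online", 3.0): 75.0,
}

# Main effect of type: average over time levels, then compare the two types.
main_type = (mean([cell_means[("online", 1.5)], cell_means[("online", 3.0)]])
             - mean([cell_means[("in-class", 1.5)], cell_means[("in-class", 3.0)]]))

# Main effect of time: average over types, then compare the two time levels.
main_time = (mean([cell_means[("in-class", 3.0)], cell_means[("online", 3.0)]])
             - mean([cell_means[("in-class", 1.5)], cell_means[("online", 1.5)]]))

# Interaction: does the effect of type differ between the two time levels?
type_effect_at_3h = cell_means[("online", 3.0)] - cell_means[("in-class", 3.0)]
type_effect_at_1_5h = cell_means[("online", 1.5)] - cell_means[("in-class", 1.5)]
interaction = type_effect_at_3h - type_effect_at_1_5h

print("main effect of instructional type:", main_type)
print("main effect of instructional time:", main_time)
print("interaction (difference of differences):", interaction)
```

A non-zero interaction means the effect of instructional type is not the same at every level of instructional time, so the averaged main effect describes neither time level well.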

Hybrid experimental designs

Hybrid designs are those that are formed by combining features of more established designs. Three such hybrid designs are randomised block design, Solomon four-group design, and switched replications design.

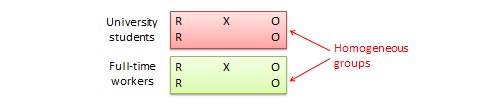

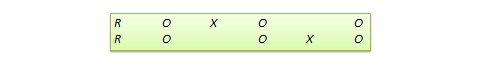

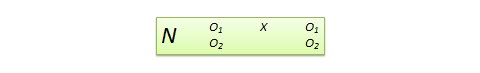

Randomised block design. This is a variation of the posttest-only or pretest-posttest control group design where the subject population can be grouped into relatively homogeneous subgroups (called blocks) within which the experiment is replicated. For instance, if you want to replicate the same posttest-only design among university students and full-time working professionals (two homogeneous blocks), subjects in both blocks are randomly split between the treatment group (receiving the same treatment) and the control group (see Figure 10.5). The purpose of this design is to reduce the ‘noise’ or variance in data that may be attributable to differences between the blocks so that the actual effect of interest can be detected more accurately.

Solomon four-group design . In this design, the sample is divided into two treatment groups and two control groups. One treatment group and one control group receive the pretest, and the other two groups do not. This design represents a combination of posttest-only and pretest-posttest control group design, and is intended to test for the potential biasing effect of pretest measurement on posttest measures that tends to occur in pretest-posttest designs, but not in posttest-only designs. The design notation is shown in Figure 10.6.
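One way to read the results of a Solomon four-group design is to compare the treatment effect among pretested groups with the effect among groups that were not pretested. The sketch below does this with invented posttest means; the numbers and variable names are illustrative assumptions only.

```python
# Hypothetical posttest means for the four Solomon groups (values invented).
posttest_means = {
    ("pretested", "treatment"): 78.0,
    ("pretested", "control"): 70.0,
    ("not pretested", "treatment"): 75.0,
    ("not pretested", "control"): 69.0,
}

# Treatment effect estimated separately with and without a pretest.
effect_with_pretest = (
    posttest_means[("pretested", "treatment")]
    - posttest_means[("pretested", "control")]
)
effect_without_pretest = (
    posttest_means[("not pretested", "treatment")]
    - posttest_means[("not pretested", "control")]
)

# If taking the pretest changes how subjects respond to the treatment,
# the two estimates differ; this gap is the potential pretest bias.
pretest_bias = effect_with_pretest - effect_without_pretest

print(effect_with_pretest, effect_without_pretest, pretest_bias)
```

A gap near zero would suggest that the pretest did not noticeably bias the posttest measures; whether a non-zero gap is meaningful still requires a significance test.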

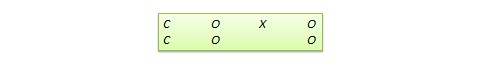

Switched replication design . This is a two-group design implemented in two phases with three waves of measurement. The treatment group in the first phase serves as the control group in the second phase, and the control group in the first phase becomes the treatment group in the second phase, as illustrated in Figure 10.7. In other words, the original design is repeated or replicated temporally with treatment/control roles switched between the two groups. By the end of the study, all participants will have received the treatment either during the first or the second phase. This design is most feasible in organisational contexts where organisational programs (e.g., employee training) are implemented in a phased manner or are repeated at regular intervals.

Quasi-experimental designs

Quasi-experimental designs are almost identical to true experimental designs, but lack one key ingredient: random assignment. For instance, one entire class section or one organisation is used as the treatment group, while another section of the same class or a different organisation in the same industry is used as the control group. This lack of random assignment potentially results in groups that are non-equivalent, such as one group possessing greater mastery of certain content than the other group, say by virtue of having a better teacher in a previous semester, which introduces the possibility of selection bias. Quasi-experimental designs are therefore inferior to true experimental designs in internal validity due to the presence of a variety of selection-related threats such as selection-maturation threat (the treatment and control groups maturing at different rates), selection-history threat (the treatment and control groups being differentially impacted by extraneous or historical events), selection-regression threat (the treatment and control groups regressing toward the mean between pretest and posttest at different rates), selection-instrumentation threat (the treatment and control groups responding differently to the measurement), selection-testing (the treatment and control groups responding differently to the pretest), and selection-mortality (the treatment and control groups demonstrating differential dropout rates). Given these selection threats, it is generally preferable to avoid quasi-experimental designs to the greatest extent possible.

In addition, there are quite a few unique non-equivalent designs without corresponding true experimental design cousins. Some of the more useful of these designs are discussed next.

Regression discontinuity (RD) design. This is a non-equivalent pretest-posttest design where subjects are assigned to the treatment or control group based on a cut-off score on a preprogram measure. For instance, patients who are severely ill may be assigned to a treatment group to test the efficacy of a new drug or treatment protocol and those who are mildly ill are assigned to the control group. In another example, students who are lagging behind on standardised test scores may be selected for a remedial curriculum program intended to improve their performance, while those who score high on such tests are not selected for the remedial program.

Because of the use of a cut-off score, it is possible that the observed results may be a function of the cut-off score rather than the treatment, which introduces a new threat to internal validity. However, using the cut-off score also ensures that limited or costly resources are distributed to people who need them the most, rather than randomly across a population, while simultaneously allowing a quasi-experimental treatment. The control group scores in the RD design do not serve as a benchmark for comparing treatment group scores, given the systematic non-equivalence between the two groups. Rather, if there is no discontinuity between pretest and posttest scores in the control group, but such a discontinuity persists in the treatment group, then this discontinuity is viewed as evidence of the treatment effect.
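One common way to look for such a discontinuity is to fit a separate regression line on each side of the cut-off and measure the jump between the two lines at the cut-off. The sketch below does this on simulated data; the cut-off, the simulated effect size, the noise level, and the helper `fit_line` are all assumptions chosen for illustration, not values from the text.

```python
import random


def fit_line(xs, ys):
    """Ordinary least squares fit of y = intercept + slope * x."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return mean_y - slope * mean_x, slope


rng = random.Random(42)
CUTOFF = 50.0       # hypothetical cut-off on the preprogram measure
TRUE_EFFECT = 10.0  # simulated treatment effect, chosen for illustration

# Simulate pretest scores; subjects below the cut-off receive the program.
pretest = [rng.uniform(20, 80) for _ in range(400)]
treated = [score < CUTOFF for score in pretest]
posttest = [
    5.0 + 0.9 * score + (TRUE_EFFECT if t else 0.0) + rng.gauss(0, 2)
    for score, t in zip(pretest, treated)
]

# Fit one regression line on each side of the cut-off.
below = [(x, y) for x, y, t in zip(pretest, posttest, treated) if t]
above = [(x, y) for x, y, t in zip(pretest, posttest, treated) if not t]
a_below, b_below = fit_line(*zip(*below))
a_above, b_above = fit_line(*zip(*above))

# The estimated effect is the vertical gap between the lines at the cut-off.
estimated_jump = (a_below + b_below * CUTOFF) - (a_above + b_above * CUTOFF)
print(round(estimated_jump, 2))
```

The estimate only speaks to subjects near the cut-off, and it assumes the pretest-posttest relationship is linear on both sides, which real data may not satisfy.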

Proxy pretest design . This design, shown in Figure 10.11, looks very similar to the standard NEGD (pretest-posttest) design, with one critical difference: the pretest score is collected after the treatment is administered. A typical application of this design is when a researcher is brought in to test the efficacy of a program (e.g., an educational program) after the program has already started and pretest data is not available. Under such circumstances, the best option for the researcher is often to use a different prerecorded measure, such as students’ grade point average before the start of the program, as a proxy for pretest data. A variation of the proxy pretest design is to use subjects’ posttest recollection of pretest data, which may be subject to recall bias, but nevertheless may provide a measure of perceived gain or change in the dependent variable.

Separate pretest-posttest samples design . This design is useful if it is not possible to collect pretest and posttest data from the same subjects for some reason. As shown in Figure 10.12, there are four groups in this design, but two groups come from a single non-equivalent group, while the other two groups come from a different non-equivalent group. For instance, say you want to test customer satisfaction with a new online service that is implemented in one city but not in another. In this case, customers in the first city serve as the treatment group and those in the second city constitute the control group. If it is not possible to obtain pretest and posttest measures from the same customers, you can measure customer satisfaction at one point in time, implement the new service program, and measure customer satisfaction (with a different set of customers) after the program is implemented. Customer satisfaction is also measured in the control group at the same times as in the treatment group, but without the new program implementation. The design is not particularly strong, because you cannot examine the changes in any specific customer’s satisfaction score before and after the implementation, but you can only examine average customer satisfaction scores. Despite the lower internal validity, this design may still be a useful way of collecting quasi-experimental data when pretest and posttest data is not available from the same subjects.
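Because different customers are measured before and after, the comparison in this design has to be made on group averages. A minimal sketch of that comparison, using invented satisfaction scores on an assumed 1 to 10 scale, might look like this:

```python
from statistics import mean

# Hypothetical satisfaction scores; each list is a different sample of
# customers, so no individual appears both before and after.
treatment_city_before = [6, 5, 7, 6, 6]
treatment_city_after = [8, 7, 8, 9, 8]
control_city_before = [6, 6, 5, 7, 6]
control_city_after = [6, 7, 6, 6, 5]

# Change in average satisfaction within each city.
change_treatment = mean(treatment_city_after) - mean(treatment_city_before)
change_control = mean(control_city_after) - mean(control_city_before)

# The change in the control city stands in for what would have happened
# without the new service; the remainder is the estimated program effect.
estimated_effect = change_treatment - change_control
print(change_treatment, change_control, estimated_effect)
```

This only compares averages, which is exactly the limitation noted above: nothing can be said about how any specific customer's satisfaction changed.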

An interesting variation of the NEDV design is a pattern-matching NEDV design , which employs multiple outcome variables and a theory that explains how much each variable will be affected by the treatment. The researcher can then examine if the theoretical prediction is matched in actual observations. This pattern-matching technique—based on the degree of correspondence between theoretical and observed patterns—is a powerful way of alleviating internal validity concerns in the original NEDV design.

Perils of experimental research

Experimental research is one of the most difficult of research designs, and should not be taken lightly. This type of research is often beset with a multitude of methodological problems. First, though experimental research requires theories for framing hypotheses for testing, much of current experimental research is atheoretical. Without theories, the hypotheses being tested tend to be ad hoc, possibly illogical, and meaningless. Second, many of the measurement instruments used in experimental research are not tested for reliability and validity, and are incomparable across studies. Consequently, results generated using such instruments are also incomparable. Third, often experimental research uses inappropriate research designs, such as irrelevant dependent variables, no interaction effects, no experimental controls, and non-equivalent stimulus across treatment groups. Findings from such studies tend to lack internal validity and are highly suspect. Fourth, the treatments (tasks) used in experimental research may be diverse, incomparable, and inconsistent across studies, and sometimes inappropriate for the subject population. For instance, undergraduate student subjects are often asked to pretend that they are marketing managers and asked to perform a complex budget allocation task in which they have no experience or expertise. The use of such inappropriate tasks introduces new threats to internal validity (i.e., subject’s performance may be an artefact of the content or difficulty of the task setting), generates findings that are non-interpretable and meaningless, and makes integration of findings across studies impossible.

The design of proper experimental treatments is a very important task in experimental design, because the treatment is the raison d’etre of the experimental method, and must never be rushed or neglected. To design an adequate and appropriate task, researchers should use prevalidated tasks if available, conduct treatment manipulation checks to check for the adequacy of such tasks (by debriefing subjects after performing the assigned task), conduct pilot tests (repeatedly, if necessary), and if in doubt, use tasks that are simple and familiar for the respondent sample rather than tasks that are complex or unfamiliar.

In summary, this chapter introduced key concepts in the experimental design research method and introduced a variety of true experimental and quasi-experimental designs. Although these designs vary widely in internal validity, designs with less internal validity should not be overlooked and may sometimes be useful under specific circumstances and empirical contingencies.

Social Science Research: Principles, Methods and Practices (Revised edition) Copyright © 2019 by Anol Bhattacherjee is licensed under a Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International License , except where otherwise noted.


How the Experimental Method Works in Psychology

Kendra Cherry, MS, is a psychosocial rehabilitation specialist, psychology educator, and author of the "Everything Psychology Book."


Amanda Tust is a fact-checker, researcher, and writer with a Master of Science in Journalism from Northwestern University's Medill School of Journalism.


The Experimental Process

Types of experiments, potential pitfalls of the experimental method.

The experimental method is a type of research procedure that involves manipulating variables to determine if there is a cause-and-effect relationship. The results obtained through the experimental method are useful but do not prove with 100% certainty that a singular cause always creates a specific effect. Instead, they show the probability that a cause will or will not lead to a particular effect.

At a Glance

While there are many different research techniques available, the experimental method allows researchers to look at cause-and-effect relationships. Using the experimental method, researchers randomly assign participants to a control or experimental group and manipulate levels of an independent variable. If changes in the independent variable lead to changes in the dependent variable, it indicates there is likely a causal relationship between them.

What Is the Experimental Method in Psychology?

The experimental method involves manipulating one variable to determine if this causes changes in another variable. This method relies on controlled research methods and random assignment of study subjects to test a hypothesis.

For example, researchers may want to learn how different visual patterns may impact our perception. Or they might wonder whether certain actions can improve memory. Experiments are conducted on many behavioral topics.

The scientific method forms the basis of the experimental method. This is a process used to determine the relationship between two variables—in this case, to explain human behavior .

Positivism is also important in the experimental method. It refers to factual knowledge that is obtained through observation, which is considered to be trustworthy.

When using the experimental method, researchers first identify and define key variables. Then they formulate a hypothesis, manipulate the variables, and collect data on the results. Unrelated or irrelevant variables are carefully controlled to minimize the potential impact on the experiment outcome.
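The random assignment step mentioned above can be sketched in a few lines. The function name `randomly_assign`, the participant labels, and the seed are hypothetical and chosen only for this example.

```python
import random


def randomly_assign(participants, seed=None):
    """Shuffle participants and split them into two equal-sized groups."""
    rng = random.Random(seed)
    shuffled = list(participants)  # copy so the caller's list is untouched
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return {"experimental": shuffled[:half], "control": shuffled[half:]}


# Twenty hypothetical participants; the seed makes the sketch repeatable.
participants = [f"participant_{i}" for i in range(1, 21)]
groups = randomly_assign(participants, seed=1)
print(len(groups["experimental"]), len(groups["control"]))
```

Because chance alone decides who lands in which group, pre-existing differences between participants should, on average, be balanced across the two groups.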

History of the Experimental Method

The idea of using experiments to better understand human psychology began toward the end of the nineteenth century. Wilhelm Wundt established the first formal laboratory in 1879.

Wundt is often called the father of experimental psychology. He believed that experiments could help explain how psychology works, and used this approach to study consciousness .

Wundt coined the term "physiological psychology." This is a hybrid of physiology and psychology, or how the body affects the brain.

Other early contributors to the development and evolution of experimental psychology as we know it today include:

- Gustav Fechner (1801-1887), who helped develop procedures for measuring sensations according to the size of the stimulus

- Hermann von Helmholtz (1821-1894), who analyzed philosophical assumptions through research in an attempt to arrive at scientific conclusions

- Franz Brentano (1838-1917), who called for a combination of first-person and third-person research methods when studying psychology

- Georg Elias Müller (1850-1934), who performed an early experiment on attitude which involved the sensory discrimination of weights and revealed how anticipation can affect this discrimination

Key Terms to Know

To understand how the experimental method works, it is important to know some key terms.

Dependent Variable

The dependent variable is the effect that the experimenter is measuring. If a researcher was investigating how sleep influences test scores, for example, the test scores would be the dependent variable.

Independent Variable

The independent variable is the variable that the experimenter manipulates. In the previous example, the amount of sleep an individual gets would be the independent variable.

Hypothesis

A hypothesis is a tentative statement or a guess about the possible relationship between two or more variables. In looking at how sleep influences test scores, the researcher might hypothesize that people who get more sleep will perform better on a math test the following day. The purpose of the experiment, then, is to either support or reject this hypothesis.

Operational Definitions

Operational definitions are necessary when performing an experiment. When we say that something is an independent or dependent variable, we must have a very clear and specific definition of the meaning and scope of that variable.

Extraneous Variables

Extraneous variables are other variables that may also affect the outcome of an experiment. Types of extraneous variables include participant variables, situational variables, demand characteristics, and experimenter effects. In some cases, researchers can take steps to control for extraneous variables.

Demand Characteristics

Demand characteristics are subtle hints that indicate what an experimenter is hoping to find in a psychology experiment. This can sometimes cause participants to alter their behavior, which can affect the results of the experiment.

Intervening Variables

Intervening variables are factors that can affect the relationship between two other variables.

Confounding Variables

Confounding variables are variables that can affect the dependent variable, but that experimenters cannot control for. Confounding variables can make it difficult to determine if the effect was due to changes in the independent variable or if the confounding variable may have played a role.

Psychologists, like other scientists, use the scientific method when conducting an experiment. The scientific method is a set of procedures and principles that guide how scientists develop research questions, collect data, and come to conclusions.

The five basic steps of the experimental process are:

- Identifying a problem to study

- Devising the research protocol

- Conducting the experiment

- Analyzing the data collected

- Sharing the findings (usually in writing or via presentation)

Most psychology students are expected to use the experimental method at some point in their academic careers. Learning how to conduct an experiment is important to understanding how psychologists support or refute theories in this field.

There are a few different types of experiments that researchers might use when studying psychology. Each has pros and cons depending on the participants being studied, the hypothesis, and the resources available to conduct the research.

Lab Experiments

Lab experiments are common in psychology because they allow experimenters more control over the variables. These experiments can also be easier for other researchers to replicate. The drawback of this research type is that what takes place in a lab is not always what takes place in the real world.

Field Experiments

Sometimes researchers opt to conduct their experiments in the field. For example, a social psychologist interested in researching prosocial behavior might have a person pretend to faint and observe how long it takes onlookers to respond.

This type of experiment can be a great way to see behavioral responses in realistic settings. But it is more difficult for researchers to control the many variables existing in these settings that could potentially influence the experiment's results.

Quasi-Experiments

While lab experiments are known as true experiments, researchers can also utilize a quasi-experiment. Quasi-experiments are often referred to as natural experiments because the researchers do not have true control over the independent variable.

A researcher looking at personality differences and birth order, for example, is not able to manipulate the independent variable in the situation (birth order). Participants also cannot be randomly assigned because they naturally fall into pre-existing groups based on their birth order.

So why would a researcher use a quasi-experiment? This is a good choice in situations where scientists are interested in studying phenomena in natural, real-world settings. It's also beneficial if there are limits on research funds or time.

Field experiments can be either quasi-experiments or true experiments.

Examples of the Experimental Method in Use

The experimental method can provide insight into human thoughts and behaviors. Researchers use experiments to study many aspects of psychology.

A 2019 study investigated whether splitting attention between electronic devices and classroom lectures had an effect on college students' learning abilities. It found that dividing attention between these two mediums did not affect lecture comprehension. However, it did impact long-term retention of the lecture information, which affected students' exam performance.

An experiment used participants' eye movements and electroencephalogram (EEG) data to better understand cognitive processing differences between experts and novices. It found that experts had higher power in their theta brain waves than novices, suggesting that they also had a higher cognitive load.

A study looked at whether chatting online with a computer via a chatbot changed the positive effects of emotional disclosure often received when talking with an actual human. It found that the effects were the same in both cases.

One experimental study evaluated whether exercise timing impacts information recall. It found that engaging in exercise prior to performing a memory task helped improve participants' short-term memory abilities.

Sometimes researchers use the experimental method to get a bigger-picture view of psychological behaviors and impacts. For example, one 2018 study examined several lab experiments to learn more about the impact of various environmental factors on building occupant perceptions.

A 2020 study set out to determine the role that sensation-seeking plays in political violence. This research found that sensation-seeking individuals have a higher propensity for engaging in political violence. It also found that providing access to a more peaceful, yet still exciting political group helps reduce this effect.

While the experimental method can be a valuable tool for learning more about psychology and its impacts, it also comes with a few pitfalls.

Experiments may produce artificial results, which are difficult to apply to real-world situations. Similarly, researcher bias can impact the data collected. Results may not be able to be reproduced, meaning the results have low reliability .

Since humans are unpredictable and their behavior can be subjective, it can be hard to measure responses in an experiment. In addition, political pressure may alter the results. The subjects may not be a good representation of the population, or groups used may not be comparable.

And finally, since researchers are human too, results may be degraded due to human error.

What This Means For You

Every psychological research method has its pros and cons. The experimental method can help establish cause and effect, and it's also beneficial when research funds are limited or time is of the essence.

At the same time, it's essential to be aware of this method's pitfalls, such as how biases can affect the results or the potential for low reliability. Keeping these in mind can help you review and assess research studies more accurately, giving you a better idea of whether the results can be trusted or have limitations.



Experimental Research: What it is + Types of designs

Any research conducted under scientifically acceptable conditions uses experimental methods. The success of experimental studies hinges on researchers confirming the change of a variable is based solely on the manipulation of the constant variable. The research should establish a notable cause and effect.

What is Experimental Research?

Experimental research is a study conducted with a scientific approach using two sets of variables. The first set acts as a constant, which you use to measure the differences of the second set. Quantitative research methods , for example, are experimental.

If you don’t have enough data to support your decisions, you must first determine the facts. This research gathers the data necessary to help you make better decisions.

You can conduct experimental research in the following situations:

- Time is a vital factor in establishing a relationship between cause and effect.

- Invariable behavior between cause and effect.

- You wish to understand the importance of cause and effect.

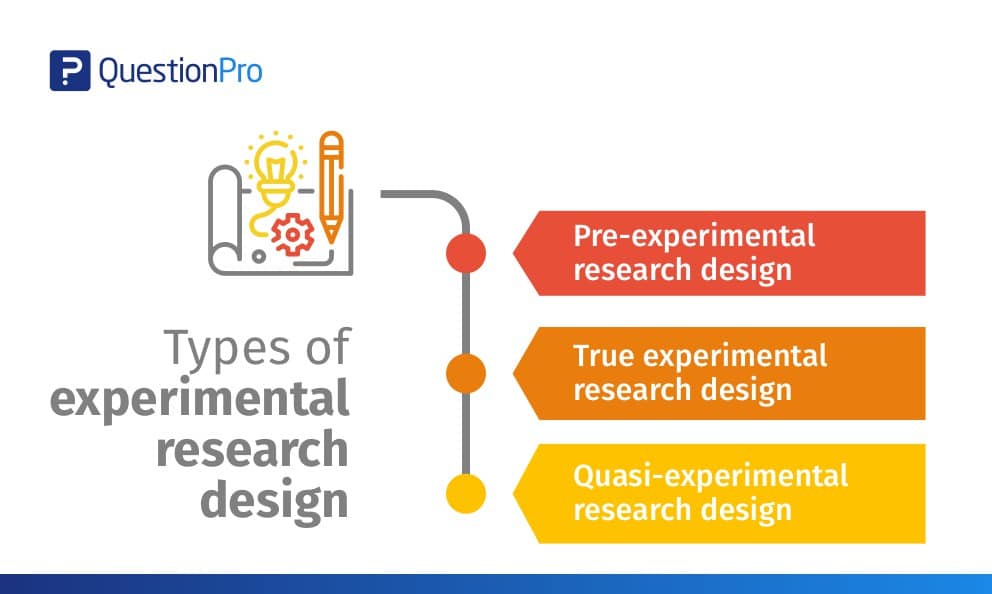

Experimental Research Design Types

The classic experimental design definition is: “The methods used to collect data in experimental studies.”

There are three primary types of experimental design:

- Pre-experimental research design

- True experimental research design

- Quasi-experimental research design

The way you classify research subjects based on conditions or groups determines the type of research design you should use.

1. Pre-Experimental Design

A group, or various groups, are kept under observation after implementing cause and effect factors. You’ll conduct this research to understand whether further investigation is necessary for these particular groups.

You can break down pre-experimental research further into three types:

- One-shot Case Study Research Design

- One-group Pretest-posttest Research Design

- Static-group Comparison

2. True Experimental Design

It relies on statistical analysis to prove or disprove a hypothesis, making it the most accurate form of research. Of the types of experimental design, only true design can establish a cause-effect relationship within a group. In a true experiment, three factors need to be satisfied:

- There is a Control Group, which won’t be subject to changes, and an Experimental Group, which will experience the changed variables.

- A variable that can be manipulated by the researcher

- Random distribution

This experimental research method commonly occurs in the physical sciences.

3. Quasi-Experimental Design

The word “Quasi” indicates similarity. A quasi-experimental design is similar to an experimental one, but it is not the same. The difference between the two is the assignment of a control group. In this research, an independent variable is manipulated, but the participants of a group are not randomly assigned. Quasi-research is used in field settings where random assignment is either irrelevant or not required.

Importance of Experimental Design

Experimental research is a powerful tool for understanding cause-and-effect relationships. It allows us to manipulate variables and observe the effects, which is crucial for understanding how different factors influence the outcome of a study.

But the importance of experimental research goes beyond that. It’s a critical method for many scientific and academic studies. It allows us to test theories, develop new products, and make groundbreaking discoveries.

For example, this research is essential for developing new drugs and medical treatments. Researchers can understand how a new drug works by manipulating dosage and administration variables and identifying potential side effects.

Similarly, experimental research is used in the field of psychology to test theories and understand human behavior. By manipulating variables such as stimuli, researchers can gain insights into how the brain works and identify new treatment options for mental health disorders.

It is also widely used in the field of education. It allows educators to test new teaching methods and identify what works best. By manipulating variables such as class size, teaching style, and curriculum, researchers can understand how students learn and identify new ways to improve educational outcomes.

In addition, experimental research is a powerful tool for businesses and organizations. By manipulating variables such as marketing strategies, product design, and customer service, companies can understand what works best and identify new opportunities for growth.

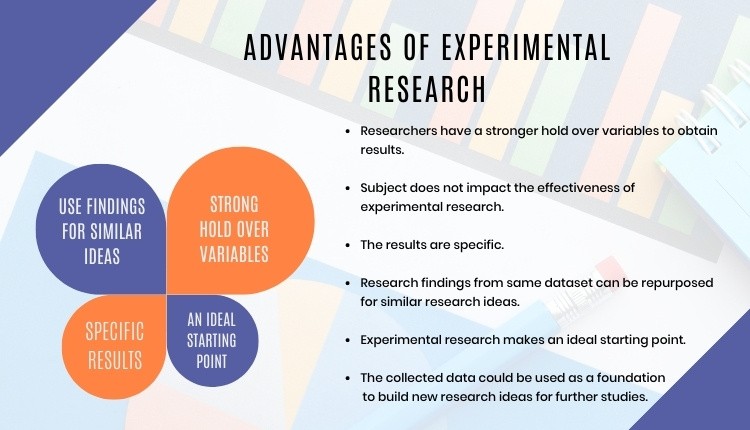

Advantages of Experimental Research

When talking about this research, we can think of human life. Babies do their own rudimentary experiments (such as putting objects in their mouths) to learn about the world around them, while older children and teens do experiments at school to learn more about science.

Early scientists used this research to test whether their hypotheses were correct. For example, Galileo Galilei and Antoine Lavoisier conducted various experiments to discover key concepts in physics and chemistry. The same is true of modern experts, who use this scientific method to see if new drugs are effective, discover treatments for diseases, and create new electronic devices (among others).

It’s vital to test new ideas or theories. Why put time, effort, and funding into something that may not work?

This research allows you to test your idea in a controlled environment before marketing. It also provides the best method to test your theory thanks to the following advantages:

- Researchers have a stronger hold over variables to obtain desired results.

- The subject or industry does not impact the effectiveness of experimental research. Any industry can implement it for research purposes.

- The results are specific.

- After analyzing the results, you can apply your findings to similar ideas or situations.

- You can identify cause-and-effect relationships. Researchers can then analyze these relationships further to develop more in-depth ideas.

- Experimental research makes an ideal starting point. The data you collect is a foundation for building more ideas and conducting more action research.

Whether you want to know how the public will react to a new product or if a certain food increases the chance of disease, experimental research is the best place to start.

Experimental Research Designs: Types, Examples & Methods

Experimental research is the most familiar type of research design for individuals in the physical sciences and a host of other fields. This is mainly because experimental research is a classical scientific experiment, similar to those performed in high school science classes.

Imagine taking 2 samples of the same plant and exposing one of them to sunlight, while the other is kept away from sunlight. Let the plant exposed to sunlight be called sample A, while the latter is called sample B.

If, at the end of the study, sample A has grown and sample B has died, even though both were watered regularly and otherwise given the same treatment, we can conclude that sunlight aids growth in similar plants.

What is Experimental Research?

Experimental research is a scientific approach to research, where one or more independent variables are manipulated and applied to one or more dependent variables to measure their effect on the latter. The effect of the independent variables on the dependent variables is usually observed and recorded over some time, to aid researchers in drawing a reasonable conclusion regarding the relationship between these 2 variable types.

The experimental research method is widely used in physical and social sciences, psychology, and education. It is based on the comparison between two or more groups with a straightforward logic, which may, however, be difficult to execute.

Most often associated with laboratory test procedures, experimental research designs involve collecting quantitative data and analyzing it statistically. This makes experimental research an example of a quantitative research method.

What are The Types of Experimental Research Design?

The types of experimental research design are determined by the way the researcher assigns subjects to different conditions and groups. There are 3 types: pre-experimental, quasi-experimental, and true experimental research.

Pre-experimental Research Design

In pre-experimental research design, either a single group or several groups are observed for the effect of an independent variable that is presumed to cause change. It is the simplest form of experimental research design and typically has no randomized control group.

Although very practical, pre-experimental research falls short of several of the criteria for a true experiment. The pre-experimental research design is further divided into three types:

- One-shot Case Study Research Design:

In this type of experimental study, only one dependent group or variable is considered. The study is carried out after some treatment which was presumed to cause change, making it a posttest study.

- One-group Pretest-posttest Research Design:

This research design combines a pretest and a posttest by testing a single group both before and after the treatment is administered. The pretest is given before the treatment begins and the posttest after it ends.

- Static-group Comparison:

In a static-group comparison study, 2 or more groups are placed under observation, where only one of the groups is subjected to some treatment while the other groups are held static. All the groups are post-tested, and the observed differences between the groups are assumed to be a result of the treatment.
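As a rough illustration of what each pre-experimental design lets you compute, the sketch below summarizes a one-group pretest-posttest study as a mean gain and a static-group comparison as a difference in posttest means. The function names and the scores are hypothetical and chosen only for illustration. Neither number shows that the treatment caused the change, since these designs have no randomized control group.

```python
from statistics import mean


def pretest_posttest_gain(pretest, posttest):
    """Mean change per subject in a one-group pretest-posttest design.

    Scores must be listed in the same subject order in both lists.
    """
    if len(pretest) != len(posttest):
        raise ValueError("each subject needs both a pretest and a posttest score")
    return mean(after - before for before, after in zip(pretest, posttest))


def static_group_difference(treated_posttest, untreated_posttest):
    """Difference in mean posttest score between a treated and an untreated group."""
    return mean(treated_posttest) - mean(untreated_posttest)


# Hypothetical scores, for illustration only.
pretest = [52, 60, 47, 71]
posttest = [58, 66, 50, 78]
untreated = [55, 61, 49, 71]

print("Mean gain:", pretest_posttest_gain(pretest, posttest))
print("Static-group difference:", static_group_difference(posttest, untreated))
```

A one-shot case study would report only the mean posttest score, which is why it supports the weakest conclusions of the three.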

Quasi-experimental Research Design

The word “quasi” means partial, half, or pseudo. Quasi-experimental research therefore resembles true experimental research but is not the same. In quasi-experiments, the participants are not randomly assigned, so this design is used in settings where randomization is difficult or impossible.

This is very common in educational research, where administrators are unwilling to allow the random selection of students for experimental samples.

Some examples of quasi-experimental research design include the time series design, the nonequivalent control group design, and the counterbalanced design.

True Experimental Research Design

The true experimental research design relies on statistical analysis to support or reject a hypothesis. It is the most accurate type of experimental design and may be carried out with or without a pretest on at least 2 randomly assigned groups of subjects.

The true experimental research design must contain a control group and a variable that can be manipulated by the researcher, and subjects must be assigned to groups at random. True experimental designs include:

- The posttest-only Control Group Design: In this design, subjects are randomly selected and assigned to the 2 groups (control and experimental), and only the experimental group is treated. After close observation, both groups are post-tested, and a conclusion is drawn from the difference between these groups.

- The pretest-posttest Control Group Design: For this control group design, subjects are randomly assigned to the 2 groups, both are pretested, but only the experimental group is treated. After close observation, both groups are post-tested to measure the degree of change in each group.

- Solomon four-group Design: This is the combination of the posttest-only and the pretest-posttest control group designs. In this case, the randomly selected subjects are placed into 4 groups.

The first two of these groups are tested using the posttest-only method, while the other two are tested using the pretest-posttest method.
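The random assignment behind a Solomon four-group design can be sketched in a few lines. This is a minimal sketch rather than a standard implementation: the group labels `G1` to `G4`, the function name `assign_solomon`, and the round-robin dealing after a shuffle are assumptions made for illustration. Following the description above, the first two groups are posttest-only and the other two are pretest-posttest, and all four groups receive a posttest.

```python
import random

# Hypothetical labels: G1/G2 are posttest-only, G3/G4 are pretest-posttest.
SOLOMON_GROUPS = (
    {"name": "G1", "pretest": False, "treatment": True},
    {"name": "G2", "pretest": False, "treatment": False},
    {"name": "G3", "pretest": True, "treatment": True},
    {"name": "G4", "pretest": True, "treatment": False},
)


def assign_solomon(subjects, seed=None):
    """Randomly split subjects into the four Solomon groups, as evenly as possible."""
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    shuffled = list(subjects)
    rng.shuffle(shuffled)
    assignment = {group["name"]: [] for group in SOLOMON_GROUPS}
    # Deal the shuffled subjects out in turn, like cards, so group sizes differ by at most 1.
    for index, subject in enumerate(shuffled):
        group = SOLOMON_GROUPS[index % len(SOLOMON_GROUPS)]
        assignment[group["name"]].append(subject)
    return assignment


subjects = [f"S{i}" for i in range(1, 21)]
for name, members in assign_solomon(subjects, seed=42).items():
    print(name, members)
```

Shuffling first and dealing afterwards keeps the groups balanced in size while leaving group membership to chance, which is the property that separates a true experiment from a quasi-experiment.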

Examples of Experimental Research

Examples of experimental research differ depending on the type of experimental research design being considered. The most basic example of experimental research is the laboratory experiment, which may differ in nature depending on the subject of research.

Administering Exams After The End of Semester

During the semester, students in a class are lectured on particular courses and an exam is administered at the end of the semester. In this case, the students are the subjects, their exam scores are the dependent variable, and the lectures are the independent variable (the treatment) applied to the subjects.

Only one group of carefully selected subjects is considered in this research, making it a pre-experimental research design example. Tests are also carried out only at the end of the semester, and not at the beginning, so we can conclude that it is a one-shot case study.

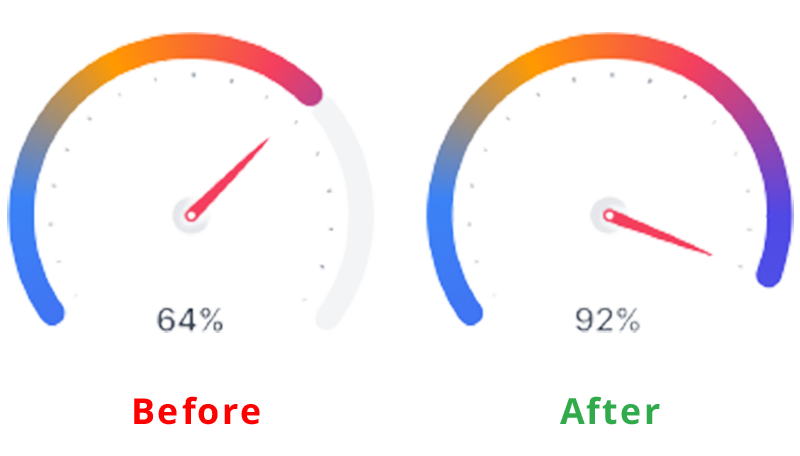

Employee Skill Evaluation

Before employing a job seeker, organizations conduct tests that are used to screen out less qualified candidates from the pool of qualified applicants. This way, organizations can determine an employee’s skill set at the point of employment.

In the course of employment, organizations also carry out employee training to improve employee productivity and generally grow the organization. Further evaluation is carried out at the end of each training to test the impact of the training on employee skills, and test for improvement.

Here, the subject is the employee, while the treatment is the training conducted. Because the same employees are tested before and after training and there is no separate control group, this is an example of a one-group pretest-posttest design.

Evaluation of Teaching Method

Let us consider an academic institution that wants to evaluate the teaching methods of 2 teachers to determine which is best. Imagine a case where the students assigned to each teacher are carefully selected, perhaps because of personal requests by parents or because of the students' behavior and ability.

This is an example of a nonequivalent group design because the groups are not equivalent. By evaluating the effectiveness of each teacher’s teaching method this way, we may draw a conclusion after a posttest has been carried out.

However, the conclusion may be influenced by factors like a student's natural ability. For example, a very smart student will grasp the material more easily than his or her peers irrespective of the method of teaching.

What are the Characteristics of Experimental Research?

Experimental research contains dependent, independent and extraneous variables. The dependent variables are the outcomes measured on the subjects of the research to see whether the treatment had an effect.

The independent variables are the experimental treatments that the researcher manipulates and applies to the subjects. Extraneous variables, on the other hand, are other factors affecting the experiment that may also contribute to the change.

The setting is where the experiment is carried out. Many experiments are carried out in the laboratory, where control can be exerted on the extraneous variables, thereby minimizing their influence.

Other experiments are carried out in a less controllable setting. The choice of setting used in research depends on the nature of the experiment being carried out.

- Multivariable

Experimental research may include multiple independent variables, e.g. time, skills, test scores, etc.

Why Use Experimental Research Design?

Experimental research design is mainly used in physical sciences, social sciences, education, and psychology. It is used to make predictions and draw conclusions on a subject matter.

Some uses of experimental research design are highlighted below.

- Medicine: Experimental research is used to identify the proper treatment for diseases. In most cases, rather than directly using patients as the research subjects, researchers take a sample of the bacteria from the patient’s body and treat it with the antibacterial agent being developed.

The changes observed during this period are recorded and evaluated to determine its effectiveness. This process can be carried out using different experimental research methods.

- Education: Aside from science subjects like Chemistry and Physics, which involve teaching students how to perform experimental research, it can also be used to improve the standard of an academic institution. This includes testing students’ knowledge on different topics, coming up with better teaching methods, and implementing other programs that will aid student learning.

- Human Behavior: Social scientists are the ones who mostly use experimental research to test human behaviour. For example, consider 2 people randomly chosen to be the subject of the social interaction research where one person is placed in a room without human interaction for 1 year.

The other person is placed in a room with a few other people, enjoying human interaction. There will be a difference in their behaviour at the end of the experiment.

- UI/UX: During the product development phase, one of the major aims of the product team is to create a great user experience with the product. Therefore, before launching the final product design, potential users are brought in to interact with the product.

For example, when it is difficult to choose how to position a button or feature on the app interface, a random sample of product testers is asked to try the 2 versions, and the way the button positioning influences user interaction is recorded.
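One common way to analyze a button-position comparison like this is a two-proportion z-test. The sketch below is an illustration under stated assumptions, not a prescribed method: it assumes each tester sees only one of the 2 layouts, that the recorded interaction is a simple yes/no (whether the tester clicked the button), and that the samples are large enough for the normal approximation. The function name and the counts are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(clicks_a, testers_a, clicks_b, testers_b):
    """Return (z, two-sided p-value) for the difference in click rate, B minus A."""
    if testers_a <= 0 or testers_b <= 0:
        raise ValueError("each layout needs at least one tester")
    rate_a = clicks_a / testers_a
    rate_b = clicks_b / testers_b
    # Pooled click rate under the null hypothesis that both layouts perform the same.
    pooled = (clicks_a + clicks_b) / (testers_a + testers_b)
    std_error = sqrt(pooled * (1 - pooled) * (1 / testers_a + 1 / testers_b))
    if std_error == 0:
        raise ValueError("click rates are all 0 or all 1, so the test is undefined")
    z = (rate_b - rate_a) / std_error
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical counts: 500 testers per layout.
z, p = two_proportion_z_test(clicks_a=50, testers_a=500, clicks_b=80, testers_b=500)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference in click rate is unlikely to be due to chance alone, but the conclusion is only as good as the random assignment of testers to layouts.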

What are the Disadvantages of Experimental Research?

- It is highly prone to human error because it depends on variable control, which may not be properly implemented. These errors could undermine the validity of the experiment and the research being conducted.

- Exerting control over extraneous variables may create unrealistic situations. Eliminating real-life variables may result in inaccurate conclusions. It may also allow researchers to control the variables to suit their personal preferences.

- It is a time-consuming process. Much time is spent on testing dependent variables and waiting for the effect of the manipulation of independent variables to manifest.

- It is expensive.